Data integration basics

Take any data project and data integration sits at the centre of it, consuming the bulk of the engineering effort (often more than 70% of project time). This is because data integration involves numerous tasks, such as data collection, cleansing, transformation, and consolidation, that are critical for ensuring the data is accurate and usable for analysis or machine learning models. It is highly likely to be the single most expensive line item in your D&A budget. Let’s have a look at why.

Simply put, data integration is the process of combining data from different sources into a single, unified view. It involves taking data from different systems and blending it together. This makes the data more accessible and useful, as it’s all in one place and in a consistent format. A typical example: a business integrates sales data from its online store with customer information from its CRM system. The result is a comprehensive view of sales performance and customer behaviour.
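To make the online store and CRM example concrete, here is a minimal sketch in plain Python. The record layouts, field names and the unified_view helper are all assumptions made up for illustration; real sources would be databases, APIs or files rather than in-memory lists.

```python
from collections import defaultdict

# Hypothetical sample records; the field names are assumptions for illustration.
online_sales = [
    {"order_id": 1, "customer_id": "C1", "amount": 120.0},
    {"order_id": 2, "customer_id": "C2", "amount": 80.0},
    {"order_id": 3, "customer_id": "C1", "amount": 45.5},
]
crm_customers = [
    {"customer_id": "C1", "name": "Ada", "segment": "Retail"},
    {"customer_id": "C2", "name": "Grace", "segment": "Wholesale"},
]


def unified_view(sales, customers):
    """Join sales to CRM records on customer_id and total the spend per customer."""
    totals = defaultdict(float)
    for sale in sales:
        totals[sale["customer_id"]] += sale["amount"]
    # One row per CRM customer, enriched with total spend (0.0 if no sales).
    return [
        {**customer, "total_spend": totals.get(customer["customer_id"], 0.0)}
        for customer in customers
    ]


view = unified_view(online_sales, crm_customers)
for row in view:
    print(row)
```

Each output row carries both the CRM attributes and the sales total, which is the "single, unified view" in miniature.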

Data integration is the heart of data engineering. The process is inherently complex and involves the amalgamation of data from disparate sources, ensuring its accessibility and utility across an organisation.

What’s in data integration?

Let’s begin at the source, so to speak, where the data originates. One of the principal challenges in data integration is the diversity of data formats and structures. Data, sourced from various systems, often exists in different formats, e.g. structured, semi-structured, and unstructured data. This variance necessitates sophisticated mechanisms for data transformation and normalisation.
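As a rough illustration of what normalisation means in practice, the sketch below maps a structured CSV export and a semi-structured JSON payload onto one common schema. The sample data, field names and target schema are assumptions, not taken from any particular system.

```python
import csv
import io
import json

# Hypothetical inputs: the same kind of order data arriving in two formats.
CSV_EXPORT = "order_id,amount,currency\n1,120.00,GBP\n2,80.50,GBP\n"
JSON_EXPORT = '[{"id": 3, "total": {"value": 45.5, "ccy": "gbp"}}]'


def from_csv(text):
    """Structured source: every value arrives as a string, so cast explicitly."""
    for row in csv.DictReader(io.StringIO(text)):
        yield {
            "order_id": int(row["order_id"]),
            "amount": float(row["amount"]),
            "currency": row["currency"].upper(),
        }


def from_json(text):
    """Semi-structured source: nested fields are flattened to the same schema."""
    for item in json.loads(text):
        yield {
            "order_id": int(item["id"]),
            "amount": float(item["total"]["value"]),
            "currency": item["total"]["ccy"].upper(),
        }


# Both sources now share the keys order_id, amount and currency.
normalised = list(from_csv(CSV_EXPORT)) + list(from_json(JSON_EXPORT))
for record in normalised:
    print(record)
```

Every new source needs its own small adapter like these, which is one reason the effort grows with the number of formats.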

On top of that, data quality and consistency are paramount concerns. Is our data garbage again? Can we trust it today? Ensuring that integrated data maintains its accuracy and reliability poses a significant hurdle. We need to apply rigorous validation and cleansing processes across all steps of the pipeline (often manually!).
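A validation step can be sketched as a function that lists the problems in each record, with clean and rejected records routed separately. The rules below (non-empty customer_id, numeric non-negative amount) are illustrative assumptions; real pipelines carry many more checks.

```python
def validate(record):
    """Return a list of problems found in one record (empty list means clean)."""
    problems = []
    if not record.get("customer_id"):
        problems.append("missing customer_id")
    amount = record.get("amount")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(amount, bool) or not isinstance(amount, (int, float)):
        problems.append("amount is not numeric")
    elif amount < 0:
        problems.append("amount is negative")
    return problems


def split_clean_and_rejected(records):
    """Route each record to the clean list or to the rejected list with reasons."""
    clean, rejected = [], []
    for record in records:
        problems = validate(record)
        if problems:
            rejected.append({"record": record, "problems": problems})
        else:
            clean.append(record)
    return clean, rejected


# Hypothetical sample data for illustration.
records = [
    {"customer_id": "C1", "amount": 120.0},
    {"customer_id": "", "amount": 80.0},
    {"customer_id": "C3", "amount": "n/a"},
    {"customer_id": "C4", "amount": -5},
]
clean, rejected = split_clean_and_rejected(records)
print(len(clean), "clean,", len(rejected), "rejected")
```

Keeping the rejection reasons alongside the record makes it easier to answer "can we trust it today?" without digging through logs.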

Then comes the business logic. We take the raw data and shape it to fit the needs of the organisation. What are our sales for the week? What are the key trends? Or whatever else you are trying to answer. The business logic can be applied “in-transit” or in a warehouse.
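The "sales for the week" question can be sketched as a simple aggregation. The order records are hypothetical, and grouping by ISO week is an assumption; a business may well define its reporting week differently.

```python
from collections import defaultdict
from datetime import date

# Hypothetical orders; 1 January 2024 was a Monday.
orders = [
    {"order_date": date(2024, 1, 1), "amount": 100.0},
    {"order_date": date(2024, 1, 3), "amount": 50.0},
    {"order_date": date(2024, 1, 8), "amount": 75.0},
]


def weekly_sales(rows):
    """Sum order amounts per (ISO year, ISO week)."""
    totals = defaultdict(float)
    for row in rows:
        iso = row["order_date"].isocalendar()
        totals[(iso[0], iso[1])] += row["amount"]
    return dict(totals)


weekly = weekly_sales(orders)
for (year, week), total in sorted(weekly.items()):
    print(f"{year}-W{week:02d}: {total:.2f}")
```

The same function could run "in-transit" on each batch as it arrives or as a query inside the warehouse; the logic is the same, only where it executes changes.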

Once the transformations and quality checks have been done, the data needs to be persisted somewhere the business can safely consume it. These days that is usually somewhere in the Cloud, but not always. And not necessarily in a single warehouse either! The devs must keep the data consistent across all systems, or you end up with disparities.

Data integration pipelines must be performant, efficient, and resilient. Especially if they support mission-critical decisions. The last thing you need is for the pipeline to fail in the middle of your busy sales period and lose you millions in revenue.

Time is money

The time required to cut through the integration challenges varies significantly based on the complexity of the data sources, the volume of data, and the specific requirements of the integration.

The creation of a data pipeline typically involves a team of professionals. This team comprises data engineers, data architects, and data analysts or scientists, depending on the pipeline’s complexity and purpose. Typically, three to five people are involved in the development cycle.

The duration for building a data pipeline also varies. Simple pipelines can be developed in a few weeks, while more complex ones, especially those dealing with real-time data or integration with multiple systems, can take several months.

The engineers always have to be in top form when creating and maintaining production data pipelines. They are constantly neck-deep in development, optimisation and troubleshooting work. They spend most of their time wrangling data pipelines. A hive of activity.

Yet, all this herculean effort doesn’t come cheap. It is costing your business big $$. And it’s not necessarily giving you the full value for money.

Here’s a rough estimate

The average hourly rate for a data engineer in the UK ranges from £40 to £70. Assume a moderately complex pipeline requiring a team of four professionals working for three months (about 480 hours each at a 40-hour working week, or 1,920 hours in total). The labour cost alone could range from £76,800 to £134,400. This is just the labour cost for a single pipeline, excluding ongoing patching and maintenance work.
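The arithmetic behind that estimate is easy to reproduce and adapt to your own numbers. The sketch below assumes three months is roughly 12 working weeks (which is how the 480-hour figure comes about) and, like the estimate itself, applies the data engineer rate to every team member.

```python
HOURS_PER_WEEK = 40
WEEKS = 12          # assumption: three months is roughly 12 working weeks
TEAM_SIZE = 4
RATE_LOW_GBP = 40   # hourly rates quoted in the text
RATE_HIGH_GBP = 70

hours_per_person = HOURS_PER_WEEK * WEEKS    # 480 hours each
team_hours = hours_per_person * TEAM_SIZE    # 1,920 hours in total


def labour_cost(hourly_rate):
    """Labour cost in GBP for the whole team at a single hourly rate."""
    return team_hours * hourly_rate


low = labour_cost(RATE_LOW_GBP)
high = labour_cost(RATE_HIGH_GBP)
print(f"{team_hours} team hours: £{low:,} to £{high:,}")
```

Swap in your own team size, duration and rates to get a first-pass figure for a pipeline of your own.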

Even if you only use open-source tools, you will still be paying out that amount in labour costs.

One project we did had seventeen pipelines (including real-time streaming), so you can imagine the headline number the company had been quoted on the market for the work.

Build vs buy

As you can see, data integration work doesn’t come cheap. Production data pipelines are not easy to develop, update and maintain from scratch and require valuable engineering resources. You must simplify, automate and accelerate the integration tasks as much as possible.

The rule of thumb should always be to use the tech that minimises your total data integration cost. Remember, free software doesn’t always mean you are saving money if it takes a lot of dev time to work with it.

Data pipelines are not a source of your competitive advantage. They are a means to an analytical end. Generic, commoditised. There is no magic to them. Extract the data, apply business logic, check for quality, monitor and optimise performance, test and deposit the processed data somewhere for consumption. Batch or real-time – same principles apply. Nothing special, just grunt work.
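Stripped to its bones, that sequence looks the same in almost every pipeline. The skeleton below is a deliberately simplified sketch: the function names, the sample rows and the in-memory "warehouse" list are assumptions standing in for real sources, rules and targets.

```python
def extract():
    """Stand-in for reading from a real source system."""
    return [
        {"sku": "A", "qty": 2, "unit_price": 10.0},
        {"sku": "B", "qty": -1, "unit_price": 5.0},  # bad row: negative quantity
    ]


def transform(rows):
    """Business logic: derive revenue per order line."""
    return [{**row, "revenue": row["qty"] * row["unit_price"]} for row in rows]


def quality_check(rows):
    """Keep only rows that pass a basic sanity rule."""
    return [row for row in rows if row["qty"] > 0]


def load(rows, target):
    """Stand-in for writing to a warehouse; returns the number of rows written."""
    target.extend(rows)
    return len(rows)


def run_pipeline(target):
    return load(quality_check(transform(extract())), target)


warehouse = []
loaded = run_pipeline(warehouse)
print(f"loaded {loaded} row(s):", warehouse)
```

The shape barely changes from one pipeline to the next, which is the point: the effort goes into repeating and hardening this pattern, not into inventing it.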

So, you should be spending the least amount of time possible on developing and maintaining your data pipelines. The business needs the data asap; it cannot wait months at a time for a new data feed. Each extra hour you spend on the task is costing your organisation money. Automate the heck out of the process.

Data integration is where you can save the big $$

Do the maths. If the cost of the software is materially lower than the developer effort it saves, make the move. If your integration effort step-changes for the better (e.g. by >70% with IOblend), if your dev time goes down from three months to two weeks, and if the number of devs required for the job halves, you should be asking for quotes asap.
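Here is one way to do that maths, as a sketch. It reuses the scenario in the text (four devs for three months versus two devs for two weeks) and the £40 to £70 hourly rates from the earlier estimate. The licence cost is a parameter you supply from a real quote; the £20,000 used in the last line is a made-up placeholder, not a price for any product.

```python
HOURS_PER_WEEK = 40


def labour_cost(devs, weeks, hourly_rate):
    """Labour cost in GBP for a team working full weeks at one hourly rate."""
    return devs * weeks * HOURS_PER_WEEK * hourly_rate


def net_saving(hourly_rate, licence_cost):
    """Saving per pipeline from buying a tool rather than building by hand."""
    # Scenario from the text: three months (taken as 12 weeks) with four devs...
    build = labour_cost(devs=4, weeks=12, hourly_rate=hourly_rate)
    # ...versus two weeks with half the devs, plus the cost of the software.
    buy = labour_cost(devs=2, weeks=2, hourly_rate=hourly_rate) + licence_cost
    return build - buy


# With a licence cost of zero, the saving equals the break-even licence price.
print("break-even at £40/h:", net_saving(hourly_rate=40, licence_cost=0))
print("break-even at £70/h:", net_saving(hourly_rate=70, licence_cost=0))
# Hypothetical placeholder licence cost, for illustration only.
print("saving with a £20,000 licence:", net_saving(hourly_rate=40, licence_cost=20_000))
```

If the quote you receive is below the break-even figure for your own rates and team size, buying is the cheaper route for that pipeline.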

The time and money you free up from the integration work will allow you to complete more projects faster. Your business gets the benefit of new insights and a welcome cost saving. A win-win.

If you are a data consultancy, you win more business by delivering projects faster, cheaper and with fewer resources by using automated integration tools. Especially in the current times of tough budget cuts.

Massively simplified data integration

We developed IOblend after years of going through the same expensive motions of manually building and maintaining data integration pipelines. A never-ending cycle of financial pain, project delays and management frustration. We wanted to simplify, standardise and commoditise the engineering effort to remove as much of that pain and cost as possible.

IOblend presents a transformative approach to data integration, enhancing the efficiency and reducing the complexity traditionally associated with such processes. We strongly believe in the “build fast, build well” principle.

One of the key strengths of IOblend is its ability to automate many aspects of data integration. This includes data lineage, error management, CDC, SCD, archiving, metadata management and more. All the functionality you need for a production-grade data integration pipeline with none of the time-sapping development complexity. The tool handles both real-time streaming and batch data in the same manner, making it extremely versatile and cost-effective.

IOblend represents a significant leap forward in data integration efficiency. Don’t take our word for it, try it for yourself. We have a FREE Developer Edition that has all the functionality, so you can experience the capabilities at your leisure.

Connect with us. Let’s chat and we can show you how you can get ahead of your development work and save a fortune in the process.

IOblend presents a ground-breaking approach to IoT and data integration, revolutionizing the way businesses handle their data. It’s an all-in-one data integration accelerator, boasting real-time, production-grade, managed Apache Spark™ data pipelines that can be set up in mere minutes. This facilitates a massive acceleration in data migration projects, whether from on-prem to cloud or between clouds, thanks to its low code/no code development and automated data management and governance.

IOblend also simplifies the integration of streaming and batch data through Kappa architecture, significantly boosting the efficiency of operational analytics and MLOps. Its system enables the robust and cost-effective delivery of both centralized and federated data architectures, with low latency and massively parallelized data processing, capable of handling over 10 million transactions per second. Additionally, IOblend integrates seamlessly with leading cloud services like Snowflake and Microsoft Azure, underscoring its versatility and broad applicability in various data environments.

At its core, IOblend is an end-to-end enterprise data integration solution built with DataOps capability. It stands out as a versatile ETL product for building and managing data estates with high-grade data flows. The platform powers operational analytics and AI initiatives, drastically reducing the costs and development efforts associated with data projects and data science ventures. It’s engineered to connect to any source, perform in-memory transformations of streaming and batch data, and direct the results to any destination with minimal effort.

IOblend’s use cases are diverse and impactful. It streams live data from factories to automated forecasting models and channels data from IoT sensors to real-time monitoring applications, enabling automated decision-making based on live inputs and historical statistics. Additionally, it handles the movement of production-grade streaming and batch data to and from cloud data warehouses and lakes, powers data exchanges, and feeds applications with data that adheres to complex business rules and governance policies.

The platform comprises two core components: the IOblend Designer and the IOblend Engine. The IOblend Designer is a desktop GUI used for designing, building, and testing data pipeline DAGs, producing metadata that describes the data pipelines. The IOblend Engine, the heart of the system, converts this metadata into Spark streaming jobs executed on any Spark cluster. Available in Developer and Enterprise suites, IOblend supports both local and remote engine operations, catering to a wide range of development and operational needs. It also facilitates collaborative development and pipeline versioning, making it a robust tool for modern data management and analytics.

Continuous Data Replication for DR and Continuity

Continuous Data Replication: for Business Continuity and DR 📝 Did you know? According to industry studies, the average cost of IT downtime is approximately £4,500 per minute. For a large enterprise, a single hour of data loss or system unavailability can translate into millions in lost revenue, legal penalties, and irreparable brand damage. The Pulse of Availability Continuous

Smart Meter Data: Billing to Forecasting

Utilities: Smart Meter Data to Billing and Demand Forecasting 📋 Did You Know? The global roll-out of smart meters generates more data in a single day than most utility companies used to collect in an entire decade. While traditional meters were read once a month, or even once a quarter, smart meters transmit data at intervals

SCADA Streams to Reliability Analytics

Energy: SCADA Streams to Reliability Analytics 🔌 Did you know? The average modern wind turbine or smart substation generates roughly 1 to 2 terabytes of data every month. However, historically, less than 5% of that sensor data was actually used for decision-making. Most of it was simply discarded or “siloed” in SCADA systems, serving as a

Building Live ETA Pipelines for Fleet Operations

Logistics: Live ETA Prediction Pipelines from Fleet + Orders 🚚 Did you know? The “Last Mile” is famously the most expensive and inefficient part of the supply chain, often accounting for up to 53% of total shipping costs. The Evolution of Real-Time Logistics Live ETA (Estimated Time of Arrival) prediction pipelines represent the shift from reactive

DB2 CDC to Lakehouse Without Re-Platforming

From DB2 to Lakehouse: Real-Time CDC Without Re-Platforming 💻 Did you know? Mainframe systems like DB2 still process approximately 30 billion business transactions every single day. Despite the rush toward modern cloud architectures, the world’s most critical financial and logistical data often resides in these “legacy” environments, making them the silent engines of the global economy.

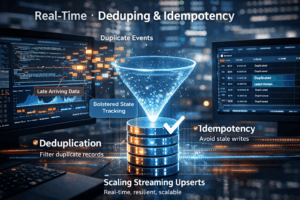

Real-Time Upserts: Deduping and Idempotency

Streaming Upserts Done Right: Deduping and Idempotency at Scale 💻 Did you know? In many high-velocity streaming environments, the “same” event can be sent or processed multiple times due to network retries or distributed system failures. The Art of the Upsert At its core, a streaming upsert (a portmanteau of “update” and “insert”) is the process of synchronising incoming data with an existing