Data Syncing: Automated Data Integration

Data syncing is a crucial aspect of modern data management. It keeps data consistent and up to date across sources, applications, and devices. It matters more than ever given the growing number of data sources and the demand for accurate data.

Properly implemented data synchronisation can improve many business areas: logistics, sales, order management, invoice accuracy, and reputation management, to name a few. Synchronisation is crucial not just for operational efficiency but also for good data governance.

What is Data Synchronisation?

Data syncing is all about maintaining consistency of data across connected systems/stores. As the name implies, it’s an ongoing process, which is equally applicable to new and historical data. Businesses use numerous software tools, dashboards, databases, etc. Synchronisation helps prevent databases from becoming disjointed and disorganised by keeping them in continuous communication.

Data syncing is essential for maintaining accuracy, consistency, and privacy in data management, especially given that even minor data errors can significantly impact decision-making. For that reason, data syncing involves validating and cleaning data and checking it for errors, duplication, and inconsistency before use.
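To make the validation and de-duplication step concrete, here is a minimal Python sketch. The `Customer` fields, the validation rules and the function names are assumptions made up for illustration; real rules depend entirely on your own data.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Customer:
    # Hypothetical fields, chosen only for this illustration.
    customer_id: str
    email: str
    postcode: str


def clean(record: dict) -> Optional[Customer]:
    """Normalise one raw record; return None if it fails validation."""
    customer_id = str(record.get("customer_id", "")).strip()
    email = str(record.get("email", "")).strip().lower()
    postcode = str(record.get("postcode", "")).strip().upper()
    # Assumed rules: an ID must be present and the email must contain "@".
    if not customer_id or "@" not in email:
        return None
    return Customer(customer_id, email, postcode)


def prepare(records: list) -> tuple:
    """Split raw records into clean, de-duplicated rows and rejects."""
    seen = {}
    rejects = []
    for raw in records:
        customer = clean(raw)
        if customer is None:
            rejects.append(raw)
        else:
            # On duplicate IDs the later record replaces the earlier one.
            seen[customer.customer_id] = customer
    return list(seen.values()), rejects
```

Keeping the rejected rows rather than silently dropping them makes it possible to review what failed validation and why.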

Data synchronisation benefits a wide range of stakeholders:

- Customers receive tailored product information and services.

- Business users can interact with updated information in real-time, globally.

- Executives make strategic decisions based on the latest data.

- Stockholders stay informed about their business interests.

- Manufacturers and distributors access up-to-date design, production, and marketing information.

Here’s one use case we worked on recently. A company had five separate systems containing (among other data) customer details. The data in each system was used for a different analytical purpose, like sales trends, CRM, discounts and billing. If a customer moved house or changed name, only one or two systems would record the update, depending on where the change was picked up. The company spent significant time reconciling discrepancies, and generally only once a problem had surfaced (e.g. unpaid bills). Plenty of customer data was years out of date.

Data Synchronisation vs Data Integration

While often used interchangeably with data integration, synchronisation is a specific type of integration. The key difference is maintaining ongoing consistency between datasets. Integration means combining data in general (static and events) for regular and ad-hoc analytics. But synchronisation assumes continuous automated communication between systems/databases.

How Data Synchronisation is Achieved

There are several methods and technologies used to achieve this, including:

Batch Synchronisation: This involves periodically updating data at set intervals. It’s useful for non-time-sensitive data and can reduce the load on networks and systems.
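One common way to run a batch sync is to remember a "high-water mark" timestamp and copy only rows changed since the previous run. The sketch below assumes each source row carries hypothetical `id` and `updated_at` fields, and uses a plain dictionary to stand in for the target system.

```python
from datetime import datetime


def batch_sync(source_rows, target, last_synced_at):
    """Copy rows changed since the last run into `target` (a dict keyed by id).

    Returns the new high-water mark to store for the next scheduled run.
    """
    newest = last_synced_at
    for row in source_rows:
        if row["updated_at"] > last_synced_at:
            target[row["id"]] = dict(row)  # copy so the target owns its data
            newest = max(newest, row["updated_at"])
    return newest


# Usage: a scheduler (cron, for example) would call this at each interval.
source = [
    {"id": 1, "name": "a", "updated_at": datetime(2023, 12, 31)},
    {"id": 2, "name": "b", "updated_at": datetime(2024, 1, 2)},
]
target = {}
watermark = batch_sync(source, target, datetime(2024, 1, 1))
```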

Real-Time Synchronisation: This method keeps data in sync almost instantaneously. It is more complex and resource-intensive but is essential for applications that always require up-to-date information, such as financial trading platforms.

Bidirectional Synchronisation: In this approach, changes made in one database or system are replicated in the other, and vice versa. This is useful for systems where data input and updates occur at multiple points.

Conflict Resolution and Version Control: When data is edited concurrently in different locations, conflicts may arise. Resolving these conflicts requires rules or algorithms to determine which version of the data is kept. Software development uses version control systems for this purpose.
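As an illustration of such a rule, the sketch below implements "last write wins", with ties broken by a system priority list. This is only one possible policy, and the `Versioned` type and system names are assumptions for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Versioned:
    value: str
    updated_at: float  # epoch seconds of the edit
    source: str        # name of the system that made the edit


def resolve(a: Versioned, b: Versioned, priority: tuple = ()) -> Versioned:
    """Last write wins; ties go to the system listed first in `priority`."""
    if a.updated_at != b.updated_at:
        return a if a.updated_at > b.updated_at else b

    def rank(v: Versioned) -> int:
        # Systems missing from the priority list rank last.
        return priority.index(v.source) if v.source in priority else len(priority)

    return a if rank(a) <= rank(b) else b


crm = Versioned("1 High St", 100.0, "crm")
billing = Versioned("2 Low Rd", 200.0, "billing")
winner = resolve(crm, billing)  # billing wins, as it was edited later
```

Last write wins is simple, but it relies on clocks being comparable across systems and it discards the losing edit, so it is worth checking whether that is acceptable for the data in question.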

Change Data Capture (CDC): CDC involves identifying and capturing changes made to the data source and then applying these changes to the target data repository. This method is efficient as it only transfers changed data.
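The "apply" half of CDC can be sketched as replaying an ordered stream of change events against the target. The event shape used here (`op`, `key`, `data`) is a made-up format for illustration and does not match any particular CDC tool.

```python
def apply_changes(target: dict, events: list) -> dict:
    """Apply ordered change events to `target`, a dict keyed by primary key."""
    for event in events:
        key, op = event["key"], event["op"]
        if op in ("insert", "update"):
            # Merge only the changed columns into the existing row.
            target[key] = {**target.get(key, {}), **event["data"]}
        elif op == "delete":
            target.pop(key, None)
        else:
            raise ValueError(f"unknown operation: {op!r}")
    return target


replica = {1: {"name": "Ann", "city": "Leeds"}}
apply_changes(replica, [
    {"op": "update", "key": 1, "data": {"city": "York"}},
    {"op": "insert", "key": 2, "data": {"name": "Bob"}},
    {"op": "delete", "key": 2},
])
```

Only the three small events travel between systems here, not the full table, which is where the efficiency of CDC comes from.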

API-Based Synchronisation: Using APIs allows for the direct and seamless transfer of data between systems. This method is widely used for integrating web applications and services.

Database Replication: This involves creating copies of a database in different locations to ensure data availability and accessibility. This can be done in real-time or at scheduled intervals.

ETL Processes: This is a three-step process where data is extracted from a source, transformed into the required format, and then loaded into a target database or system.
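The three steps can be shown in a few lines using only the Python standard library. The CSV columns and the `orders` table are assumptions for this sketch, and an in-memory SQLite database stands in for the target system.

```python
import csv
import io
import sqlite3


def run_etl(csv_text: str, conn: sqlite3.Connection) -> int:
    """Extract rows from CSV text, tidy them, and load them into SQLite."""
    # Extract: read the source rows.
    rows = csv.DictReader(io.StringIO(csv_text))
    # Transform: trim and title-case names, convert amounts to numbers.
    cleaned = [
        (r["id"], r["name"].strip().title(), float(r["amount"])) for r in rows
    ]
    # Load: insert or replace by primary key, so re-running does not duplicate rows.
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(id TEXT PRIMARY KEY, name TEXT, amount REAL)"
    )
    conn.executemany("INSERT OR REPLACE INTO orders VALUES (?, ?, ?)", cleaned)
    conn.commit()
    return len(cleaned)


conn = sqlite3.connect(":memory:")
loaded = run_etl("id,name,amount\n1, ann smith ,10.50\n2,BOB,3\n", conn)
```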

Cloud-Based Synchronisation: Leveraging cloud services, data can be synced across multiple devices and platforms, providing accessibility and backup. Snowflake or MS Azure are often used here.

Middleware and Integration Platforms: These are software solutions that facilitate the connection and communication between different systems and databases, allowing for efficient data synchronisation.

The choice of synchronisation method depends on various factors like the nature of the data, frequency of updates, the need for real-time information, and the systems involved.

Challenges in Data Synchronisation

Organising business data involves navigating various systems, e.g. CRM, employee portals, customer support and HRM. The synchronisation process must address several challenges:

Security: Ensuring data moves through systems in compliance with industry-specific regulatory standards and privacy laws.

Data Quality: Regular updates and validation are required to maintain information integrity within a secure environment.

Data Management: Real-time management and integration are crucial for accuracy and to prevent errors.

Performance: The synchronisation process involves multiple phases, and any lapses can impact the end result.

Data Complexity: As data formats evolve with new vendors, customers, and technology, synchronisation must ensure consistency across systems.

Applying data syncing effectively remains a big challenge for many organisations, and the choice of technology is not the only limiting factor.

IOblend’s Approach to Data Syncing

IOblend is a very versatile tool, enabling a variety of approaches to data syncing. In our use case above, we helped the client sync their data across all five systems, using Salesforce as the “golden” record. An update in any of the systems triggers a chain of logical events (CDC, SCD, data quality checks, real-time validations, scheduled updates, bidirectional data mirroring, cloud and on-prem syncing, etc) that reconcile and update the customer information automatically.
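The "golden record" idea itself can be illustrated independently of any tool. The sketch below is not how IOblend implements it; it is a simplified, hypothetical example that compares each system's copy of a customer against the golden record and returns the fields that need updating.

```python
def reconcile(golden: dict, systems: dict) -> dict:
    """Return, per system, the fields that must change to match the golden record.

    Only fields a system already holds are compared, because not every
    system stores every attribute.
    """
    patches = {}
    for name, record in systems.items():
        diff = {
            field: value
            for field, value in golden.items()
            if field in record and record[field] != value
        }
        if diff:
            patches[name] = diff
    return patches


golden = {"name": "Ann Lee", "address": "2 Low Rd"}
systems = {
    "billing": {"name": "Ann Smith", "address": "2 Low Rd"},
    "crm": {"name": "Ann Lee", "address": "2 Low Rd"},
    "discounts": {"name": "Ann Lee"},
}
patches = reconcile(golden, systems)  # only billing needs a name update
```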

Data syncing is a vital process in today’s analytics, where accurate data is more critical than ever for dynamic decision-making and operational efficiency. Proper data synchronisation demands careful planning, understanding what data there is, how the changes to it affect downstream analytics and services and what records take precedence. Tech is only a part of the overall puzzle and should not be the driving force behind the implementations.

Understand Your Data Well

Tools like IOblend are pushing the boundaries of what’s possible in data syncing, offering solutions that are fast, reliable, and suitable for a wide range of applications. However, if your business does not have a solid grasp on how the data is being used and managed, no tool will be of much help to you.

If you found this blog informative, we have lots more data topics here.

IOblend presents a ground-breaking approach to IoT and data integration, revolutionizing the way businesses handle their data. It’s an all-in-one data integration accelerator, boasting real-time, production-grade, managed Apache Spark™ data pipelines that can be set up in mere minutes. This facilitates a massive acceleration in data migration projects, whether from on-prem to cloud or between clouds, thanks to its low code/no code development and automated data management and governance.

IOblend also simplifies the integration of streaming and batch data through Kappa architecture, significantly boosting the efficiency of operational analytics and MLOps. Its system enables the robust and cost-effective delivery of both centralized and federated data architectures, with low latency and massively parallelized data processing, capable of handling over 10 million transactions per second. Additionally, IOblend integrates seamlessly with leading cloud services like Snowflake and Microsoft Azure, underscoring its versatility and broad applicability in various data environments.

At its core, IOblend is an end-to-end enterprise data integration solution built with DataOps capability. It stands out as a versatile ETL product for building and managing data estates with high-grade data flows. The platform powers operational analytics and AI initiatives, drastically reducing the costs and development efforts associated with data projects and data science ventures. It’s engineered to connect to any source, perform in-memory transformations of streaming and batch data, and direct the results to any destination with minimal effort.

IOblend’s use cases are diverse and impactful. It streams live data from factories to automated forecasting models and channels data from IoT sensors to real-time monitoring applications, enabling automated decision-making based on live inputs and historical statistics. Additionally, it handles the movement of production-grade streaming and batch data to and from cloud data warehouses and lakes, powers data exchanges, and feeds applications with data that adheres to complex business rules and governance policies.

The platform comprises two core components: the IOblend Designer and the IOblend Engine. The IOblend Designer is a desktop GUI used for designing, building, and testing data pipeline DAGs, producing metadata that describes the data pipelines. The IOblend Engine, the heart of the system, converts this metadata into Spark streaming jobs executed on any Spark cluster. Available in Developer and Enterprise suites, IOblend supports both local and remote engine operations, catering to a wide range of development and operational needs. It also facilitates collaborative development and pipeline versioning, making it a robust tool for modern data management and analytics.

Continuous Data Replication for DR and Continuity

Continuous Data Replication: for Business Continuity and DR 📝 Did you know? According to industry studies, the average cost of IT downtime is approximately £4,500 per minute. For a large enterprise, a single hour of data loss or system unavailability can translate into millions in lost revenue, legal penalties, and irreparable brand damage. The Pulse of Availability Continuous

Smart Meter Data: Billing to Forecasting

Utilities: Smart Meter Data to Billing and Demand Forecasting 📋 Did You Know? The global roll-out of smart meters generates more data in a single day than most utility companies used to collect in an entire decade. While traditional meters were read once a month, or even once a quarter, smart meters transmit data at intervals

SCADA Streams to Reliability Analytics

Energy: SCADA Streams to Reliability Analytics 🔌 Did you know? The average modern wind turbine or smart substation generates roughly 1 to 2 terabytes of data every month. However, historically, less than 5% of that sensor data was actually used for decision-making. Most of it was simply discarded or “siloed” in SCADA systems, serving as a

Building Live ETA Pipelines for Fleet Operations

Logistics: Live ETA Prediction Pipelines from Fleet + Orders 🚚 Did you know? The “Last Mile” is famously the most expensive and inefficient part of the supply chain, often accounting for up to 53% of total shipping costs. The Evolution of Real-Time Logistics Live ETA (Estimated Time of Arrival) prediction pipelines represent the shift from reactive

DB2 CDC to Lakehouse Without Re-Platforming

From DB2 to Lakehouse: Real-Time CDC Without Re-Platforming 💻 Did you know? Mainframe systems like DB2 still process approximately 30 billion business transactions every single day. Despite the rush toward modern cloud architectures, the world’s most critical financial and logistical data often resides in these “legacy” environments, making them the silent engines of the global economy.

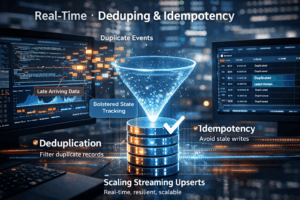

Real-Time Upserts: Deduping and Idempotency

Streaming Upserts Done Right: Deduping and Idempotency at Scale 💻 Did you know? In many high-velocity streaming environments, the “same” event can be sent or processed multiple times due to network retries or distributed system failures. The Art of the Upsert At its core, a streaming upsert (a portmanteau of “update” and “insert”) is the process of synchronising incoming data with an existing