Rethinking the Feature Store concept for MLOps

Today we talk about feature stores. Databricks’ recent acquisition of Tecton raised an interesting question for us: using IOblend, can we make a feature store work on any infrastructure just as easily as a dedicated system? Let’s have a look.

How a Feature Store Works Today

Machine learning teams agree on one thing: features are the most important input to a model. They need to be fresh, consistent, based on quality data and governed — across both training and inference.

This is the problem “feature stores” were invented to solve. By standardising feature definitions, providing a registry, and separating offline training data from online serving, they’ve become a common pattern in ML infrastructure.

But there’s a catch. Most feature stores introduce a new layer of infrastructure:

- A dedicated online store (often Redis, DynamoDB, or Cassandra, sometimes managed by a platform such as Tecton).

- An API service for serving features in real-time.

- A registry and metadata service.

- Synchronisation pipelines to keep online and offline stores consistent.

This works, but it comes at a cost. Teams end up paying for additional cloud services (platform fees plus compute), debugging consistency issues between “the store” and “the warehouse”, and managing ingestion tools to bring data into those platforms. In some cases, the effort to run the feature store rivals the effort to run the models themselves.

So the question is worth asking: do we really need a separate store?

Rethinking the Pattern

If we step back, the essentials of a feature store boil down to five capabilities:

- Definitions — how features are built.

- A registry — metadata, lineage, and versioning.

- Offline storage — historical features for training.

- Online access — low-latency serving for inference.

- Freshness and correctness — keeping features aligned across training and serving.
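To make the first two capabilities concrete, here is a minimal sketch of a feature definition and a registry in plain Python. The names `FeatureDefinition`, `FeatureRegistry`, and `avg_order_value` are hypothetical and chosen only for illustration; this is not IOblend's API or any particular feature store's schema.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class FeatureDefinition:
    """Hypothetical record describing how one feature is built."""
    name: str
    version: int
    entity_key: str                             # column identifying the entity
    sources: Tuple[str, ...]                    # upstream tables or streams (lineage)
    transform: Callable[[List[float]], float]   # raw values -> feature value


class FeatureRegistry:
    """In-memory registry keyed by (name, version)."""

    def __init__(self) -> None:
        self._defs: Dict[Tuple[str, int], FeatureDefinition] = {}

    def register(self, definition: FeatureDefinition) -> None:
        key = (definition.name, definition.version)
        if key in self._defs:
            raise ValueError(f"{definition.name} v{definition.version} already registered")
        self._defs[key] = definition

    def latest(self, name: str) -> FeatureDefinition:
        versions = [v for (n, v) in self._defs if n == name]
        if not versions:
            raise KeyError(name)
        return self._defs[(name, max(versions))]


registry = FeatureRegistry()
registry.register(FeatureDefinition(
    name="avg_order_value", version=1, entity_key="customer_id",
    sources=("orders",), transform=lambda xs: sum(xs) / len(xs),
))
registry.register(FeatureDefinition(
    name="avg_order_value", version=2, entity_key="customer_id",
    sources=("orders", "refunds"), transform=lambda xs: sum(xs) / max(len(xs), 1),
))

# Training and serving code both resolve the same definition by name.
current = registry.latest("avg_order_value")
```

Because the definition carries its sources and version, lineage and versioning come from the same record that describes how the feature is computed. Nothing in it depends on where the feature values are stored.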

Nothing on that list says you must use a secondary storage system. What you really need is a way to:

- Continuously process raw data into features.

- Materialise those features into your existing lake or warehouse.

- Guarantee governance, lineage, and point-in-time correctness.

- Serve them with the right latency for your application.

That raises an interesting alternative: what if the warehouse itself could be the feature store?
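Point-in-time correctness, one of the requirements listed above, is a property of how feature history is queried rather than of where it lives. A minimal sketch, assuming each feature value is stored with the timestamp from which it became valid (the `history` layout, `feature_as_of`, and the sample data are hypothetical):

```python
from bisect import bisect_right
from typing import Dict, List, Optional, Tuple

# Feature history per entity: (valid_from_timestamp, value), sorted by timestamp.
FeatureHistory = Dict[str, List[Tuple[int, float]]]


def feature_as_of(history: FeatureHistory, entity: str, ts: int) -> Optional[float]:
    """Return the latest feature value with valid_from <= ts, or None."""
    rows = history.get(entity, [])
    timestamps = [t for t, _ in rows]
    idx = bisect_right(timestamps, ts)   # number of rows valid at or before ts
    return rows[idx - 1][1] if idx > 0 else None


history: FeatureHistory = {
    "cust_1": [(100, 1.0), (200, 2.5), (300, 4.0)],
}

# Label events (entity, event_time) that a training set would be built from.
labels = [("cust_1", 50), ("cust_1", 200), ("cust_1", 250), ("cust_1", 999)]
training_rows = [(e, ts, feature_as_of(history, e, ts)) for e, ts in labels]
```

Each training row only sees the value that was valid at its own event time, so no future information leaks into training. In a warehouse the same lookup would typically be expressed as an as-of join over a versioned feature table.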

Continuous Features Without Extra Infrastructure

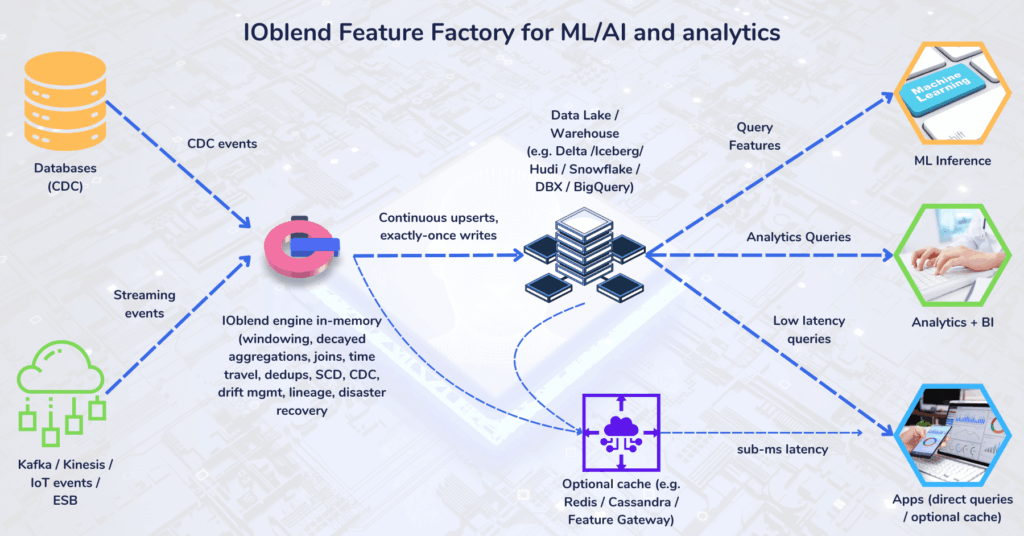

This is where IOblend’s design comes in. Built as a streaming-first DataOps engine (rather than a batch system adapted for streaming), it already provides the primitives that feature stores aim to deliver — but without standing up a new stack.

- Sub-second freshness — pipelines run continuously, not in micro-batches.

- Network-bound P99 latency — feature updates propagate almost instantly.

- >1 million TPS sustained — proven in production deployments.

- Governance by default — lineage, CDC handling, schema evolution, SCD, and drift monitoring are built into every pipeline.

- Runs in your environment — on-prem, VPC, or edge. No SaaS dependencies in the inference path.

In this architecture, the warehouse becomes the store. IOblend continuously maintains features inside Delta, Iceberg, Hudi, Snowflake, BigQuery, and others — the systems you already run.

The ROI of “No Store”

By eliminating the need for a separate feature store tier, you see benefits across several dimensions:

- Infrastructure cost savings: no Redis or DynamoDB clusters, and no duplication of data between “online” and “offline” stores.

- Performance at scale: network-bound P99 latency and million-TPS throughput without extra serving infrastructure.

- Operational simplicity: ingestion, transformation, governance, and materialisation all in one engine.

- Data security and compliance: features stay inside your environment; no regulated data leaves your boundary.

A Dataflow in Practice

- Ingest — CDC from operational databases and event streams (Kafka, Kinesis, IoT).

- Process — streaming pipelines apply joins, aggregations, deduplication, slowly changing dimensions, and CDC merges with exactly-once semantics.

- Materialise — features continuously upserted into warehouse/lake tables (Delta, Iceberg, Hudi, Snowflake, BigQuery, etc.).

- Consume — applications query those tables directly. For ultra-low latency (<5ms), a lightweight cache or gateway can be layered on top.
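The four steps above can be sketched end to end in a few lines. This is an illustration only, not how IOblend is implemented: an in-memory SQLite table stands in for the warehouse table, a Python list stands in for the event stream, and the event shape, `run_pipeline`, and the `customer_features` table are hypothetical. Deduplicating on an event id makes processing idempotent under redelivery, which is a simpler property than full exactly-once semantics. The upsert syntax assumes SQLite 3.24 or later.

```python
import sqlite3
from typing import Dict, Iterable, Set, Tuple

# Hypothetical change events: (event_id, customer_id, order_amount).
Event = Tuple[str, str, float]


def run_pipeline(conn: sqlite3.Connection, events: Iterable[Event]) -> None:
    """Deduplicate events, update running aggregates, and upsert features."""
    seen: Set[str] = set()                      # event ids already processed
    totals: Dict[str, Tuple[int, float]] = {}   # customer -> (count, sum)
    for event_id, customer_id, amount in events:
        if event_id in seen:                    # drop redelivered events
            continue
        seen.add(event_id)
        count, total = totals.get(customer_id, (0, 0.0))
        count, total = count + 1, total + amount
        totals[customer_id] = (count, total)
        # Upsert: one row per customer, overwritten as new events arrive.
        conn.execute(
            """
            INSERT INTO customer_features (customer_id, order_count, avg_order_value)
            VALUES (?, ?, ?)
            ON CONFLICT(customer_id) DO UPDATE SET
                order_count = excluded.order_count,
                avg_order_value = excluded.avg_order_value
            """,
            (customer_id, count, total / count),
        )
    conn.commit()


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE customer_features ("
    "customer_id TEXT PRIMARY KEY, order_count INTEGER, avg_order_value REAL)"
)

events = [
    ("e1", "cust_1", 10.0),
    ("e2", "cust_1", 30.0),
    ("e2", "cust_1", 30.0),   # duplicate delivery, ignored
    ("e3", "cust_2", 5.0),
]
run_pipeline(conn, events)

# Consume: the application reads the feature table directly.
row = conn.execute(
    "SELECT order_count, avg_order_value FROM customer_features WHERE customer_id = ?",
    ("cust_1",),
).fetchone()
```

The point of the sketch is that training and inference read the same table, so there is no second copy to keep in sync. A cache in front of that table would only be needed when the latency budget is tighter than a direct query allows.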

Something to Think About

The idea behind feature stores is sound: models need features that are fresh, correct, and consistent. But the conventional implementation — running a parallel online store — is not the only way to achieve this.

A streaming-first engine that maintains features directly in your warehouse can deliver the same guarantees — with fewer systems, lower costs, and proven production performance at scale.

That’s the approach we’ve taken with IOblend: a feature store without the store.

IOblend presents a ground-breaking approach to IoT and data integration, revolutionizing the way businesses handle their data. It’s an all-in-one data integration accelerator, boasting real-time, production-grade, managed Apache Spark™ data pipelines that can be set up in mere minutes. This facilitates a massive acceleration in data migration projects, whether from on-prem to cloud or between clouds, thanks to its low code/no code development and automated data management and governance.

IOblend also simplifies the integration of streaming and batch data through Kappa architecture, significantly boosting the efficiency of operational analytics and MLOps. Its system enables the robust and cost-effective delivery of both centralized and federated data architectures, with low latency and massively parallelized data processing, capable of handling over 10 million transactions per second. Additionally, IOblend integrates seamlessly with leading cloud services like Snowflake and Microsoft Azure, underscoring its versatility and broad applicability in various data environments.

At its core, IOblend is an end-to-end enterprise data integration solution built with DataOps capability. It stands out as a versatile ETL product for building and managing data estates with high-grade data flows. The platform powers operational analytics and AI initiatives, drastically reducing the costs and development efforts associated with data projects and data science ventures. It’s engineered to connect to any source, perform in-memory transformations of streaming and batch data, and direct the results to any destination with minimal effort.

IOblend’s use cases are diverse and impactful. It streams live data from factories to automated forecasting models and channels data from IoT sensors to real-time monitoring applications, enabling automated decision-making based on live inputs and historical statistics. Additionally, it handles the movement of production-grade streaming and batch data to and from cloud data warehouses and lakes, powers data exchanges, and feeds applications with data that adheres to complex business rules and governance policies.

The platform comprises two core components: the IOblend Designer and the IOblend Engine. The IOblend Designer is a desktop GUI used for designing, building, and testing data pipeline DAGs, producing metadata that describes the data pipelines. The IOblend Engine, the heart of the system, converts this metadata into Spark streaming jobs executed on any Spark cluster. Available in Developer and Enterprise suites, IOblend supports both local and remote engine operations, catering to a wide range of development and operational needs. It also facilitates collaborative development and pipeline versioning, making it a robust tool for modern data management and analytics.
