Less cost, more value

Businesses today operate with an extensive array of apps, databases, and cloud services. Data is scattered across various locations, formats, and timelines. This data needs to be integrated and analysed before you can make any use of it. But it’s not straightforward, unfortunately. And it gets expensive in various ways.

Some of the data is decades old, perhaps even stored on paper, making the task of processing it daunting. Some data resides in data silos, only used by a single team. Other data flows from, say, PoS systems in real time and needs processing on the fly. Then you have data scientists building ML models that require quality data from multiple systems.

You have all sorts of operational systems scattered about the company, such as ERP, CRM, revenue management, safety systems, finance, CMS, etc. The data needs to be managed within each system. You then have data warehouses where you store the data from those systems and the rest of the business. And, of course, there is a proliferation of Excel models. If you want to make use of it, you need to bring that data together for valuable insights, right? That’s when it stings you.

No company is immune from the pains of data integration. It is one of the top IT cost items. So, to become (or stay) efficient and reduce data spend, companies must get on top of their integration effort. Otherwise, your data costs will only ever go up and your data projects will lag behind.

Evaluate and prioritise projects

We tend to measure benefits in the data world with ROI. It makes it easier to determine the value of a data initiative and compare different ones side by side. By evaluating the potential ROI of data integration projects, businesses can prioritise those that offer the greatest value. This approach ensures that resources are allocated efficiently, avoiding overinvestment in low-impact projects.

I have seen annual project pipelines of a hundred and fifty plus. Constantly refreshed. Supervisory committees meeting monthly to shuffle the deck. Only the top ten to fifteen at best get serious attention. Others are either parked or handled on an “as and when” basis. Everyone wants something built for their department or team. But your business has finite resources. So, prioritisation becomes key.
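To make the side-by-side comparison concrete, here is a minimal sketch of ranking a project pipeline by ROI and filling a fixed budget from the top. The `Project` class, the `prioritise` helper, the project names and all the figures are made up for illustration, and the ROI formula is the simple net-benefit-over-cost version. A real assessment would also weigh risk, dependencies and timing.

```python
from dataclasses import dataclass


@dataclass
class Project:
    name: str
    expected_benefit: float  # estimated annual benefit in GBP (illustrative)
    cost: float              # estimated delivery and running cost in GBP (illustrative)

    @property
    def roi(self) -> float:
        # Simple ROI: net benefit divided by cost
        return (self.expected_benefit - self.cost) / self.cost


def prioritise(projects, budget):
    """Pick projects in descending ROI order while they still fit the budget."""
    selected = []
    remaining = budget
    for project in sorted(projects, key=lambda p: p.roi, reverse=True):
        if project.cost <= remaining:
            selected.append(project)
            remaining -= project.cost
    return selected


pipeline = [
    Project("CRM to warehouse sync", expected_benefit=300_000, cost=100_000),
    Project("Departmental dashboard", expected_benefit=60_000, cost=50_000),
    Project("Real-time PoS feed", expected_benefit=500_000, cost=200_000),
]

shortlist = prioritise(pipeline, budget=300_000)
for project in shortlist:
    print(f"{project.name}: ROI {project.roi:.1f}")
```

With these numbers, the two high-ROI projects use up the budget and the low-impact dashboard is parked.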

Simplify your data architecture

Data architecture is a blueprint for managing data assets. It includes data models, policies, rules, and standards that govern which data is collected, how it is stored, how it is accessed, and how it is used. It covers databases, data warehouses, data lakes, and the integration and interaction between these components.

Always opt for a simplified and standardised data architecture to reduce complexity and cost. Make it easier for the data team to manage data flows and integration processes. Reduce the labour required for data management and troubleshooting. The fewer components in your data architecture you have to monitor and maintain, the lower your expenses will be.

Standardise and simplify data formats

In most companies, data formats are all over the place. Standardising data formats across your organisation will drastically reduce the complexity and time required for data integration projects. It can also help minimise errors and improve data quality, reducing the need for manual interventions.

Many companies use a wide range of data formats spread across all kinds of systems. They then build catalogues, mapping layers and validation processes in an attempt to bring it into a coherent state. What complicates matters even more is that the data can vary (sometimes considerably) even within a predetermined format.

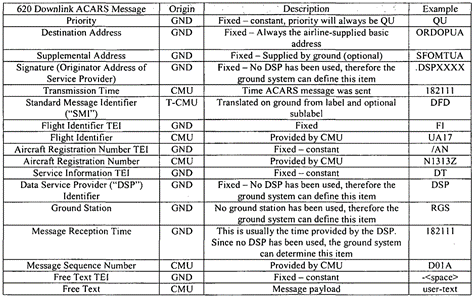

For example, in aviation, simple, automated message exchanges between the aircraft and ground use a common standard (ACARS). But these messages are normally customised by the operators. When you ingest and process these messages for analysis, expecting them to be uniform, you’ve got another thing coming. The cost impact manifests in delayed processing, data quality issues and extra analyst hours.
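As a rough illustration of what a mapping layer does, the sketch below renames fields from two source systems into one common schema and converts their dates to ISO 8601. The source names (`crm`, `erp`), the field names and the date formats are all assumptions invented for this example.

```python
from datetime import datetime

# Hypothetical field-name mappings from two source systems to one common schema
FIELD_MAPS = {
    "crm": {"CustomerID": "customer_id", "SignupDate": "signup_date"},
    "erp": {"cust_no": "customer_id", "created": "signup_date"},
}

# Date format each source is assumed to use
DATE_FORMATS = {"crm": "%d/%m/%Y", "erp": "%Y%m%d"}


def standardise(record, source):
    """Rename fields to the common schema and emit ISO 8601 dates."""
    mapping = FIELD_MAPS[source]
    out = {mapping[key]: value for key, value in record.items() if key in mapping}
    parsed = datetime.strptime(out["signup_date"], DATE_FORMATS[source])
    out["signup_date"] = parsed.date().isoformat()
    # Treat identifiers as trimmed strings regardless of the source type
    out["customer_id"] = str(out["customer_id"]).strip()
    return out


crm_row = standardise({"CustomerID": " 1042 ", "SignupDate": "03/02/2024"}, "crm")
erp_row = standardise({"cust_no": 1042, "created": "20240203"}, "erp")
print(crm_row == erp_row)
```

Every extra source format means another entry in these tables to build and maintain, which is why agreeing on one format up front is cheaper.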

Focus on data quality at the source

This leads us to the next aspect of reducing data integration costs. Implementing strong data governance and quality controls at the point of data entry minimises the need for extensive data cleaning and preparation downstream. Addressing data quality upstream in an automated way reduces the manual labour hours needed for data integration tasks.

Ensuring high data quality at the point of entry reduces the need for costly data cleaning and transformation later. This can be achieved through data contracts, validation rules, quality audits, and training on data entry standards (when manual). The less effort you spend to clean your data manually downstream, the more ££ you save on your data integration.
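A data contract with validation rules can be as simple as the sketch below: a declared set of fields and types, checked before a record is accepted. The `CONTRACT` layout, the field names and the positive-quantity rule are hypothetical. In practice you would more likely use a schema or validation library than hand-rolled checks.

```python
from datetime import date

# A hypothetical data contract: field name -> (expected type, required?)
CONTRACT = {
    "order_id": (str, True),
    "quantity": (int, True),
    "order_date": (date, True),
    "notes": (str, False),
}


def validate(record):
    """Return a list of contract violations; an empty list means the record passes."""
    errors = []
    for field, (expected_type, required) in CONTRACT.items():
        if field not in record or record[field] is None:
            if required:
                errors.append(f"missing required field: {field}")
            continue
        if not isinstance(record[field], expected_type):
            errors.append(f"{field}: expected {expected_type.__name__}")
    # An example business rule on top of the type checks
    if isinstance(record.get("quantity"), int) and record["quantity"] <= 0:
        errors.append("quantity must be positive")
    return errors


good = {"order_id": "A-1", "quantity": 3, "order_date": date(2024, 2, 3)}
bad = {"order_id": "A-2", "quantity": 0}

print(validate(good))
print(validate(bad))
```

Rejecting the bad record at entry, with a clear reason, is far cheaper than discovering it in a report weeks later.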

Automate data integration processes

75% of data analytics effort is data integration. That’s your big cost item (engineering time is not cheap). It tends to be manual and clunky. You want to minimise the laborious effort as much as possible. And you achieve that through automation, naturally. Data pipelines should not be fragile like glass that requires careful handling with kid gloves. They should be robust, resilient, and standardised. They must require as little human supervision as possible.

Automation will reduce the time and labour costs associated with manual data integration. Instead of constantly faffing about with data issues, they get sorted in the background, automatically. As a result, you will get the data faster, more reliably, with better quality and reduced integration OpEx.
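One small piece of that resilience is automatic retrying, so a transient source failure does not need a human to restart the job. The sketch below is a generic illustration and not how IOblend implements it. The `run_with_retries` helper and the `flaky_extract` stand-in are made-up names.

```python
import time


def run_with_retries(step, max_attempts=3, base_delay=1.0):
    """Run a pipeline step, retrying with exponential backoff on failure."""
    for attempt in range(1, max_attempts + 1):
        try:
            return step()
        except Exception:
            if attempt == max_attempts:
                raise  # give up and surface the error for a human to look at
            time.sleep(base_delay * 2 ** (attempt - 1))


# A stand-in for a flaky source: fails twice, then succeeds
calls = {"count": 0}


def flaky_extract():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("source temporarily unavailable")
    return ["row-1", "row-2"]


# A short delay keeps the example quick to run
rows = run_with_retries(flaky_extract, base_delay=0.01)
print(rows, "after", calls["count"], "attempts")
```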

At IOblend, we turn data integration into an automation art form. Just saying.

Consolidate data integration tools

There is no shortage of data integration tools out there (IOblend is one). Old and new, they address all sorts of use cases. Companies will often have multiple technologies (off-the-shelf and custom-built using OSS). Someone must learn and manage them all, which is an extra cost burden.

Consolidate your data integration tools and platforms. If one tool does the job of three, drop the others. No matter how personally you may be attached to them. You will reduce licensing fees, training costs, and the overhead associated with managing multiple systems. This approach also simplifies data governance and security management.

Use Low-Code/No-Code tools and platforms

These tools can enable non-technical users to participate in the data integration process, reducing the burden on IT staff. Using low-code/no-code tools really opens up the data integration playground.

Folks who don’t speak fluent tech can get stuck into the data mix. You can get your business experts to use data in their jobs more easily. They can extract, process and analyse their data faster and more efficiently. They will no longer need to raise request tickets or bribe engineers with coffee to get the data they need.

By empowering the business to build and manage data integrations themselves, you’re reducing the burden on data engineers. You will be freeing them up to do more value-add projects.

What is also great is that you will be making the entire organisation better engaged with the data. As the SMEs engage with data directly, they will be more open to experimenting and quicker to adopt new insights. The trust in the potential of the data will be much higher. The ROI of your data initiatives will also increase.

Collaboration and sharing across departments

Promoting collaboration and sharing across departments fosters a culture of innovation and efficiency. Simple-to-use and robust data integration tools make it easier to achieve better collaboration. Say your sales team need to know they have the new product in stock (inventory and logistics status) before they go all out with it. They can get the data quickly either themselves or from the relevant teams (easy integration pipelines). No delays and no complex dev processes to follow. They can see what the stock levels are and what the delivery profile looks like. This information allows the sales team to better tailor their campaign and ensure higher levels of customer satisfaction.

The adoption of shared data platforms and tools is crucial in achieving this level of collaboration. Once the sales team have done the data integration for their campaign, they can easily reuse the integration pipeline. Or quickly customise it for another use case, without needing to start from scratch. You save time and money on development and management. Shared libraries and reusable components simplify the process of data sharing and utilisation across different departments.

The premise here is simple yet powerful: the data necessary for a new project or analysis has likely been used before within the organisation. Leveraging this pre-existing knowledge and data easily can dramatically accelerate project timelines and enhance overall decision-making processes.
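The reuse idea can be sketched in a few lines: small shared steps composed into a pipeline, then recombined for the next use case. The `make_pipeline` helper, the step names and the inventory data are all hypothetical.

```python
def make_pipeline(*steps):
    """Compose reusable steps into a single pipeline function."""
    def pipeline(rows):
        for step in steps:
            rows = step(rows)
        return rows
    return pipeline


# Shared, reusable components (illustrative)
def in_stock_only(rows):
    return [r for r in rows if r["stock"] > 0]


def for_region(region):
    def step(rows):
        return [r for r in rows if r["region"] == region]
    return step


inventory = [
    {"sku": "A", "stock": 12, "region": "UK"},
    {"sku": "B", "stock": 0, "region": "UK"},
    {"sku": "C", "stock": 5, "region": "FR"},
]

# The first campaign builds the pipeline; the second reuses the same steps
uk_campaign = make_pipeline(in_stock_only, for_region("UK"))
fr_campaign = make_pipeline(in_stock_only, for_region("FR"))

print([r["sku"] for r in uk_campaign(inventory)])
print([r["sku"] for r in fr_campaign(inventory)])
```

The second campaign costs one line, because the stock filter and the region filter already exist.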

Skill development and training

No, obviously, you cannot just make SMEs proficient with data overnight. But you definitely should invest in making them so. Training existing staff on data integration techniques and tools can be more cost-effective than hiring new data specialists. A skilled workforce can leverage existing resources more efficiently. They are engaged, they are close to the “frontlines”. There isn’t anyone who understands the business better. All you need to do is empower them with the right tech to do what they do best, better.

Just don’t make the mistake of going down the path of full technical training. That is a waste of everyone’s time and your money. Your business specialists have not gone into tech for a reason. They have a different passion. They are awesome at what they do. And they want to do it better. Everyone wants to do their job more efficiently, with less hassle and stress. They would welcome tools that make their lives easier. You just need to make it easy.

Find a tech stack balance for your particular organisation and tailor the upskilling to suit your staff’s capabilities. You will be amazed to see the engagement and productivity shoot through the roof.

Monitor and optimise performance

Data integration is not static. New requirements, innovative technologies and data integration approaches pop up all the time. Regularly monitoring existing data integration processes for performance bottlenecks and optimising them can reduce costs over time. This can involve tuning databases, optimising data models, and improving integration workflows. Or even changing the tech stack if the old one no longer makes financial sense.

Review and adjust your data integration processes and resource allocation based on current needs and priorities. Use agile methodologies to manage data integration projects, focusing on delivering value incrementally and adjusting quickly to changing requirements.
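You cannot optimise what you do not measure. As a minimal sketch, the decorator below records how long each pipeline step takes so the slowest ones can be reviewed first. The `timed` decorator and the `transform` and `load` steps are illustrative names. A production setup would normally send these figures to a proper monitoring tool.

```python
import functools
import time
from collections import defaultdict

# Collected run durations per step name, in seconds
timings = defaultdict(list)


def timed(step):
    """Record how long each call to a pipeline step takes."""
    @functools.wraps(step)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        try:
            return step(*args, **kwargs)
        finally:
            timings[step.__name__].append(time.perf_counter() - start)
    return wrapper


@timed
def transform(rows):
    return [r.upper() for r in rows]


@timed
def load(rows):
    return len(rows)


loaded = load(transform(["a", "b", "c"]))

# Rank steps by average duration to find bottleneck candidates
slowest_first = sorted(
    timings, key=lambda name: sum(timings[name]) / len(timings[name]), reverse=True
)
print(slowest_first)
```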

IOblend can help

As you can see, integration is a key driver of your IT cost. It’s not simple to reduce it and it takes a careful implementation to succeed. Yet, this area delivers the biggest bang for your data buck.

Every business has different requirements. Different sets of constraints. Complexities. We understand that. And we can help you navigate through those.

Get in touch to discuss your data integration challenges. We can save you a considerable amount of money and effort.

IOblend presents a ground-breaking approach to IoT and data integration, revolutionizing the way businesses handle their data. It’s an all-in-one data integration accelerator, boasting real-time, production-grade, managed Apache Spark™ data pipelines that can be set up in mere minutes. This facilitates a massive acceleration in data migration projects, whether from on-prem to cloud or between clouds, thanks to its low code/no code development and automated data management and governance.

IOblend also simplifies the integration of streaming and batch data through Kappa architecture, significantly boosting the efficiency of operational analytics and MLOps. Its system enables the robust and cost-effective delivery of both centralized and federated data architectures, with low latency and massively parallelized data processing, capable of handling over 10 million transactions per second. Additionally, IOblend integrates seamlessly with leading cloud services like Snowflake and Microsoft Azure, underscoring its versatility and broad applicability in various data environments.

At its core, IOblend is an end-to-end enterprise data integration solution built with DataOps capability. It stands out as a versatile ETL product for building and managing data estates with high-grade data flows. The platform powers operational analytics and AI initiatives, drastically reducing the costs and development efforts associated with data projects and data science ventures. It’s engineered to connect to any source, perform in-memory transformations of streaming and batch data, and direct the results to any destination with minimal effort.

IOblend’s use cases are diverse and impactful. It streams live data from factories to automated forecasting models and channels data from IoT sensors to real-time monitoring applications, enabling automated decision-making based on live inputs and historical statistics. Additionally, it handles the movement of production-grade streaming and batch data to and from cloud data warehouses and lakes, powers data exchanges, and feeds applications with data that adheres to complex business rules and governance policies.

The platform comprises two core components: the IOblend Designer and the IOblend Engine. The IOblend Designer is a desktop GUI used for designing, building, and testing data pipeline DAGs, producing metadata that describes the data pipelines. The IOblend Engine, the heart of the system, converts this metadata into Spark streaming jobs executed on any Spark cluster. Available in Developer and Enterprise suites, IOblend supports both local and remote engine operations, catering to a wide range of development and operational needs. It also facilitates collaborative development and pipeline versioning, making it a robust tool for modern data management and analytics.
