Agentic AI ETL: The Future of Data Integration

📓 Did you know? By 2025, the volume of data generated globally is projected to reach 175 zettabytes. At that scale, efficient data management becomes more important than ever.

What is Agentic AI ETL?

Agentic AI ETL represents a transformative evolution in data integration. Traditional ETL (Extract, Transform, Load) processes often involve manual configurations and rigid workflows. In contrast, Agentic AI ETL leverages autonomous AI agents capable of making decisions, adapting to new data structures, and orchestrating complex data workflows with minimal human intervention.

These intelligent agents can:

- Extract data from diverse sources, including unstructured formats like PDFs, emails, and text messages.

- Transform data by understanding context, applying business logic, and ensuring data quality.

- Load the refined data into target systems efficiently.

By embedding AI agents directly into the ETL pipeline, organisations can achieve real-time data processing, enhanced accuracy, and greater scalability.
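To make the extract, transform and load roles above more concrete, here is a minimal Python sketch of an agent-style step that turns free-text emails into table rows. It is not IOblend's API: `rule_based_extractor`, `transform` and `run_pipeline` are hypothetical names, the regex extractor is only a stand-in for the model call a real AI agent would make, and an in-memory SQLite table stands in for the target system.

```python
import re
import sqlite3
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Invoice:
    invoice_id: str
    amount: float
    currency: str


def rule_based_extractor(text: str) -> Optional[dict]:
    """Hypothetical stand-in for an AI agent's extraction call (e.g. an LLM prompt).

    Two regexes pull an invoice number and a currency amount out of free text;
    None means "nothing usable found".
    """
    inv = re.search(r"invoice\s+#?(\w+)", text, re.IGNORECASE)
    amt = re.search(r"\b(GBP|USD|EUR)\s*([\d,]+(?:\.\d+)?)", text)
    if not (inv and amt):
        return None
    return {"invoice_id": inv.group(1), "currency": amt.group(1), "amount": amt.group(2)}


def transform(raw: dict) -> Invoice:
    """Apply business logic: normalise types and enforce a simple quality rule."""
    amount = float(raw["amount"].replace(",", ""))
    if amount <= 0:
        raise ValueError(f"non-positive amount for invoice {raw['invoice_id']}")
    return Invoice(raw["invoice_id"].upper(), amount, raw["currency"])


def run_pipeline(messages: List[str],
                 extractor: Callable[[str], Optional[dict]],
                 conn: sqlite3.Connection) -> List[str]:
    """Extract, transform and load each message; return the messages that were rejected."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS invoices "
        "(invoice_id TEXT PRIMARY KEY, amount REAL, currency TEXT)"
    )
    rejected = []
    for msg in messages:
        raw = extractor(msg)
        if raw is None:
            rejected.append(msg)  # nothing extractable: route to human review
            continue
        try:
            inv = transform(raw)
        except ValueError:
            rejected.append(msg)  # failed a business rule
            continue
        # Keyed upsert, so re-running the same message does not create duplicates.
        conn.execute("INSERT OR REPLACE INTO invoices VALUES (?, ?, ?)",
                     (inv.invoice_id, inv.amount, inv.currency))
    conn.commit()
    return rejected
```

Because the extractor is passed in as a function, replacing the regex stand-in with a model-backed extractor would leave the validation and load logic unchanged; anything that cannot be parsed or fails a rule is returned for review rather than silently loaded.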

Why Embrace Agentic AI ETL?

Adopting Agentic AI ETL offers several compelling advantages:

- Automation: Reduces the need for manual coding and intervention, allowing teams to focus on strategic tasks.

- Speed: Accelerates data integration processes, enabling quicker access to actionable insights.

- Quality: Enhances data reliability through continuous validation and error correction mechanisms.

- Adaptability: AI agents can learn from new data patterns and adjust processes accordingly, ensuring resilience in dynamic environments.
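The quality and adaptability points can be illustrated with a small sketch, assuming records arrive as Python dicts and the required fields are known up front. The function names and the meter-reading fields in the usage are hypothetical. The code attempts a simple correction (coercing numeric strings to floats), quarantines records that still fail validation, and widens the known column set when a valid record carries a new field instead of rejecting it.

```python
from typing import Any, Dict, List, Set, Tuple

Record = Dict[str, Any]


def try_correct(record: Record, required: Dict[str, type]) -> Record:
    """Attempt simple repairs, e.g. a numeric string where a float is expected."""
    fixed = dict(record)
    for field, ftype in required.items():
        value = fixed.get(field)
        if ftype is float and isinstance(value, (str, int)) and not isinstance(value, bool):
            try:
                fixed[field] = float(value)
            except ValueError:
                pass  # leave unchanged; validation will flag it
    return fixed


def validate(record: Record, required: Dict[str, type]) -> List[str]:
    """Return human-readable problems; an empty list means the record is valid."""
    errors = []
    for field, ftype in required.items():
        if record.get(field) is None:
            errors.append(f"missing {field}")
        elif not isinstance(record[field], ftype):
            errors.append(f"{field} is not {ftype.__name__}")
    return errors


def process_batch(batch: List[Record], required: Dict[str, type], known_columns: Set[str]
                  ) -> Tuple[List[Record], List[Tuple[Record, List[str]]], Set[str]]:
    """Split a batch into clean and quarantined records, adapting to new columns."""
    clean: List[Record] = []
    quarantined: List[Tuple[Record, List[str]]] = []
    columns = set(known_columns)
    for raw in batch:
        rec = try_correct(raw, required)
        errors = validate(rec, required)
        if errors:
            quarantined.append((raw, errors))  # keep the original for inspection
            continue
        columns |= set(rec)  # adapt: accept new optional fields rather than failing
        clean.append(rec)
    return clean, quarantined, columns
```

Quarantining rather than dropping keeps bad records available for inspection, while widening the column set is one simple way to tolerate schema drift; a production system would typically also record which columns were added and when.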

Implementing Agentic AI ETL with IOblend

IOblend stands at the forefront of Agentic AI ETL solutions, offering a platform that seamlessly integrates AI agents into data pipelines. Here’s how IOblend facilitates this:

- Embedded AI Agents: IOblend allows AI agents to be incorporated within ETL workflows, enabling the processing of unstructured data sources. These agents can extract relevant information, validate it, and integrate it with structured data in real time.

- Real-Time Processing: Using Change Data Capture (CDC), IOblend keeps data pipelines responsive to changes at the source, maintaining up-to-date information across systems.

- Low-Code Development: With support for Python scripting and a user-friendly interface, IOblend reduces development time and complexity, making it accessible to teams with varying technical expertise.

- Scalability and Flexibility: IOblend's architecture supports deployment across various environments, including on-premises, cloud, and hybrid setups, catering to diverse organisational needs.
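As a rough illustration of what a CDC-driven pipeline does on the target side, the sketch below applies an ordered stream of change events to an in-memory replica. The `ChangeEvent` shape and its `seq` log position are assumptions made for illustration, not IOblend's or any particular CDC tool's format. Tracking the last applied position means that replaying an event after a retry is a harmless no-op.

```python
from dataclasses import dataclass
from typing import Any, Dict, Optional


@dataclass(frozen=True)
class ChangeEvent:
    seq: int                               # hypothetical monotonically increasing log position
    op: str                                # "insert", "update" or "delete"
    key: str                               # primary key of the affected row
    row: Optional[Dict[str, Any]] = None   # full row image; None for deletes


class ReplicaTable:
    """Keeps a keyed copy of a source table up to date from change events."""

    def __init__(self) -> None:
        self.rows: Dict[str, Dict[str, Any]] = {}
        self.last_seq = -1

    def apply(self, event: ChangeEvent) -> bool:
        """Apply one event; return False if it was already applied (idempotent replay)."""
        if event.seq <= self.last_seq:
            return False
        if event.op in ("insert", "update"):
            self.rows[event.key] = dict(event.row or {})  # upsert the full row image
        elif event.op == "delete":
            self.rows.pop(event.key, None)
        else:
            raise ValueError(f"unknown op {event.op!r}")
        self.last_seq = event.seq  # only advance after a successful apply
        return True
```

This assumes events for a table arrive in log order; sources that can deliver out of order would need per-key sequence tracking or buffering instead of a single `last_seq`.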

Fast Forward to the Future with IOblend

Embracing IOblend’s Agentic AI ETL capabilities positions organisations to harness the full potential of their data assets. By automating complex data processes and enabling intelligent decision-making within data pipelines, businesses can achieve enhanced efficiency, accuracy, and agility.

IOblend: See more. Do more. Deliver better.

If you have specific use cases or data integration challenges in mind, feel free to share them, and we can provide more tailored insights on how Agentic AI ETL and IOblend can address your needs.

IOblend presents a ground-breaking approach to IoT and data integration, revolutionizing the way businesses handle their data. It’s an all-in-one data integration accelerator, boasting real-time, production-grade, managed Apache Spark™ data pipelines that can be set up in mere minutes. This facilitates a massive acceleration in data migration projects, whether from on-prem to cloud or between clouds, thanks to its low code/no code development and automated data management and governance.

IOblend also simplifies the integration of streaming and batch data through Kappa architecture, significantly boosting the efficiency of operational analytics and MLOps. Its system enables the robust and cost-effective delivery of both centralized and federated data architectures, with low latency and massively parallelized data processing, capable of handling over 10 million transactions per second. Additionally, IOblend integrates seamlessly with leading cloud services like Snowflake and Microsoft Azure, underscoring its versatility and broad applicability in various data environments.
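A defining idea of the Kappa architecture is that batch processing is simply a replay of a bounded stream through the same code as live data. The Python sketch below illustrates that idea under stated assumptions: the function names are hypothetical, readings are assumed to be in watts, and a `queue.Queue` with a `None` sentinel stands in for a live feed. One `enrich` function serves both the historical list and the live source.

```python
import queue
from typing import Callable, Dict, Iterable, Iterator, Optional

Event = Dict[str, float]


def enrich(events: Iterable[Event]) -> Iterator[Event]:
    """The single transformation shared by replayed history and live data."""
    for e in events:
        if e["value"] < 0:
            continue  # drop physically impossible readings
        yield {**e, "value_kw": e["value"] / 1000.0}  # assumes "value" is in watts


def run(source: Iterable[Event], sink: Callable[[Event], None]) -> None:
    """Push every transformed event to the sink, whatever kind of source it came from."""
    for out in enrich(source):
        sink(out)


def live_source(q: "queue.Queue[Optional[Event]]") -> Iterator[Event]:
    """Simulated live feed: blocks on the queue until a None sentinel arrives."""
    while True:
        item = q.get()
        if item is None:
            return
        yield item
```

Keeping one transformation avoids maintaining separate batch and streaming code paths; in a Spark deployment the same principle is usually expressed through a DataFrame transformation that is applied to both batch and streaming DataFrames.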

At its core, IOblend is an end-to-end enterprise data integration solution built with DataOps capability. It stands out as a versatile ETL product for building and managing data estates with high-grade data flows. The platform powers operational analytics and AI initiatives, drastically reducing the costs and development efforts associated with data projects and data science ventures. It’s engineered to connect to any source, perform in-memory transformations of streaming and batch data, and direct the results to any destination with minimal effort.

IOblend’s use cases are diverse and impactful. It streams live data from factories to automated forecasting models and channels data from IoT sensors to real-time monitoring applications, enabling automated decision-making based on live inputs and historical statistics. Additionally, it handles the movement of production-grade streaming and batch data to and from cloud data warehouses and lakes, powers data exchanges, and feeds applications with data that adheres to complex business rules and governance policies.
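One simple way to combine live inputs with historical statistics for automated decisions is a rolling z-score check. The sketch below is illustrative only: `RollingMonitor`, its window size and its 3-sigma threshold are hypothetical choices, not IOblend features. Each new sensor reading is compared with the mean and standard deviation of the recent window before being added to it.

```python
from collections import deque
from statistics import mean, pstdev


class RollingMonitor:
    """Flags readings that deviate strongly from a rolling window of recent history."""

    def __init__(self, window: int = 20, threshold: float = 3.0, min_history: int = 5):
        self.history: deque = deque(maxlen=window)  # oldest readings fall off automatically
        self.threshold = threshold                  # alert beyond this many standard deviations
        self.min_history = min_history              # no decisions until enough history exists

    def observe(self, value: float) -> str:
        """Return "alert" or "ok" for a live reading, then add it to the history."""
        decision = "ok"
        if len(self.history) >= self.min_history:
            hist = list(self.history)
            mu = mean(hist)
            sigma = max(pstdev(hist), 1e-9)  # guard against a perfectly flat history
            if abs(value - mu) > self.threshold * sigma:
                decision = "alert"
        self.history.append(value)
        return decision
```

Here every reading, including anomalous ones, enters the window, so a sustained level shift is eventually absorbed into the baseline; excluding alerts from the history would make the monitor stricter but slower to adapt.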

The platform comprises two core components: the IOblend Designer and the IOblend Engine. The IOblend Designer is a desktop GUI used for designing, building, and testing data pipeline DAGs, producing metadata that describes the data pipelines. The IOblend Engine, the heart of the system, converts this metadata into Spark streaming jobs executed on any Spark cluster. Available in Developer and Enterprise suites, IOblend supports both local and remote engine operations, catering to a wide range of development and operational needs. It also facilitates collaborative development and pipeline versioning, making it a robust tool for modern data management and analytics.
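The split between a designer that emits metadata and an engine that executes it can be sketched in a few lines of plain Python. Everything here is hypothetical: the metadata layout, the operator names and `execute_pipeline` are invented for illustration and do not reflect IOblend's actual metadata format, and the engine runs Python functions rather than generating Spark jobs. The engine orders the DAG with the standard library's `graphlib.TopologicalSorter` (Python 3.9+) and dispatches each node to a registered operator.

```python
from graphlib import TopologicalSorter
from typing import Any, Callable, Dict, List

Rows = List[Dict[str, Any]]


# Hypothetical operator registry: the engine maps "op" names in metadata to code.
def op_read(inputs: List[Rows], params: Dict[str, Any]) -> Rows:
    return list(params["rows"])  # stand-in for reading from a real source


def op_filter(inputs: List[Rows], params: Dict[str, Any]) -> Rows:
    return [r for r in inputs[0] if r[params["field"]] >= params["min"]]


def op_join(inputs: List[Rows], params: Dict[str, Any]) -> Rows:
    key = params["key"]
    right = {r[key]: r for r in inputs[1]}
    return [{**left, **right[left[key]]} for left in inputs[0] if left[key] in right]


OPERATORS: Dict[str, Callable[[List[Rows], Dict[str, Any]], Rows]] = {
    "read": op_read, "filter": op_filter, "join": op_join,
}

# Hypothetical metadata, as a designer tool might emit it: nodes plus their inputs.
PIPELINE = {
    "nodes": {
        "orders": {"op": "read", "params": {"rows": [
            {"customer_id": 1, "total": 120.0}, {"customer_id": 2, "total": 15.0}]}},
        "customers": {"op": "read", "params": {"rows": [
            {"customer_id": 1, "name": "Acme"}, {"customer_id": 2, "name": "Globex"}]}},
        "big_orders": {"op": "filter", "inputs": ["orders"],
                       "params": {"field": "total", "min": 100.0}},
        "report": {"op": "join", "inputs": ["big_orders", "customers"],
                   "params": {"key": "customer_id"}},
    }
}


def execute_pipeline(metadata: Dict[str, Any]) -> Dict[str, Rows]:
    """Run every node after its inputs, in topological order of the DAG."""
    nodes = metadata["nodes"]
    graph = {name: set(spec.get("inputs", [])) for name, spec in nodes.items()}
    results: Dict[str, Rows] = {}
    for name in TopologicalSorter(graph).static_order():
        spec = nodes[name]
        inputs = [results[dep] for dep in spec.get("inputs", [])]  # keeps declared order
        results[name] = OPERATORS[spec["op"]](inputs, spec.get("params", {}))
    return results
```

Keeping the pipeline as data means the same description can be versioned, reviewed and executed by different engines, which is the general benefit of a metadata-driven design.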

Continuous Data Replication for DR and Continuity

Continuous Data Replication: for Business Continuity and DR 📝 Did you know? According to industry studies, the average cost of IT downtime is approximately £4,500 per minute. For a large enterprise, a single hour of data loss or system unavailability can translate into millions in lost revenue, legal penalties, and irreparable brand damage. The Pulse of

Smart Meter Data: Billing to Forecasting

Utilities: Smart Meter Data to Billing and Demand Forecasting 📋 Did You Know? The global roll-out of smart meters generates more data in a single day than most utility companies used to collect in an entire decade. While traditional meters were read once a month, or even once a quarter, smart meters transmit data at intervals

SCADA Streams to Reliability Analytics

Energy: SCADA Streams to Reliability Analytics 🔌 Did you know? The average modern wind turbine or smart substation generates roughly 1 to 2 terabytes of data every month. However, historically, less than 5% of that sensor data was actually used for decision-making. Most of it was simply discarded or “siloed” in SCADA systems, serving as a

Building Live ETA Pipelines for Fleet Operations

Logistics: Live ETA Prediction Pipelines from Fleet + Orders 🚚 Did you know? The “Last Mile” is famously the most expensive and inefficient part of the supply chain, often accounting for up to 53% of total shipping costs. The Evolution of Real-Time Logistics Live ETA (Estimated Time of Arrival) prediction pipelines represent the shift from reactive

DB2 CDC to Lakehouse Without Re-Platforming

From DB2 to Lakehouse: Real-Time CDC Without Re-Platforming 💻 Did you know? Mainframe systems like DB2 still process approximately 30 billion business transactions every single day. Despite the rush toward modern cloud architectures, the world’s most critical financial and logistical data often resides in these “legacy” environments, making them the silent engines of the global economy.

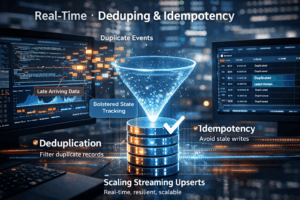

Real-Time Upserts: Deduping and Idempotency

Streaming Upserts Done Right: Deduping and Idempotency at Scale 💻 Did you know? In many high-velocity streaming environments, the “same” event can be sent or processed multiple times due to network retries or distributed system failures. The Art of the Upsert At its core, a streaming upsert (a portmanteau of “update” and “insert”) is the process of synchronising incoming data with an existing