I think most practitioners in the data world would agree that the core data mesh principles of decentralisation to improve data enablement are sound. Originally penned by Zhamak Dehghani, Data Mesh architecture is attracting a lot of attention, and rightly so.

However, there is a growing concern in the data industry regarding how the data mesh defines decentralisation, in particular the ‘scope of decentralisation’, as this determines the success of an enterprise data platform built on the concept of a data mesh.

In this article I’ll delve into what successful decentralisation for a data platform looks like and explain why complete decentralisation via a data mesh can cause more problems than it solves.

Data decentralisation is not a new paradigm. Many of us have been building data platforms with this theme for many years now, supported by architecture frameworks such as data fabrics, data vaults, data hubs, data grids, data virtualisation, etc. To be clear, these architectural frameworks do not deliver decentralised data platforms on their own but rather facilitate them when supported by the correct processes and technology (I will go into more detail on this later!).

Decentralisation (prior to the advent of the term ‘data mesh’) was driven to a great extent by the disappearance of the ‘colossal IT budget’ and the emergence of business domains being costed for their data projects. This drove mature data organisations to explore and start to invest in the following features of a distributed/decentralised data platform – let’s call it WADP for now (well architected data platform):

- Data platform infrastructure as a service, with storage, CPU, memory and services being costed at sub-account level and mapped to business domains.

- The adoption of agile resourcing delivery models, using constructs such as tribes and squads to federate resources across business domains in order to deliver business data functionality.

- DDA (data design authorities) and/or TDA (technical design authorities) being run by business data owners and technical owners to define common architecture, delivery, management (people, process and technology) and governance standards, all to be used as a guide for delivering business data functionality.

- Domain resources being embedded into data projects to promote business knowledge and improve success.

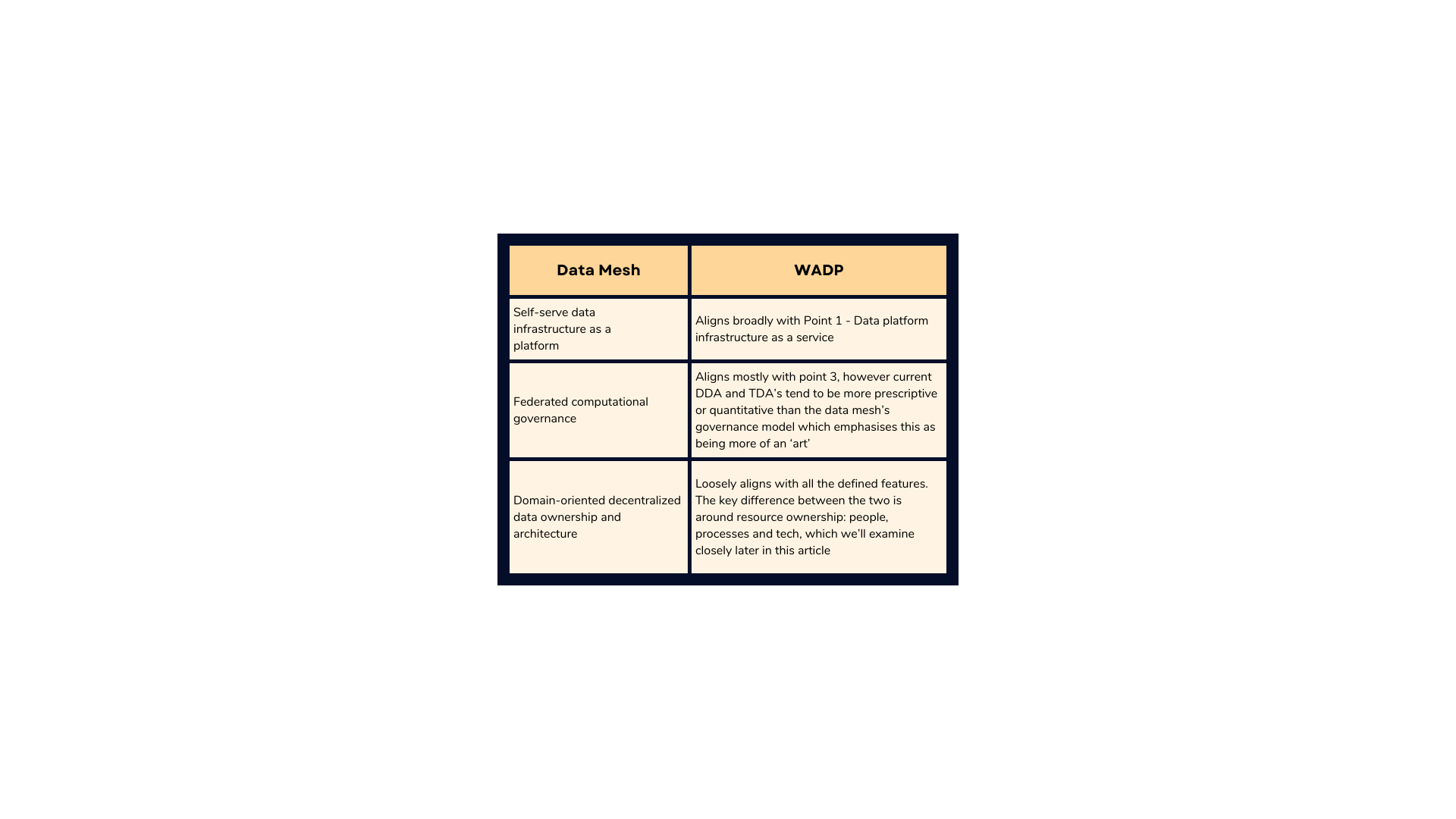

The above features look very similar to some of the core principles of a data mesh, as seen in the alignment map below.

Great, so we’ve defined some similarities between how WADPs were built prior to the advent of the ‘data mesh’ term and data mesh principles.

Despite this alignment, there are some deep-rooted differences, which I’ll get into below. But first, we need to recap what the data mesh and similar frameworks are trying to overcome.

What heavily contributed to the disappearance of the ‘colossal IT budget’ was IT’s inability to deliver the business’s data analytics needs on time and within budget – and sometimes at all! This inability to deliver was, and still is, predominantly a function of the following issues:

1. Bogged down with process: architectural, development and project management

IT teams used heavy ‘waterfall’-like processes for the design, management and development of data products. Even more ‘agile’-driven organisations are still slowed by heavy design and management processes: for example, dedicated architectural departments can impede the rate of development by insisting on the creation of an architectural pattern for everything. As an architect, I understand that pattern definition has its place, but a data squad should be able to implement something new without first having to define a new pattern (or an anti-pattern or edge pattern to an existing one) that conforms to enterprise architectural documentation templates and approval processes! Let’s validate, build and see if it’s reused – then define a pattern.

2. Lack of skilled data engineers

Irrespective of whether you’re building a data lake, data warehouse, data marts, all three or a hybrid approach (think lakehouse – best of all worlds), you need skilled engineers who can write data pipelines that extract (E), transform (T) and load (L) data (in whatever order) to generate your target output, irrespective of whether that output is persisted, passed on to another process or just resides in memory as part of your data virtualisation. Throw into the mix data federated across different cloud and on-prem platforms, plus the use of multiple after-the-fact toolsets to enforce data quality, master data management and data governance (especially around regulations such as the right to be forgotten, to name but one), and you need very skilled engineers, who are hard to find and hence costly. Now add kappa architecture (real-time and batch data being served by one pipeline rather than two) and your data engineers become even more akin to gold dust!

3. Lack of re-use

Every project seemed to follow the mantra of ‘we can do it better’, which meant little reuse of existing data assets, be that the data itself or the process that generated and governed it. This was also exacerbated by the lack of an enterprise metadata repository facilitating such reuse.

Note: the above list is not exhaustive but highlights some of the key failure points.

The above describes what bad looks like. Therefore, for any data product to succeed – irrespective of who is delivering it (a business domain or IT department) – these points need to be addressed in any design and implementation model that also promotes domain ownership.

When we examine these fail points against the data mesh’s core principles, we start to see some clear ‘Gotchas’ with this design paradigm. If not addressed, they will cause an enterprise data initiative to ultimately fail.

Let’s have a look at these Gotchas and potential solutions.

Data Mesh Gotcha 1: lack of skilled data engineering resources is still an issue

The data mesh acknowledges fail point 2 above – ‘lack of skilled resources’ – and stipulates that it is a driving factor for decentralisation. It states that the need for highly skilled data engineers, and the effort to maintain what it calls a ‘coupled pipeline’, are necessitated by the use of a centralised data platform. This is incorrect and thus highlights our first Gotcha.

The data mesh advocates ‘distributed pipelines as domain internal implementation’. This means the ETL that used to be conducted in the centralised platform for the domain-specific data is now done by the domain in its own node of the mesh, in other words in a data ecosystem specific to the domain. Therefore, the data mesh framework moves the responsibility for the creation and management of the domain’s data from a centralised IT team to a domain IT team.

This doesn’t reduce the complexity or number of data pipelines (assuming the centralised system contained no functionally duplicated pipelines). Neither does it reduce the demand from the rest of the business for the domain’s data. Therefore, it doesn’t reduce the need for highly skilled resources.

The issue of a lack of skilled data engineers still remains, which continues to cause the project backlog seen in fully centralised data platforms. All it does is move the problem down to the business domain.

Data Mesh Gotcha 2: data democratisation is not achieved

Gotcha 1 exposes Gotcha 2. There is an argument that the data mesh framework actually exacerbates the effects of the lack of skilled engineers by increasing the data engineering effort required to make the data mesh work.

Nodes on a data mesh are connected by domain engineering teams using APIs. This means that if a data user wishes to munge data from two other data domains into their own domain, their engineering team has to be engaged to write a data pipeline that pulls the data from the other domains’ APIs. The pipeline then needs to integrate that data into the local domain in the manner the data user wanted.

This approach once again creates an engineering backlog, when really what we should be doing is offering data democratisation – providing the data user the capability to combine the data from the three domains themselves, without having to know any of the technicalities around using APIs and/or writing code to build the data pipeline.
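As a rough illustration of the self-service alternative, the sketch below assumes each domain's data product is exposed as a table in a shared query layer, so a data user can combine three domains with a single declarative query. The in-memory SQLite database stands in for that layer, and every table and column name here is hypothetical.

```python
import sqlite3

# Hypothetical shared query layer: each domain publishes its data product
# as a readable table. All table and column names are illustrative only.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE sales_orders (customer_id INTEGER, order_value REAL);
    CREATE TABLE marketing_campaigns (customer_id INTEGER, campaign TEXT);
    CREATE TABLE finance_credit (customer_id INTEGER, credit_limit REAL);

    INSERT INTO sales_orders VALUES (1, 120.0), (1, 80.0), (2, 50.0);
    INSERT INTO marketing_campaigns VALUES (1, 'spring'), (2, 'autumn');
    INSERT INTO finance_credit VALUES (1, 1000.0), (2, 200.0);
""")


def combine_domains(connection):
    """Join three domain data products with one query, no bespoke pipeline."""
    query = """
        SELECT s.customer_id,
               m.campaign,
               SUM(s.order_value) AS total_orders,
               f.credit_limit
        FROM sales_orders AS s
        JOIN marketing_campaigns AS m ON m.customer_id = s.customer_id
        JOIN finance_credit AS f ON f.customer_id = s.customer_id
        GROUP BY s.customer_id, m.campaign, f.credit_limit
        ORDER BY s.customer_id
    """
    return connection.execute(query).fetchall()


rows = combine_domains(conn)
for row in rows:
    print(row)
```

The point of the sketch is that the data user only needs to know the published tables, not the APIs behind them; how a real platform would provide such a layer is outside the scope of this example.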

Data Mesh Gotcha 3: fragmented knowledge and skill sets

Localising data engineers to a domain goes against the grain of resourcing concepts like tribes and squads, which promote collegiality, learning and best practice amongst your data engineers.

By isolating and restricting data engineers to a domain, you restrict their capability to grow technically by learning/using the platforms and tech that other domains may be using (as encouraged by a federated and decentralised data platform).

This also stifles your data engineers’ ability to progress from a career perspective. The only career progression available is within their domain because of the specific engineering skills required by the domain’s technology stack. Ultimately, this leads to a high churn of engineering staff, exacerbating the issue of too few data engineers.

The best way to avoid this is to have a central data engineering tribe that all engineers belong to. Those engineers are then placed with the business domains to work on domain-specific projects. They stay with the domain for the duration of the project. Once the project is finished, they are moved into another domain.

Such an approach ensures all engineers get to experience and enjoy the wide tech stack a federated data platform offers. This also ensures that data engineers have a good understanding of the data across all the domains, making them more effective.

The approach also inadvertently promotes reuse of data assets. Being aware of and using a data asset is much more likely to invoke reuse than searching for a data asset in a catalogue.

Did I mention training? Having a central place responsible for the data engineering learning and training ensures a fair, consistent and ‘best-of-breed’ approach to training.

Data Mesh Gotcha 4: data governance is not an ‘art’

The data mesh framework doesn’t address the first issue that led to the downfall of the central IT approach: the onerous process, architecture and project management methodologies deployed to create and govern the data platforms.

Throwing off the shackles of heavy enterprise data architecture, top-down design and project management is imperative to avoid the mistakes and failings of previous data platforms and architecture frameworks. Conversely, we need to have the right level of control in our governance bodies, neither too rigid nor too loosely defined.

Architecture rigour and design, standards, delivery and BAU management are still needed and are vital for the success of a domain’s data product. This all needs to be clearly defined in the framework. Otherwise, the architecture will fail. It is more than an ‘art’ as defined in the data mesh: it is a prescriptive science that can and should be qualified and quantified.

Data Mesh Gotcha 5: data bottlenecks still remain

The data mesh defines the domains as owners of their data: they author and control it as a data product. This in principle is sound. However, the ‘scope’ of that data product in the current guise of a data mesh is a potential Gotcha.

The data mesh’s data product principle describes each domain as having ‘data product developer roles’. These developers are tasked with designing, creating and maintaining the domain’s data products, based upon its enterprise user requirements.

The issue of ‘scope’ with the above statement is that it implies that these developers/data engineers are responsible for meeting all enterprise-wide user data requirements for their respective domain. The obvious issues with this are as follows:

- It promotes the paradigm of data engineering dependency, with the risks around availability, cost and time that we all know about.

- It impedes data democracy at the data user level (i.e. data analysts and data scientists). We should enable them to discover data (at the correct grain) from different domains and generate new data for analytical purposes themselves, without having to rely on data engineers.

Data Mesh Gotcha 6: my data domain comes first

Another issue born from the ‘data product scope’ is business domain prioritisation. Business domains are laser-focussed on their business objectives. We often use the phrase, ‘business users don’t care how the data gets there, as long as it gets there, at the right time and with the expected quality’. By extension this is also true at the business domain level.

To be clear, the business user, and hence the business domain, is primarily concerned with the data it needs to serve its own domain: data that will better the business domain with respect to its own strategic objectives. This means that budgets, timescales and resource availability at the business domain are prioritised accordingly, leaving little room or incentive for the domain to be a better ‘data citizen’ and provide a data product that serves not just its own needs but the needs of the other business domains as well.

Consider the following use case: business domain A with finite data engineering resources needs an urgent new feature, while domain B also needs an urgent new feature on domain A’s data. Naturally, domain A’s feature will be prioritised first, potentially to the detriment of domain B.

The above conflict of interests can be avoided by bounding the data product’s scope to a granularity level. If domain A maintained its data product at an appropriate granularity (usually the rate of change afforded by its current business and system processes – ideally the lowest grain), then domain B (with appropriate access) could create its own data classes on top of it (I will describe this in detail in a later article). As a result, the above issue is resolved, making both domains ‘happy customers’.
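A minimal sketch of this idea follows, assuming domain A publishes its data product at order-line grain and domain B derives its own monthly view from it. The `OrderLine` record, the sample data and the `monthly_revenue_by_region` function are all hypothetical and only illustrate the separation of responsibilities.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class OrderLine:
    """Hypothetical lowest-grain record published by domain A."""
    order_date: date
    region: str
    amount: float


# Domain A's data product, maintained at the lowest grain (sample data).
DOMAIN_A_ORDER_LINES = [
    OrderLine(date(2023, 1, 5), "north", 100.0),
    OrderLine(date(2023, 1, 20), "north", 50.0),
    OrderLine(date(2023, 2, 3), "south", 70.0),
]


def monthly_revenue_by_region(lines):
    """Domain B's own derived view, built without a change request to domain A."""
    totals = defaultdict(float)
    for line in lines:
        key = (line.order_date.strftime("%Y-%m"), line.region)
        totals[key] += line.amount
    return dict(totals)


domain_b_view = monthly_revenue_by_region(DOMAIN_A_ORDER_LINES)
print(domain_b_view)
```

Because domain A only commits to the grain, domain B's new feature does not have to compete for a place in domain A's engineering backlog.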

None of the above is to say the premise of the data mesh is doomed. There are architectural fixes for all of the above Gotchas (some have already been mentioned in this article).

Domain data federation is a very sound approach and should definitely be considered by organisations to drive their data estates, provided the above-mentioned Gotchas are addressed appropriately.

In our follow-up articles we will define in detail how to address the Gotchas and ensure the ‘spirit’ of a data mesh can be delivered successfully and cost-effectively.
