Airline data is very rich and comes from a multitude of sources. That data is often siloed, difficult to access and tends to be manually collated and curated by the SMEs. Why?

❖ Airlines work with multiple separate systems (Lido/AIMS/ACARS/Navitaire/AMOS/TM1/Sabre/CRM/FMS/FRMS/etc.) that do not “talk” to each other and do not provide standardised data access for analytics. On top of that, you get a myriad of files, documents and reports spread across the entire organisation.

❖ You have created processes and workarounds to access and work with that information, often in the form of various spreadsheets, external narrow-focus solutions or grassroots SQL tables. A spaghetti of pipelines, tables and documents.

❖ As a result, your analysts and engineers spend significant time and effort just locating, preparing and validating relevant data before they can focus on insights. This is especially true for side projects or any analysis involving data sources that are new to them.

❖ Larger-scope data initiatives are hard for analysts to implement themselves, for example a productionised data integration (creating a single-source lake/warehouse) or a new automated app/dashboard/report that collates multiple data sources. These require extensive IT involvement, time and cost. Often, such initiatives need to go through layers of scoping, procurement, budget approvals and resource sign-offs, followed by lengthy development times.

What this means is that your business spends a lot of time waiting for things to happen. Rather than working with powerful data insights, you are hindered by legacy processes and technologies. And when you attempt to address these, you normally face large IT bills and long development times.

Ultimately, you are missing valuable opportunities to improve revenue, reduce costs or enhance operational efficiency.

Here’s one example of how doing things differently can help you unlock value.

Fuel management and optimisation are paramount for airlines, as fuel is one of their biggest expenses: 15-30% of total costs, depending on equipment and network. In addition, airlines have a legal obligation to monitor and report their emissions very accurately based on fuel consumption (EU ETS).

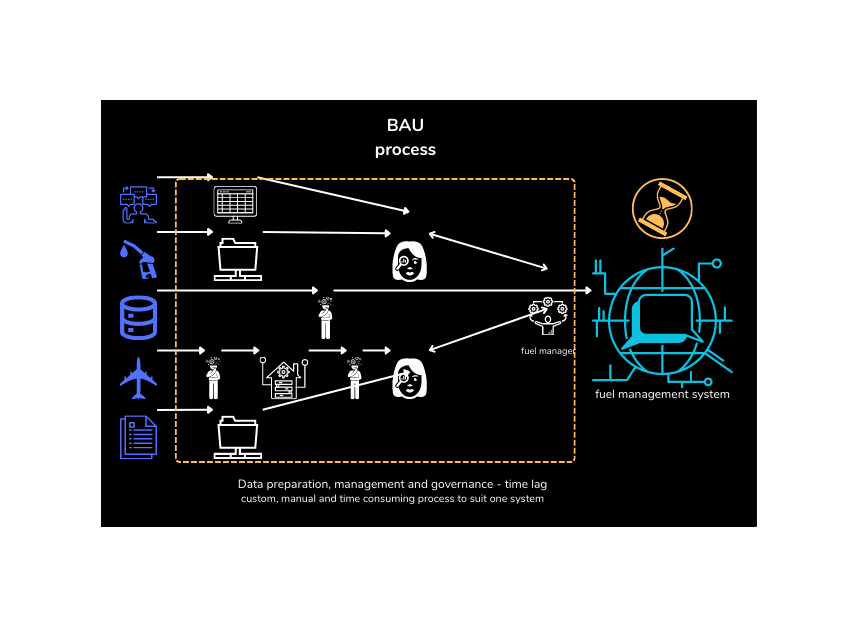

Process as is:

❖ Most airlines will have some form of fuel management system in place (integrated solutions or in-house tools) to drive fuel saving initiatives, such as single engine taxi, min uplift, tankering, optimised flight paths, pilot training, etc.

❖ On top of that, airlines will collate and report emissions.

❖ Fuel data comes from a number of sources and needs to be recorded, checked and reconciled: aircraft systems, flight planning, DCS, flight logs and the fueller.

❖ A fuel manager (software and/or person) processes this data as it arrives in periodic batches and varying formats, and compiles it into standardised reports, which are then used for reporting, insights and financials. Because the data is always in arrears, it cannot be used for real-time monitoring, planning or forecasting.

❖ Further checks are performed by the fuel procurement/finance team, who compare the fuel bill against what was uplifted and used.

❖ Data completeness and quality issues need to be investigated manually and are always time-consuming. Why is this record missing? Why was this uplift different from plan? Is this number in kilos, gallons or lbs?

❖ Implementing and operating a fuel management system is a time-consuming and expensive exercise. Once you set one up, it is rigidly coded to your spec. It is very difficult to evolve functionality, upgrade or switch to another system if your requirements shift.
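To make the unit question above concrete, here is a minimal Python sketch of normalising fuel quantities from different sources to kilograms before any reconciliation. The `FuelRecord` structure, the unit codes and the flight numbers are hypothetical, chosen purely for illustration. The default density of 0.8 kg/L is an assumed nominal figure for jet fuel; in practice you would use the density recorded on the fuel ticket.

```python
from dataclasses import dataclass
from typing import Optional

# Exact unit definitions
KG_PER_LB = 0.45359237
LITRES_PER_US_GALLON = 3.785411784

# Assumption: nominal jet fuel density, used only when the source gives none
DEFAULT_DENSITY_KG_PER_L = 0.8


@dataclass
class FuelRecord:
    """Hypothetical record layout for a single fuel figure from one source."""
    flight: str
    source: str
    quantity: float
    unit: str  # "kg", "lb", "l" or "usg" (US gallons)
    density_kg_per_l: Optional[float] = None


def to_kg(record: FuelRecord) -> float:
    """Convert a fuel quantity to kilograms; fail loudly on unknown units."""
    unit = record.unit.strip().lower()
    density = record.density_kg_per_l or DEFAULT_DENSITY_KG_PER_L
    if unit == "kg":
        return record.quantity
    if unit == "lb":
        return record.quantity * KG_PER_LB
    if unit == "l":
        return record.quantity * density
    if unit == "usg":
        return record.quantity * LITRES_PER_US_GALLON * density
    raise ValueError(f"Unknown fuel unit: {record.unit!r}")


if __name__ == "__main__":
    records = [
        FuelRecord("IO101", "flight plan", 5000, "kg"),
        FuelRecord("IO101", "fueller", 1650, "USG", density_kg_per_l=0.79),
        FuelRecord("IO101", "flight log", 11000, "lb"),
    ]
    for r in records:
        print(f"{r.flight} {r.source}: {to_kg(r):.0f} kg")
```

Raising an error on an unrecognised unit, rather than guessing, is deliberate: a silently misread unit is exactly the kind of issue that otherwise surfaces weeks later in the fuel bill.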

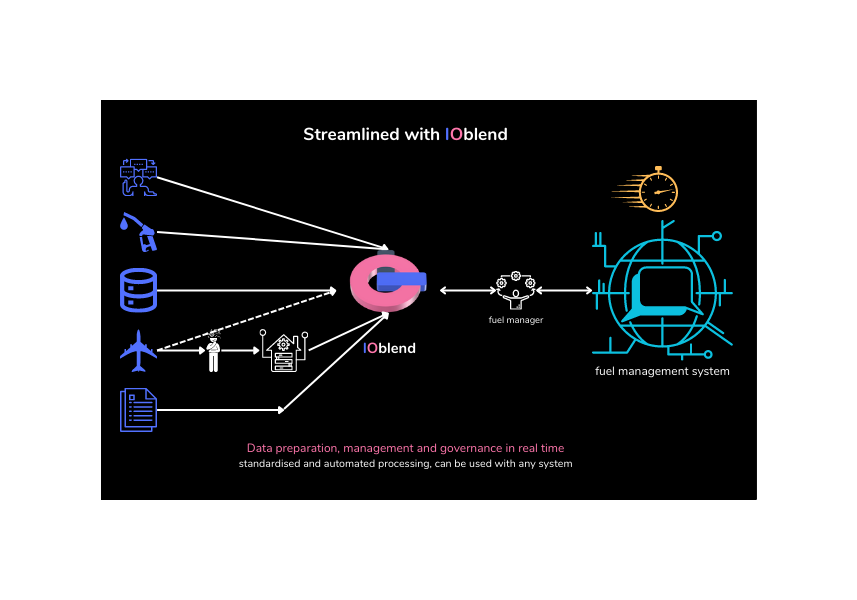

To alleviate this situation, you need an automated underlying layer that manages all your operational data in a standardised manner, with connections to your data sources and fuel management systems. Not another lake or a warehouse, but a flexible and cost-effective DataOps platform that can make use of your existing systems and capabilities. IOblend was designed for just such tasks.

Process using IOblend:

❖ IOblend extracts digital data automatically, regardless of the source (files, systems, tables, documents) and independently of the fuel management solution. Add and remove sources as you see fit.

❖ The data is monitored in real time, providing you with full visibility of “who/what/where/when/why”. You control your data, business logic and security protocols, and expose only the data you need to the external fuel management systems.

❖ The SMEs apply the desired business logic with SQL when setting up the process initially (or when amending it later for new insights). There is no need to code the dataflows manually, ever. You can easily update requirements without costly rework of the logic by the engineers.

❖ IOblend automatically processes all relevant data, then validates and reconciles it against quality metrics. The SMEs only get involved by exception, to investigate, deep-dive into and analyse the outliers.

❖ IOblend enables you to work with the fuel data in real time. We stream all records as they arrive, giving you the power of proactive analytics – update your plans on the fly. Proactive fuel management can deliver additional savings to your bottom line.

❖ Data logic and schemas are easily transferable to any external analytics tools as required, so you can easily upgrade systems to grow with your business. There is no need to buy additional data management or ETL tools or re-code new data pipelines.

❖ And the best part is that since IOblend is a DataOps platform, not a one-function tool, you can apply the same software to all other data areas in your organisation at no additional cost!
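As an illustration of what “business logic with SQL” and “by exception” can look like for fuel data, here is a minimal sketch that reconciles planned, uplifted and invoiced fuel and returns only the flights that need a human look. It uses plain Python with an in-memory SQLite database, not IOblend's own interface. The table layout, flight numbers and the 2% tolerance are assumptions made up for the example.

```python
import sqlite3

# Assumption: deviations above 2% are worth an SME's attention
TOLERANCE_PCT = 2.0

# The SME-owned business logic: which records count as exceptions, and why
EXCEPTIONS_SQL = """
    SELECT flight,
           CASE
               WHEN uplift_kg IS NULL OR invoiced_kg IS NULL
                   THEN 'missing record'
               WHEN ABS(invoiced_kg - uplift_kg) * 100.0 / uplift_kg > :tol
                   THEN 'invoice mismatch'
               ELSE 'uplift differs from plan'
           END AS reason
    FROM fuel
    WHERE uplift_kg IS NULL
       OR invoiced_kg IS NULL
       OR ABS(invoiced_kg - uplift_kg) * 100.0 / uplift_kg > :tol
       OR ABS(uplift_kg - planned_kg) * 100.0 / planned_kg > :tol
    ORDER BY flight
"""


def find_exceptions(rows, tolerance_pct=TOLERANCE_PCT):
    """Load (flight, planned, uplift, invoiced) rows and return the outliers."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute(
            "CREATE TABLE fuel ("
            "flight TEXT, planned_kg REAL, uplift_kg REAL, invoiced_kg REAL)"
        )
        conn.executemany("INSERT INTO fuel VALUES (?, ?, ?, ?)", rows)
        return conn.execute(EXCEPTIONS_SQL, {"tol": tolerance_pct}).fetchall()
    finally:
        conn.close()


if __name__ == "__main__":
    # Hypothetical sample data, all quantities already in kg
    sample = [
        ("IO101", 5000, 5050, 5050),  # within tolerance, not reported
        ("IO102", 6000, 6600, 6600),  # uplift 10% above plan
        ("IO103", 4000, 4020, 4500),  # invoice does not match uplift
        ("IO104", 7000, None, 7000),  # uplift record never arrived
    ]
    for flight, reason in find_exceptions(sample):
        print(flight, reason)
```

Because the rule lives in a single SQL statement, changing the tolerance or adding a new check means editing the query, not rebuilding a pipeline.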

One software for all your data engineering and data management needs.

Get in touch today and we’ll show you how you can step-change your data capabilities.

Continuous Data Replication for DR and Continuity

Continuous Data Replication: for Business Continuity and DR 📝 Did you know? According to industry studies, the average cost of IT downtime is approximately £4,500 per minute. For a large enterprise, a single hour of data loss or system unavailability can translate into millions in lost revenue, legal penalties, and irreparable brand damage. The Pulse of

Smart Meter Data: Billing to Forecasting

Utilities: Smart Meter Data to Billing and Demand Forecasting 📋 Did You Know? The global roll-out of smart meters generates more data in a single day than most utility companies used to collect in an entire decade. While traditional meters were read once a month, or even once a quarter, smart meters transmit data at intervals

SCADA Streams to Reliability Analytics

Energy: SCADA Streams to Reliability Analytics 🔌 Did you know? The average modern wind turbine or smart substation generates roughly 1 to 2 terabytes of data every month. However, historically, less than 5% of that sensor data was actually used for decision-making. Most of it was simply discarded or “siloed” in SCADA systems, serving as a

Building Live ETA Pipelines for Fleet Operations

Logistics: Live ETA Prediction Pipelines from Fleet + Orders 🚚 Did you know? The “Last Mile” is famously the most expensive and inefficient part of the supply chain, often accounting for up to 53% of total shipping costs. The Evolution of Real-Time Logistics Live ETA (Estimated Time of Arrival) prediction pipelines represent the shift from reactive

DB2 CDC to Lakehouse Without Re-Platforming

From DB2 to Lakehouse: Real-Time CDC Without Re-Platforming 💻 Did you know? Mainframe systems like DB2 still process approximately 30 billion business transactions every single day. Despite the rush toward modern cloud architectures, the world’s most critical financial and logistical data often resides in these “legacy” environments, making them the silent engines of the global economy.

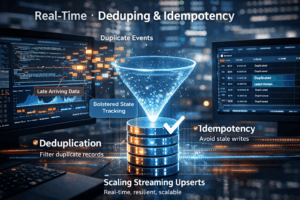

Real-Time Upserts: Deduping and Idempotency

Streaming Upserts Done Right: Deduping and Idempotency at Scale 💻 Did you know? In many high-velocity streaming environments, the “same” event can be sent or processed multiple times due to network retries or distributed system failures. The Art of the Upsert At its core, a streaming upsert (a portmanteau of “update” and “insert”) is the process of synchronising incoming data with an existing