Real-time Data Integration:

IOblend:

- Supports real-time, production-grade data pipelines using Apache Spark with proprietary tech enhancements.

- Integrates streaming (transactional event) and batch data equally well, thanks to its Kappa architecture.

Talend:

- Offers real-time data integration features but relies on a combination of batch and real-time processing.
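To make the Kappa idea above concrete, here is a minimal sketch in plain Python. It is not IOblend's or Talend's implementation; the `flag_large` function, the event fields, and the 1000-pence threshold are all hypothetical. The point it illustrates is that a Kappa-style design keeps one processing path and treats a batch as a bounded stream replayed through it.

```python
from typing import Iterable, Iterator


def flag_large(events: Iterable[dict]) -> Iterator[dict]:
    """Single processing path: the same logic runs for every event,
    regardless of whether the source is bounded or unbounded."""
    for event in events:
        # Hypothetical rule: mark transactions of 1000 pence or more.
        yield {**event, "is_large": event["amount_pence"] >= 1000}


# A batch source is just a bounded stream replayed from storage.
batch_source = [
    {"id": 1, "amount_pence": 1250},
    {"id": 2, "amount_pence": 399},
]


def live_source() -> Iterator[dict]:
    """Stand-in for an unbounded event feed (hypothetical)."""
    yield {"id": 3, "amount_pence": 10000}


# Both sources go through the identical function; no separate batch layer.
batch_result = list(flag_large(batch_source))
stream_result = list(flag_large(live_source()))
```

Because both sources share one code path, a change to the rule is made once and applies to historical replays and live events alike.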

Low-code/No-code Development:

IOblend:

- Provides low-code/no-code development, accelerating data migration and reducing manual data wrangling.

Talend:

- Features a drag-and-drop designer for building data integration and ETL processes, but may require more configuration and scripting for certain complex tasks.

Data Architecture:

IOblend:

- Enables delivery of both centralized and federated data architectures.

Talend:

- Primarily based on a centralized data architecture, although it can support federated designs with appropriate configurations.

Performance & Scalability:

IOblend:

- Boasts low-latency, massively parallelized data processing with speeds exceeding 10 million transactions per second.

Talend:

- Provides scalable data integration solutions, but performance can vary based on the underlying infrastructure and configuration.

Partnerships & Cloud Integration:

IOblend:

- Integrates in real time with Snowflake, AWS, Google Cloud, and Azure products, and is an ISV technology partner of Snowflake and Microsoft.

Talend:

- Offers cloud integration with various platforms including AWS, Google Cloud, Azure, and Snowflake, among others.

User Interface & Design:

IOblend:

- Comprises two functional parts: IOblend Designer and IOblend Engine.

- IOblend Designer is for designing, building, and testing data pipeline DAGs.

- IOblend Engine performs the calculations and can be flexibly deployed on-prem, in the cloud, or on dev machines via containers.

Talend:

- Provides a unified studio for designing and executing data integration jobs.
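For readers unfamiliar with the term, a pipeline DAG (directed acyclic graph) is a set of steps plus the dependencies between them. The sketch below uses Python's standard-library `graphlib` to derive a valid execution order. The step names are hypothetical, and this is only an illustration of the concept, not how either product represents or schedules its pipelines.

```python
from graphlib import TopologicalSorter

# Each key is a pipeline step; its value is the set of steps it depends on.
# Step names are illustrative only.
pipeline_dag = {
    "clean": {"extract"},
    "dedupe": {"clean"},
    "load": {"dedupe"},
}

# static_order() yields the steps so that every dependency runs first.
execution_order = list(TopologicalSorter(pipeline_dag).static_order())

for step in execution_order:
    print(f"running step: {step}")
```

A designer tool lets you draw this graph visually, while an engine is responsible for running the steps in a dependency-respecting order.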

Data Management & Governance:

IOblend:

- Manages data throughout its journey with features like record-level lineage, CDC, metadata, schema, de-duping, cataloguing, etc.

- These features are built into every data pipeline automatically, with flexible configuration, so there is no need to purchase additional modules.

Talend:

- Also offers robust data governance and data quality tools, but the features may differ in implementation and granularity.
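As a rough illustration of what de-duping combined with record-level lineage means in practice, the sketch below drops repeated records by key and stamps each surviving record with where it came from. The function name, the `_lineage` field, and the source label are assumptions made for this example; neither vendor's actual metadata format is shown here.

```python
def dedupe_with_lineage(records, key, source):
    """Keep the first record seen for each key and attach lineage metadata.

    `_lineage` is a hypothetical field recording the source name and the
    record's position in the incoming feed.
    """
    seen = {}
    for position, record in enumerate(records):
        record_key = record[key]
        if record_key in seen:
            continue  # duplicate: an earlier record with this key already won
        seen[record_key] = {
            **record,
            "_lineage": {"source": source, "position": position},
        }
    return list(seen.values())


incoming = [
    {"id": 1, "value": "a"},
    {"id": 1, "value": "a"},  # duplicate delivery of the same record
    {"id": 2, "value": "b"},
]

clean = dedupe_with_lineage(incoming, key="id", source="orders_feed")
```

With lineage attached at the record level, any row in the output can be traced back to the exact input it originated from.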

Cost & Licensing:

IOblend:

- The Developer Edition is free, whereas the Enterprise Suite requires a paid annual license.

Talend:

- Provides a free community version (Talend Open Studio) and has premium versions that come at a cost.

Deployment & Flexibility:

IOblend:

- Can operate on any cloud, on-prem, and hybrid environment.

- Comes in two flavours: Developer Edition and Enterprise Edition.

Talend:

- Flexible deployment options across cloud and on-prem environments.

Community & Support:

IOblend:

- As a relatively new solution, the community is still small. Developer Edition support is online. Enterprise Edition customers receive premium support.

Talend:

- Has a large community (Talend Open Studio) and offers premium support for its enterprise users.

In conclusion, IOblend focuses on real-time data integration with low-code/no-code solutions using Apache Spark and is tailored for more modern data needs, especially in operational analytics.

On the other hand, Talend, being a more established player, offers a wide range of features suitable for various integration scenarios. The choice between the two will depend on the specific needs, infrastructure, and preferences of the enterprise.

Continuous Data Replication for DR and Continuity

Continuous Data Replication: for Business Continuity and DR 📝 Did you know? According to industry studies, the average cost of IT downtime is approximately £4,500 per minute. For a large enterprise, a single hour of data loss or system unavailability can translate into millions in lost revenue, legal penalties, and irreparable brand damage. The Pulse of

Smart Meter Data: Billing to Forecasting

Utilities: Smart Meter Data to Billing and Demand Forecasting 📋 Did You Know? The global roll-out of smart meters generates more data in a single day than most utility companies used to collect in an entire decade. While traditional meters were read once a month, or even once a quarter, smart meters transmit data at intervals

SCADA Streams to Reliability Analytics

Energy: SCADA Streams to Reliability Analytics 🔌 Did you know? The average modern wind turbine or smart substation generates roughly 1 to 2 terabytes of data every month. However, historically, less than 5% of that sensor data was actually used for decision-making. Most of it was simply discarded or “siloed” in SCADA systems, serving as a

Building Live ETA Pipelines for Fleet Operations

Logistics: Live ETA Prediction Pipelines from Fleet + Orders 🚚 Did you know? The “Last Mile” is famously the most expensive and inefficient part of the supply chain, often accounting for up to 53% of total shipping costs. The Evolution of Real-Time Logistics Live ETA (Estimated Time of Arrival) prediction pipelines represent the shift from reactive

DB2 CDC to Lakehouse Without Re-Platforming

From DB2 to Lakehouse: Real-Time CDC Without Re-Platforming 💻 Did you know? Mainframe systems like DB2 still process approximately 30 billion business transactions every single day. Despite the rush toward modern cloud architectures, the world’s most critical financial and logistical data often resides in these “legacy” environments, making them the silent engines of the global economy.

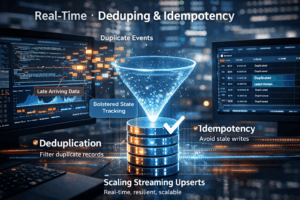

Real-Time Upserts: Deduping and Idempotency

Streaming Upserts Done Right: Deduping and Idempotency at Scale 💻 Did you know? In many high-velocity streaming environments, the “same” event can be sent or processed multiple times due to network retries or distributed system failures. The Art of the Upsert At its core, a streaming upsert (a portmanteau of “update” and “insert”) is the process of synchronising incoming data with an existing