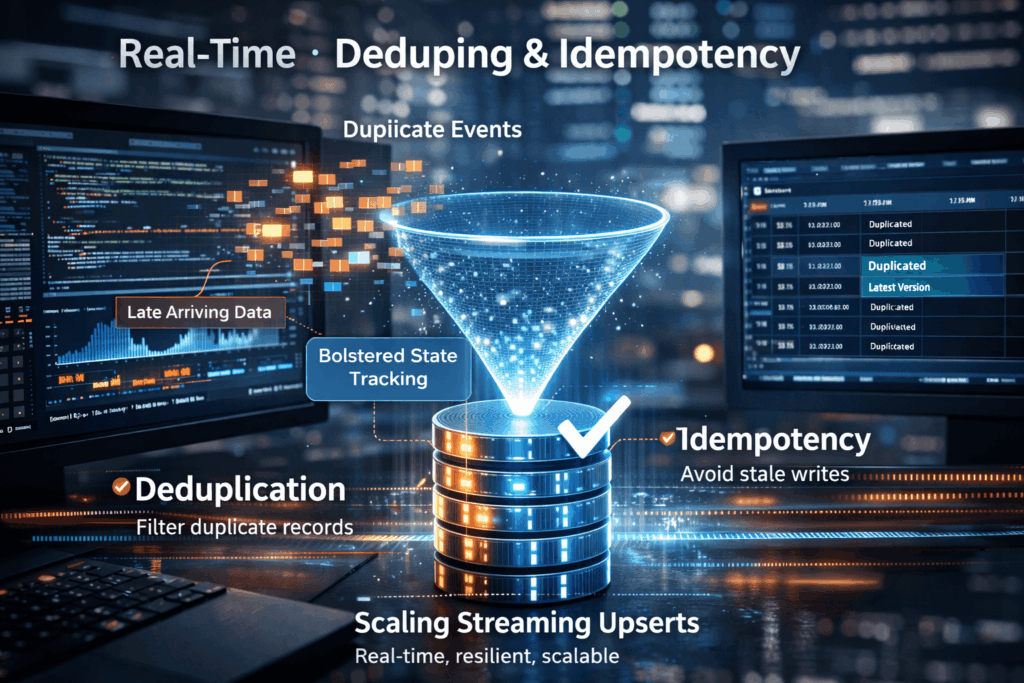

Streaming Upserts Done Right: Deduping and Idempotency at Scale

💻 Did you know? In many high-velocity streaming environments, the “same” event can be sent or processed multiple times due to network retries or distributed system failures.

The Art of the Upsert

At its core, a streaming upsert (a portmanteau of “update” and “insert”) is the process of synchronising incoming data with an existing dataset in real time. If a record with a specific primary key already exists, it is updated; if not, it is created.
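As a rough illustration of the update-or-create behaviour described above, here is a minimal sketch using Python's built-in `sqlite3` module and SQLite's `INSERT ... ON CONFLICT DO UPDATE` clause (available in SQLite 3.24 and later). The `customers` table, its columns, and the `upsert_customer` helper are hypothetical names chosen for the example; a production stream would target a very different storage engine.

```python
import sqlite3

# In-memory database with a hypothetical table keyed on a primary key.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id TEXT PRIMARY KEY, email TEXT)")

def upsert_customer(conn, customer_id, email):
    # Insert the row, or update it in place if the primary key already exists.
    conn.execute(
        """
        INSERT INTO customers (id, email) VALUES (?, ?)
        ON CONFLICT(id) DO UPDATE SET email = excluded.email
        """,
        (customer_id, email),
    )

upsert_customer(conn, "c-1", "old@example.com")  # no row yet: created
upsert_customer(conn, "c-1", "new@example.com")  # row exists: updated
rows = conn.execute("SELECT id, email FROM customers").fetchall()
```

After both calls the table holds a single row for `c-1` carrying the newer email address.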

To do this “right” at scale, two concepts are non-negotiable:

Deduplication: Removing redundant copies of the same record before they reach the storage layer.

Idempotency: Ensuring that performing an operation multiple times has the same effect as performing it once.
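To make the two concepts concrete, the sketch below applies a small batch that contains a retried event. It assumes every event carries a unique `event_id` assigned by the producer; that field, along with `apply_events`, `key` and `value`, is a hypothetical name used only for illustration.

```python
def apply_events(store, seen_ids, events):
    """Apply events to `store`, skipping any event ID already processed."""
    for event in events:
        if event["event_id"] in seen_ids:
            continue  # deduplication: this delivery is a repeat
        seen_ids.add(event["event_id"])
        # Idempotent write: setting a key to a value can be repeated safely,
        # unlike an increment such as store[key] += amount.
        store[event["key"]] = event["value"]
    return store

events = [
    {"event_id": "e1", "key": "c-1", "value": {"tier": "silver"}},
    {"event_id": "e2", "key": "c-1", "value": {"tier": "gold"}},
    {"event_id": "e1", "key": "c-1", "value": {"tier": "silver"}},  # retry of e1
]

store, seen = {}, set()
apply_events(store, seen, events)
first_pass = dict(store)
apply_events(store, seen, events)  # replaying the whole batch changes nothing
```

Without the `seen_ids` check, the retried `e1` would overwrite the newer `gold` value with `silver`. With it, replaying the batch any number of times leaves the store unchanged.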

The Scalability Wall: Why Businesses Struggle

Most businesses start with simple batch updates, but as they move toward real-time insights, they hit a wall. On a distributed streaming platform (like Kafka or Kinesis), ordering is typically guaranteed only within a single partition or shard, so events often arrive out of order overall. This leads to several critical issues:

- Late-Arriving Data: An older version of a customer’s profile might arrive after a newer version. If the system blindly upserts, it “downgrades” the data to an incorrect, stale state.

- The “Double Bubble” Problem: During system spikes or restarts, producers often resend batches. Without a robust state store to track what has already been processed, the downstream database suffers from bloated storage and inaccurate analytics.

- Performance Bottlenecks: Checking for the existence of a record in a multi-terabyte table before every single write is computationally expensive. Traditional databases often slow to a crawl under the high-IOPS (Input/Output Operations Per Second) demand of a true streaming upsert.
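One common way to guard against the first two issues is to carry a version or event timestamp on every record and let the write itself reject stale data. The sketch below shows the idea with SQLite's conditional upsert (SQLite 3.24 or later); the `profiles` table, the `updated_at` column and the `guarded_upsert` helper are assumptions made for the example, not a prescribed schema.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE profiles (id TEXT PRIMARY KEY, email TEXT, updated_at INTEGER)"
)

def guarded_upsert(conn, profile_id, email, updated_at):
    # One statement, no prior SELECT: the WHERE clause rejects stale versions.
    conn.execute(
        """
        INSERT INTO profiles (id, email, updated_at) VALUES (?, ?, ?)
        ON CONFLICT(id) DO UPDATE SET
            email = excluded.email,
            updated_at = excluded.updated_at
        WHERE excluded.updated_at > profiles.updated_at
        """,
        (profile_id, email, updated_at),
    )

guarded_upsert(conn, "c-1", "new@example.com", 200)  # newer version arrives first
guarded_upsert(conn, "c-1", "old@example.com", 100)  # late, older version is ignored
guarded_upsert(conn, "c-1", "new@example.com", 200)  # resent duplicate is a no-op
result = conn.execute("SELECT email, updated_at FROM profiles").fetchall()
```

Folding the check into the write removes the separate read-before-write round trip, although the database still performs a primary-key lookup internally, so this eases the bottleneck rather than eliminating it.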

Mastering the Stream with IOblend

IOblend solves the complexity of streaming upserts by shifting the heavy lifting away from the database and into a high-performance, “AI-Forward” data engineering tier.

Instead of writing complex, custom Spark or Flink scripts to manage state and watermarking, IOblend provides a unified interface to handle real-time data synchronisation. It natively manages:

- Automated Deduplication: Identifying and discarding redundant events at the ingestion point to save on downstream costs.

- Stateful Processing: Ensuring idempotency by keeping track of the latest version of every record, regardless of the order in which they arrive.

- Schema Evolution: Seamlessly handling changes in data structure without breaking the streaming pipeline.
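For readers curious what deduplication state looks like when hand-rolled, the generic sketch below keeps event IDs for a bounded window of event time and evicts them as the watermark advances. It is not IOblend's API or implementation; `DedupWindow`, `ttl` and the integer timestamps are hypothetical and exist only to show the general technique and its trade-off.

```python
class DedupWindow:
    """Remember event IDs for `ttl` seconds of event time, then forget them."""

    def __init__(self, ttl):
        self.ttl = ttl
        self.seen = {}       # event_id -> event timestamp
        self.watermark = 0   # highest event timestamp observed so far

    def is_duplicate(self, event_id, ts):
        # Advance the watermark, drop expired IDs, then check membership.
        self.watermark = max(self.watermark, ts)
        self._evict()
        if event_id in self.seen:
            return True
        self.seen[event_id] = ts
        return False

    def _evict(self):
        # Forget any ID whose timestamp falls behind the watermark by more than ttl.
        cutoff = self.watermark - self.ttl
        expired = [eid for eid, ts in self.seen.items() if ts < cutoff]
        for eid in expired:
            del self.seen[eid]

window = DedupWindow(ttl=60)
results = [
    window.is_duplicate("e1", 10),   # first sighting
    window.is_duplicate("e1", 20),   # repeat inside the window
    window.is_duplicate("e2", 100),  # advances the watermark and evicts e1
    window.is_duplicate("e1", 105),  # e1 was forgotten, so it passes again
]
```

The last call shows the trade-off: a bounded window keeps memory in check, but a duplicate that arrives after its ID has expired slips through, which is why such a window is usually paired with an idempotent or version-guarded write downstream.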

By using IOblend’s advanced CDC (Change Data Capture) and streaming capabilities, businesses can move from fragile, “bolt-on” deduplication to a resilient, enterprise-grade data mesh that guarantees accuracy at any scale.

Don’t let duplicate data dilute your insights. Streamline your future with IOblend.
