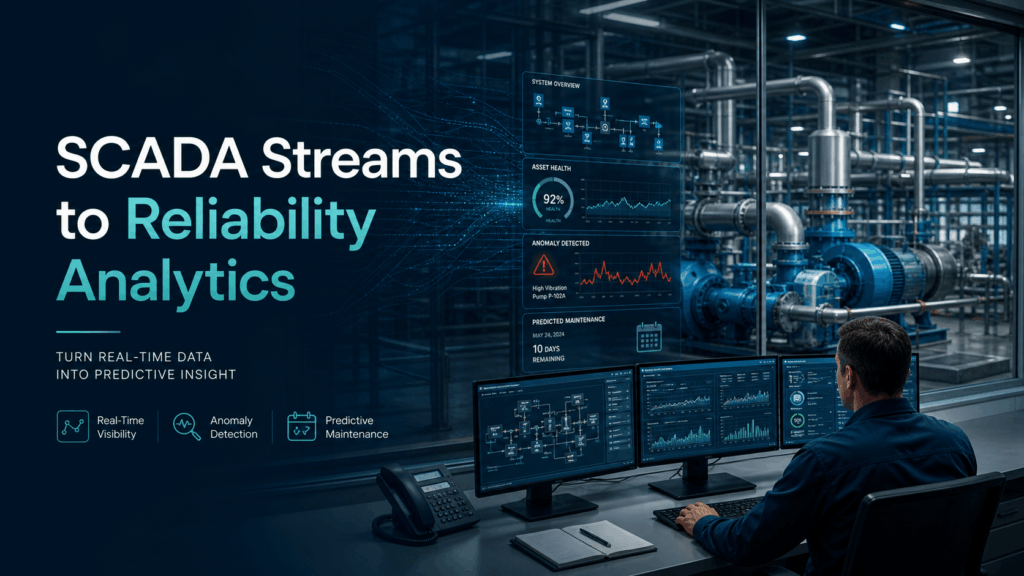

Energy: SCADA Streams to Reliability Analytics

🔌 Did you know? The average modern wind turbine or smart substation generates roughly 1 to 2 terabytes of data every month. However, historically, less than 5% of that sensor data was actually used for decision-making. Most of it was simply discarded or “siloed” in SCADA systems, serving as a digital graveyard rather than a source of operational intelligence.

From Streams to Insights

At its core, the transition from SCADA (Supervisory Control and Data Acquisition) streams to reliability analytics is about turning raw telemetry into foresight. SCADA systems are excellent at real-time monitoring: they tell you whether a breaker is open or a turbine is spinning. Reliability analytics, by contrast, looks at the “health” of the asset over time. It consumes high-frequency streams of temperature, vibration, and voltage to predict component failures before they happen, moving the industry from reactive repairs to proactive maintenance.
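To make the idea of asset “health” concrete, here is a minimal sketch of one common approach: comparing each new reading against a trailing baseline and flagging large deviations. It is an illustration only, not a production reliability model. The function name, the window size, the threshold, and the sample bearing temperatures are all assumptions made for this example.

```python
from collections import deque
from statistics import mean, stdev


def rolling_zscore_alerts(readings, window=5, threshold=3.0):
    """Flag readings that deviate strongly from the trailing window.

    Returns a list of (index, value, z_score) tuples.
    """
    history = deque(maxlen=window)  # trailing baseline of recent readings
    alerts = []
    for i, value in enumerate(readings):
        if len(history) == window:
            mu = mean(history)
            sigma = stdev(history)
            # Skip flat windows to avoid dividing by zero.
            if sigma > 0:
                z = (value - mu) / sigma
                if abs(z) >= threshold:
                    alerts.append((i, value, round(z, 2)))
        history.append(value)
    return alerts


# Hypothetical bearing temperatures in degrees Celsius, one per polling cycle.
bearing_temps_c = [61.0, 61.4, 60.8, 61.2, 61.1, 61.3, 60.9, 68.5, 61.0]
alerts = rolling_zscore_alerts(bearing_temps_c)
for index, value, z in alerts:
    print(f"reading {index}: {value} C deviates from baseline (z={z})")
```

A real reliability model would usually combine several signals and account for load and ambient conditions, but the pattern is the same: a baseline built from history, and a score that says how unusual the latest reading is.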

The Data Engineering Bottleneck

For most energy providers, the hurdle isn’t a lack of data, but the sheer complexity of moving it. SCADA data is notoriously difficult to work with; it often arrives in proprietary formats, via slow polling intervals, or through legacy protocols that don’t play well with modern cloud environments.

Data teams face “The Three Silos”:

- Format Fragmentation: Mixing time-series data from sensors with relational data from Enterprise Asset Management (EAM) systems.

- Latency Gaps: By the time a data engineer builds a manual pipeline to clean the SCADA noise, the “real-time” window for preventing a transformer blowout has already closed.

- Governance Debt: Ensuring that sensitive grid data remains encrypted and compliant while moving across pipelines is often a manual, error-prone process.
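The format fragmentation problem can be illustrated with a small "as-of" join: each time-stamped sensor reading is matched to the most recent maintenance record for the same asset. This is a sketch under stated assumptions. The asset IDs, field names, and work orders are hypothetical, and a real EAM system would expose this data through its own schema.

```python
from bisect import bisect_right
from datetime import datetime

# Hypothetical EAM work orders: asset_id -> [(completed_at, description)],
# sorted by completion time.
work_orders = {
    "TX-101": [
        (datetime(2024, 1, 10), "oil sample"),
        (datetime(2024, 3, 2), "bushing replaced"),
    ],
}

# Hypothetical SCADA readings (time-series side of the join).
readings = [
    {"asset_id": "TX-101", "ts": datetime(2024, 2, 1, 8, 0), "oil_temp_c": 71.5},
    {"asset_id": "TX-101", "ts": datetime(2024, 3, 5, 8, 0), "oil_temp_c": 69.0},
    {"asset_id": "TX-999", "ts": datetime(2024, 3, 5, 8, 0), "oil_temp_c": 64.2},
]


def enrich(reading, orders_by_asset):
    """Attach the latest work order completed at or before the reading time."""
    orders = orders_by_asset.get(reading["asset_id"], [])
    times = [completed_at for completed_at, _ in orders]
    pos = bisect_right(times, reading["ts"])  # orders[:pos] are not in the future
    last = orders[pos - 1] if pos else None
    return {
        **reading,
        "last_maintenance": last[1] if last else None,
        "days_since_maintenance": (reading["ts"] - last[0]).days if last else None,
    }


enriched = [enrich(r, work_orders) for r in readings]
for row in enriched:
    print(row["asset_id"], row["last_maintenance"], row["days_since_maintenance"])
```

Readings for assets with no maintenance history keep `None` in the context fields rather than being dropped, so the gap stays visible downstream.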

How IOblend Turns SCADA Data Into Operational Foresight

This is where IOblend changes the economics of reliability analytics. Most energy data projects do not fail because the analytics models are weak. They fail because the data arrives late, fragmented, poorly governed, or trapped inside legacy SCADA and operational systems.

IOblend removes that bottleneck by turning complex data engineering into reusable, controlled pipeline logic. Instead of spending months hand-coding fragile ETL jobs for every new asset, site, protocol, or system, teams can build governed, repeatable data flows that move SCADA telemetry, asset records, maintenance data, and operational events into analytics-ready environments faster.

- Real-time streams, not stale snapshots

IOblend supports real-time and event-driven ingestion, including CDC and streaming patterns, so reliability models can work from current operational signals rather than yesterday’s batch exports. That matters when the difference between prevention and failure is measured in minutes, not reporting cycles.

- SCADA plus context, not SCADA in isolation

Raw sensor data alone rarely explains asset risk. IOblend helps combine high-frequency SCADA streams with enterprise data from EAM, ERP, maintenance logs, inspections, and operational systems, giving reliability teams the full context behind performance degradation, recurring faults, and early warning patterns.

- Governed pipelines by design

Critical infrastructure data needs control, traceability, and security from the start. IOblend brings governance into the pipeline layer with validation, lineage, exception handling, and privacy-aware controls, so teams can scale analytics without creating a shadow estate of unmanaged scripts and risky data movement.

- Built for change, not brittle integrations

Energy environments change constantly: new assets, new schemas, new sensors, new reporting requirements, and new analytics platforms. IOblend’s metadata-driven architecture makes pipeline logic reusable and easier to adapt, reducing the cost of change compared with hard-coded integrations.

- Flexible across modern data platforms

Whether the target is Spark, Snowflake, Databricks, a lakehouse, warehouse, or operational analytics layer, IOblend provides a controlled framework for building and executing pipelines without locking teams into one rigid architecture.
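As a generic illustration of what validation, lineage, and exception handling can look like inside a pipeline step, consider the sketch below. It does not use or represent IOblend's API. The rule table, field names, source label, and function names are all hypothetical and chosen only for this example.

```python
from datetime import datetime, timezone

# Hypothetical validation rules: field -> (min, max) physically plausible range.
RULES = {"winding_temp_c": (-40.0, 200.0), "voltage_kv": (0.0, 800.0)}


def validate(record, rules=RULES):
    """Return a list of rule violations; an empty list means the record is valid."""
    problems = []
    for field, (low, high) in rules.items():
        value = record.get(field)
        if value is None:
            problems.append(f"{field}: missing")
        elif not low <= value <= high:
            problems.append(f"{field}: {value} outside [{low}, {high}]")
    return problems


def run_step(records, source, step="range_check"):
    """Split records into accepted and quarantined, tagging both with lineage."""
    accepted, quarantined = [], []
    for record in records:
        lineage = {
            "source": source,
            "step": step,
            "processed_at": datetime.now(timezone.utc).isoformat(),
        }
        problems = validate(record)
        if problems:
            # Exceptions are kept with their reasons instead of silently dropped.
            quarantined.append({**record, "_lineage": lineage, "_errors": problems})
        else:
            accepted.append({**record, "_lineage": lineage})
    return accepted, quarantined


batch = [
    {"asset_id": "TX-101", "winding_temp_c": 82.0, "voltage_kv": 132.0},
    {"asset_id": "TX-102", "winding_temp_c": 950.0, "voltage_kv": 132.0},
    {"asset_id": "TX-103", "winding_temp_c": 79.5},
]
accepted, quarantined = run_step(batch, source="scada/substation-a")
print(len(accepted), "accepted;", len(quarantined), "quarantined")
```

The design point is that bad records are routed to a quarantine set with an explicit reason and the same lineage tags as good records, so every row can be traced back to its source and processing step.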

The result is a faster path from SCADA visibility to reliability intelligence: fewer brittle pipelines, less manual engineering, stronger governance, and analytics teams that can focus on preventing failures instead of constantly repairing the data layer.

Ready to stop managing pipelines and start mastering reliability? Supercharge your energy data strategy with IOblend.

Continuous Data Replication for DR and Continuity

Continuous Data Replication: for Business Continuity and DR 📝 Did you know? According to industry studies, the average cost of IT downtime is approximately £4,500 per minute. For a large enterprise, a single hour of data loss or system unavailability can translate into millions in lost revenue, legal penalties, and irreparable brand damage. The Pulse of

Smart Meter Data: Billing to Forecasting

Utilities: Smart Meter Data to Billing and Demand Forecasting 📋 Did You Know? The global roll-out of smart meters generates more data in a single day than most utility companies used to collect in an entire decade. While traditional meters were read once a month, or even once a quarter, smart meters transmit data at intervals

SCADA Streams to Reliability Analytics

Energy: SCADA Streams to Reliability Analytics 🔌 Did you know? The average modern wind turbine or smart substation generates roughly 1 to 2 terabytes of data every month. However, historically, less than 5% of that sensor data was actually used for decision-making. Most of it was simply discarded or “siloed” in SCADA systems, serving as a

Building Live ETA Pipelines for Fleet Operations

Logistics: Live ETA Prediction Pipelines from Fleet + Orders 🚚 Did you know? The “Last Mile” is famously the most expensive and inefficient part of the supply chain, often accounting for up to 53% of total shipping costs. The Evolution of Real-Time Logistics Live ETA (Estimated Time of Arrival) prediction pipelines represent the shift from reactive

DB2 CDC to Lakehouse Without Re-Platforming

From DB2 to Lakehouse: Real-Time CDC Without Re-Platforming 💻 Did you know? Mainframe systems like DB2 still process approximately 30 billion business transactions every single day. Despite the rush toward modern cloud architectures, the world’s most critical financial and logistical data often resides in these “legacy” environments, making them the silent engines of the global economy.

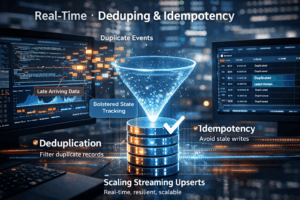

Real-Time Upserts: Deduping and Idempotency

Streaming Upserts Done Right: Deduping and Idempotency at Scale 💻 Did you know? In many high-velocity streaming environments, the “same” event can be sent or processed multiple times due to network retries or distributed system failures. The Art of the Upsert At its core, a streaming upsert (a portmanteau of “update” and “insert”) is the process of synchronising incoming data with an existing