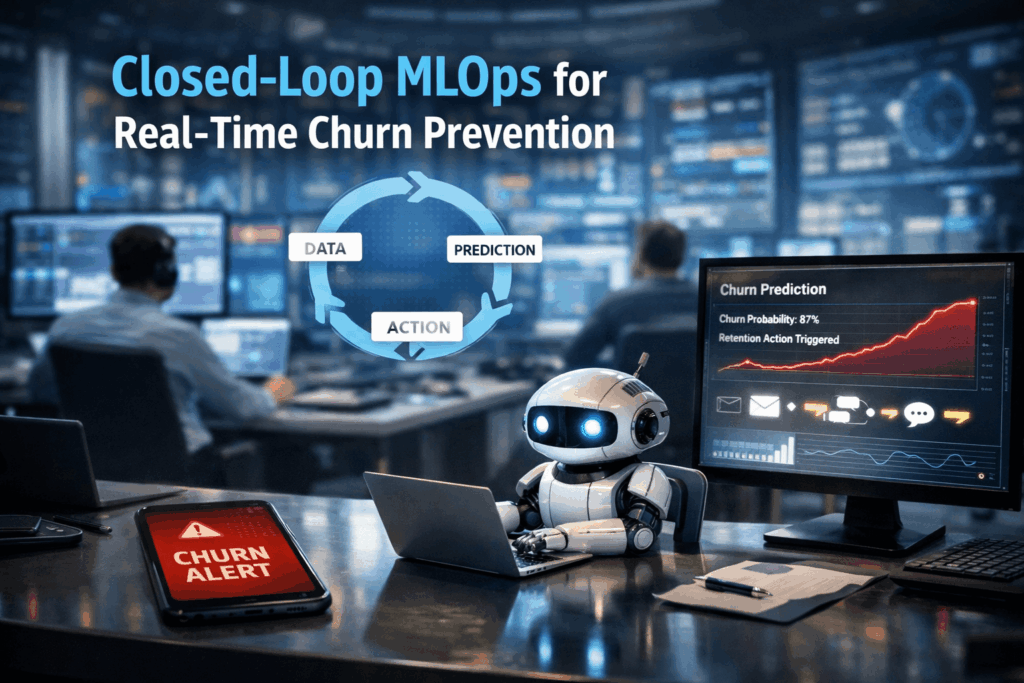

Churn Prevention: Building “closed-loop” MLOps systems that predict churn and trigger automated retention agents

🔗 Did you know? In the telecommunications and subscription-based sectors, a mere 5% increase in customer retention can lead to a staggering profit surge of more than 25%.

Closed-Loop MLOps

A “closed-loop” MLOps system is an architectural pattern that goes beyond simple predictive analytics. While a standard machine learning model might output a list of high-risk customers for a weekly review, a closed-loop system functions as an autonomous nervous system. It continuously ingests real-time data, calculates “fresh” behavioral features, generates churn probabilities, and, crucially, triggers automated “retention agents” or downstream APIs to intervene instantly. It is the bridge between knowing a customer might leave and doing something about it before they do.
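The loop described above (ingest, update features, score, act) can be sketched in a few lines of plain Python. This is a minimal illustration and not IOblend's implementation: the event shape, the `CustomerState` fields, the hand-set logistic weights, and the `trigger` callback are all hypothetical stand-ins for a real stream, a trained model, and a retention agent.

```python
import math
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, List, Set, Tuple


@dataclass
class CustomerState:
    """Per-customer behavioral features kept fresh by the loop (hypothetical)."""
    logins: int = 0
    failed_payments: int = 0


def update_features(state: CustomerState, event: Dict[str, str]) -> None:
    """Fold one incoming event into the customer's feature state."""
    if event["type"] == "login":
        state.logins += 1
    elif event["type"] == "payment_failed":
        state.failed_payments += 1


def churn_probability(state: CustomerState) -> float:
    """Stand-in for a trained model: a logistic score with made-up weights."""
    z = -1.0 - 0.4 * state.logins + 1.5 * state.failed_payments
    return 1.0 / (1.0 + math.exp(-z))


def run_loop(
    events: Iterable[Dict[str, str]],
    trigger: Callable[[str, float], None],
    threshold: float = 0.5,
) -> None:
    """Ingest events, refresh features, score, and act within the same pass."""
    states: Dict[str, CustomerState] = {}
    contacted: Set[str] = set()  # avoid firing the agent repeatedly per customer
    for event in events:
        cid = event["customer_id"]
        state = states.setdefault(cid, CustomerState())
        update_features(state, event)
        p = churn_probability(state)
        if p >= threshold and cid not in contacted:
            trigger(cid, p)  # e.g. call a retention agent or downstream API
            contacted.add(cid)


if __name__ == "__main__":
    fired: List[Tuple[str, float]] = []
    stream = [
        {"customer_id": "c1", "type": "login"},
        {"customer_id": "c2", "type": "payment_failed"},
    ]
    run_loop(stream, lambda cid, p: fired.append((cid, p)))
    print(fired)
```

The point of the sketch is the shape of the loop: scoring and intervention happen as each event arrives, rather than in a later batch review.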

The Persistence of Churn Latency

Modern businesses are drowning in data but starving for timely action. The primary issue is data latency: the gap between a customer showing signs of dissatisfaction (such as decreased app login frequency or failed payment attempts) and the business responding. Traditional batch-processed pipelines often take 24–48 hours to refresh, by which time a competitor’s “welcome” email has already been opened.

Furthermore, engineering these systems often requires a fragmented tech stack: separate tools for ingestion, feature stores for serving, and complex custom code to trigger actions. This fragmentation leads to “training-serving skew,” where the logic used to train the model doesn’t match the live data, resulting in inaccurate predictions and wasted retention spend on the wrong customers.
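One common way to limit training-serving skew is to route both the training path and the live path through a single feature function. The sketch below illustrates that idea only; the field names (`logins_last_7d`, `failed_payments_last_30d`, `tenure_days`, `churned`) are assumptions chosen for the example.

```python
from typing import Dict, List, Tuple


def build_features(raw: Dict[str, float]) -> List[float]:
    """Single source of truth for feature logic, used offline and online."""
    logins = raw.get("logins_last_7d", 0)
    failed = raw.get("failed_payments_last_30d", 0)
    tenure_days = max(raw.get("tenure_days", 0), 1)  # guard against divide-by-zero
    return [float(logins), float(failed), logins / tenure_days]


def training_rows(history: List[Dict[str, float]]) -> List[Tuple[List[float], float]]:
    """Offline path: build (features, label) pairs from historical records."""
    return [(build_features(r), r["churned"]) for r in history]


def serving_vector(live_event: Dict[str, float]) -> List[float]:
    """Online path: build the model input for one live record."""
    return build_features(live_event)
```

Because both paths call `build_features`, a change to the feature logic cannot reach training without also reaching serving.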

How IOblend Solves the Loop

IOblend eliminates the friction of building these complex systems by providing a unified, production-grade DataOps and MLOps environment.

Real-Time Feature Engineering: IOblend acts as a “Feature Store without the Store.” It embeds feature engineering directly into your pipelines, delivering sub-second feature freshness (measured at P99 latency) without requiring separate infrastructure like Redis or Feast.

From Inference to Action: Beyond just serving features, IOblend allows you to capture model outputs and immediately generate AI agents or trigger automated actions.

Kappa Architecture at Scale: By utilizing a streaming-first Spark engine, IOblend handles over 1 million transactions per second. This allows you to monitor millions of customers simultaneously, ensuring no “silent” churn signal goes unnoticed.

Eliminating Tool Sprawl: With its low-code Designer and automated governance, IOblend replaces the need for disparate ETL tools, feature registries, and monitoring suites, keeping your entire retention loop inside your own secure environment.
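To make the “Feature Store without the Store” idea from the list above concrete, here is a minimal sketch of a sliding-window feature computed inside the stream processor itself, with no external store. It is a generic illustration in plain Python and not IOblend's engine; the `RollingLoginCount` class is hypothetical and assumes timestamps arrive in non-decreasing order per customer.

```python
from collections import defaultdict, deque
from typing import Deque, Dict


class RollingLoginCount:
    """Count of logins per customer within a trailing time window."""

    def __init__(self, window_seconds: float) -> None:
        self.window_seconds = window_seconds
        self._events: Dict[str, Deque[float]] = defaultdict(deque)

    def observe(self, customer_id: str, ts: float) -> int:
        """Record a login at time ts and return the refreshed feature value."""
        q = self._events[customer_id]
        q.append(ts)
        self._evict(q, ts)
        return len(q)

    def value(self, customer_id: str, now: float) -> int:
        """Read the feature at time `now` without recording a new event."""
        q = self._events[customer_id]
        self._evict(q, now)
        return len(q)

    def _evict(self, q: Deque[float], now: float) -> None:
        # Drop timestamps that have aged out of the trailing window.
        while q and q[0] <= now - self.window_seconds:
            q.popleft()
```

A falling value from `value()` for an otherwise active customer is the kind of “silent” signal a churn model could consume as soon as it changes.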

Close the gap on customer loss and accelerate your retention intelligence with IOblend.

IOblend presents a ground-breaking approach to IoT and data integration, revolutionizing the way businesses handle their data. It’s an all-in-one data integration accelerator, boasting real-time, production-grade, managed Apache Spark™ data pipelines that can be set up in mere minutes. This facilitates a massive acceleration in data migration projects, whether from on-prem to cloud or between clouds, thanks to its low code/no code development and automated data management and governance.

IOblend also simplifies the integration of streaming and batch data through Kappa architecture, significantly boosting the efficiency of operational analytics and MLOps. Its system enables the robust and cost-effective delivery of both centralized and federated data architectures, with low latency and massively parallelized data processing, capable of handling over 10 million transactions per second. Additionally, IOblend integrates seamlessly with leading cloud services like Snowflake and Microsoft Azure, underscoring its versatility and broad applicability in various data environments.

At its core, IOblend is an end-to-end enterprise data integration solution built with DataOps capability. It stands out as a versatile ETL product for building and managing data estates with high-grade data flows. The platform powers operational analytics and AI initiatives, drastically reducing the costs and development efforts associated with data projects and data science ventures. It’s engineered to connect to any source, perform in-memory transformations of streaming and batch data, and direct the results to any destination with minimal effort.

IOblend’s use cases are diverse and impactful. It streams live data from factories to automated forecasting models and channels data from IoT sensors to real-time monitoring applications, enabling automated decision-making based on live inputs and historical statistics. Additionally, it handles the movement of production-grade streaming and batch data to and from cloud data warehouses and lakes, powers data exchanges, and feeds applications with data that adheres to complex business rules and governance policies.

The platform comprises two core components: the IOblend Designer and the IOblend Engine. The IOblend Designer is a desktop GUI used for designing, building, and testing data pipeline DAGs, producing metadata that describes the data pipelines. The IOblend Engine, the heart of the system, converts this metadata into Spark streaming jobs executed on any Spark cluster. Available in Developer and Enterprise suites, IOblend supports both local and remote engine operations, catering to a wide range of development and operational needs. It also facilitates collaborative development and pipeline versioning, making it a robust tool for modern data management and analytics.
