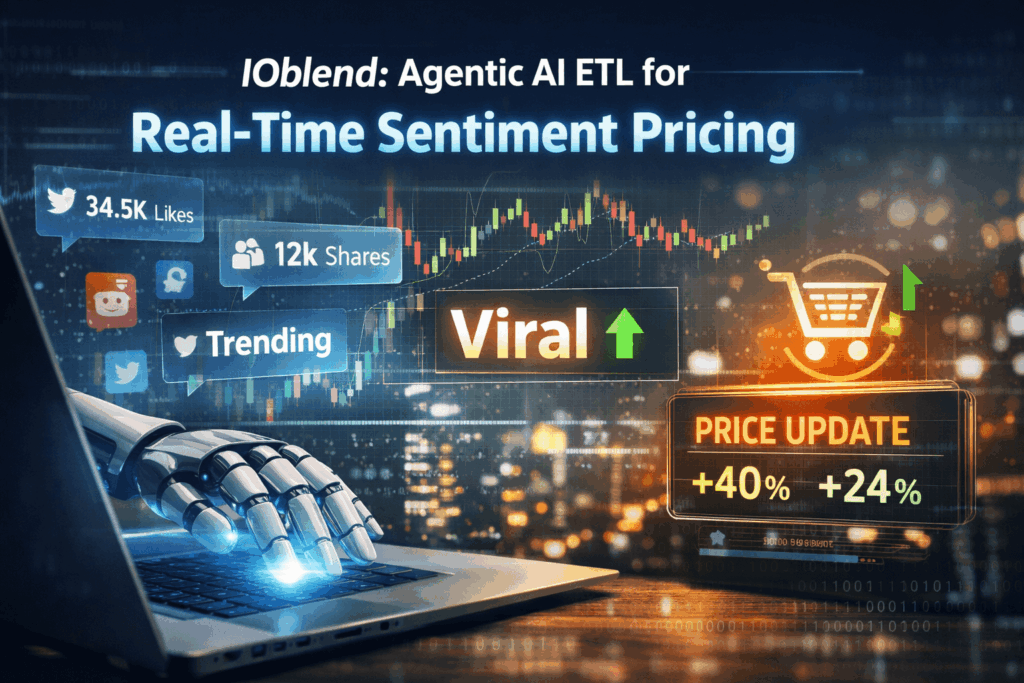

Sentiment-Driven Pricing: Using Agentic AI ETL to scrape social sentiment and adjust prices dynamically within the data flow

🤖 Did you know? A single viral tweet or a trending TikTok “dupe” video can alter the perceived value of a product by over 40% in less than six hours. Traditional pricing engines, which rely on historical sales data, often take days to catch up, costing retailers millions in missed margins or lost volume during the critical “hype window”.

The Concept: Sentiment-Driven Pricing

Sentiment-driven pricing is the evolution of dynamic pricing models. Traditionally, prices fluctuate based on inventory levels or competitor benchmarks. However, by integrating Agentic AI into the ETL (Extract, Transform, Load) process, businesses can ingest unstructured social data (tweets, Reddit threads, or TikTok trends) and treat “public mood” as a primary data variable. The AI agents don’t just move data; they interpret the emotional intensity and urgency of the market, adjusting price points autonomously within the data pipeline.
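To make the idea concrete, here is a minimal Python sketch of a pricing step that treats sentiment as an input variable. It is illustrative only: the `SentimentSignal` fields, the score scale of -1 to 1, and the 15% `max_swing` cap are all assumptions, not part of any specific product or published pricing model.

```python
from dataclasses import dataclass


@dataclass
class SentimentSignal:
    score: float      # mean sentiment in [-1.0, 1.0] (assumed scale)
    intensity: float  # share of posts showing urgency, in [0.0, 1.0] (assumed)


def adjust_price(base_price: float, signal: SentimentSignal,
                 max_swing: float = 0.15) -> float:
    """Scale the base price by sentiment, capped at +/- max_swing."""
    # Clamp inputs so a malformed upstream record cannot distort the price.
    score = max(-1.0, min(1.0, signal.score))
    intensity = max(0.0, min(1.0, signal.intensity))
    # Positive, intense sentiment raises the price; negative lowers it.
    factor = 1.0 + max_swing * score * intensity
    return round(base_price * factor, 2)
```

Capping the swing is a deliberate design choice in this sketch: it keeps an autonomous step from moving prices further than the business has agreed to tolerate.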

The Friction: Why Static Models Fail

Data experts know the pain of “stale insights.” Most businesses operate on a lag; by the time social sentiment is scraped, cleaned, and visualised in a BI dashboard for a human to review, the market opportunity has often evaporated.

Key issues include:

- Latency: Traditional ETL batches are too slow for the velocity of social media.

- Contextual Blindness: Standard scripts struggle to distinguish between a “viral joke” and genuine “buying intent.”

- Pipeline Complexity: Maintaining separate flows for structured sales data and unstructured social sentiment creates a fragmented view of the truth.

- Manual Bottlenecks: Human-in-the-loop price adjustments cannot keep pace with 24/7 global digital discourse.
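The latency point can be illustrated with a small sketch. Instead of waiting for a nightly batch, a pipeline can keep a rolling average of sentiment over a short time window and update it as each post arrives. The class below is a hypothetical example that assumes posts arrive in timestamp order and already carry a numeric score; the 15-minute default window is arbitrary.

```python
from collections import deque


class RollingSentiment:
    """Mean sentiment over the last `window_s` seconds of posts."""

    def __init__(self, window_s: float = 900.0):
        self.window_s = window_s
        self._events = deque()  # (timestamp, score) pairs, oldest first
        self._total = 0.0

    def add(self, ts: float, score: float) -> None:
        """Record one scored post and drop anything older than the window."""
        self._events.append((ts, score))
        self._total += score
        self._evict(ts)

    def _evict(self, now: float) -> None:
        # Remove events that have aged out of the window.
        while self._events and self._events[0][0] <= now - self.window_s:
            _, old_score = self._events.popleft()
            self._total -= old_score

    def mean(self) -> float:
        """Current windowed mean, or 0.0 when the window is empty."""
        return self._total / len(self._events) if self._events else 0.0
```

Each update costs a constant amount of work on average, so the signal stays current without reprocessing the full history.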

The IOblend Solution: Data Engineering at the Speed of Thought

This is where IOblend redefines the architecture. IOblend moves away from sluggish, rigid ETL to a fluid, metadata-driven approach that is perfect for Agentic AI workflows.

IOblend solves the sentiment-pricing gap by:

- Unified Processing: It seamlessly blends unstructured social feeds with structured SQL databases, allowing sentiment scores to act as immediate triggers for pricing logic.

- Real-time Velocity: With IOblend, “data at rest” is a thing of the past; its engine is designed for the high-frequency demands of dynamic pricing.

- No-Code Agility: Data experts can deploy complex logic without writing thousands of lines of brittle code, making the integration of AI agents into the flow remarkably simple.

- Cost Efficiency: By optimising how data is transformed, IOblend ensures that scraping massive social datasets doesn’t result in a prohibitive cloud bill.
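As a rough illustration of the unified-processing idea, the sketch below joins sentiment scores to a structured product table and uses the average score as a pricing trigger. It uses Python's built-in `sqlite3` module purely as a stand-in; it is not IOblend's API, and the table names, the `THRESHOLD` and the `UPLIFT` values are hypothetical.

```python
import sqlite3

# In-memory database standing in for a structured sales store.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE products (sku TEXT PRIMARY KEY, base_price REAL);
    CREATE TABLE sentiment (sku TEXT, score REAL);
    INSERT INTO products VALUES ('SKU-1', 40.0), ('SKU-2', 25.0);
    INSERT INTO sentiment VALUES ('SKU-1', 0.9), ('SKU-1', 0.7), ('SKU-2', 0.1);
""")

THRESHOLD = 0.5  # hypothetical average score that triggers a price change
UPLIFT = 0.10    # hypothetical uplift applied when the trigger fires

# Blend the two sources: one average sentiment score per product.
rows = conn.execute("""
    SELECT p.sku, p.base_price, AVG(s.score) AS avg_score
    FROM products p JOIN sentiment s ON s.sku = p.sku
    GROUP BY p.sku, p.base_price
    ORDER BY p.sku
""").fetchall()

# Apply the uplift only where sentiment clears the threshold.
new_prices = {
    sku: round(price * (1 + UPLIFT), 2) if avg > THRESHOLD else price
    for sku, price, avg in rows
}
```

With the sample rows above, only `SKU-1` clears the threshold, so its price is raised while `SKU-2` keeps its base price.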

Stop chasing trends and start pricing ahead of them. Supercharge your data agility with IOblend.
