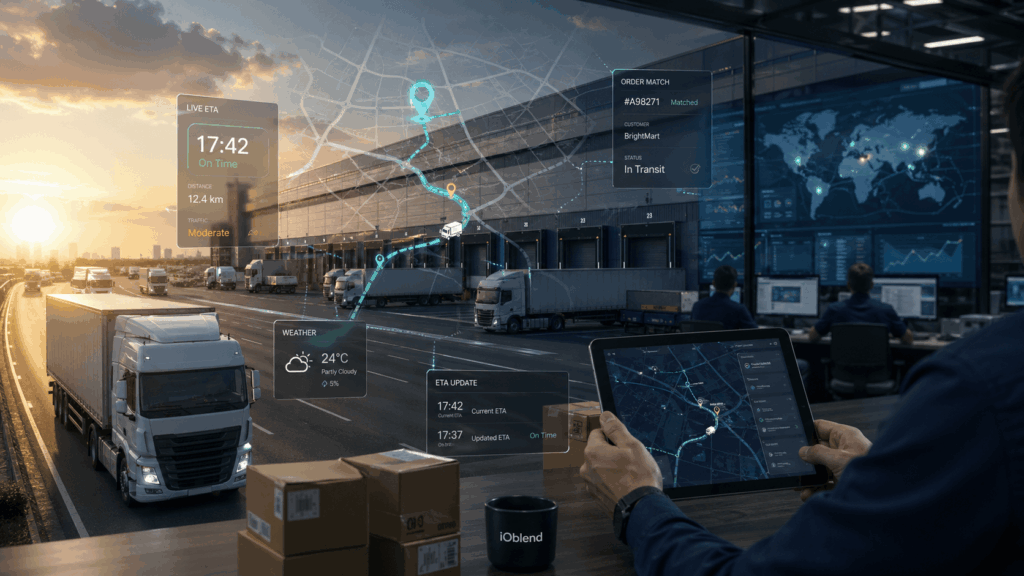

Logistics: Live ETA Prediction Pipelines from Fleet + Orders

🚚 Did you know? The “Last Mile” is famously the most expensive and inefficient part of the supply chain, accounting for as much as 53% of total shipping costs.

The Evolution of Real-Time Logistics

Live ETA (Estimated Time of Arrival) prediction pipelines represent the shift from reactive tracking to proactive orchestration. By fusing high-frequency telemetry data from vehicle fleets, such as GPS coordinates, engine diagnostics, and fuel consumption, with transactional order data and external variables like live traffic and weather, firms can create a dynamic digital twin of their entire logistics network. For data experts, this isn’t just about a timestamp; it’s about a continuous stream of state updates that allow for millisecond-level recalculations of delivery windows.
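To make "recalculating on every state update" concrete, here is a deliberately naive sketch that recomputes delivery ETAs for the orders on a truck each time a telemetry ping arrives. The `Telemetry` and `Order` classes and their field names are hypothetical, and the estimate assumes straight-line (haversine) distance at the truck's current speed; a production model would add road routing, traffic, and weather.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from math import asin, cos, radians, sin, sqrt


@dataclass
class Telemetry:  # hypothetical shape of one fleet ping
    truck_id: str
    ts: datetime
    lat: float
    lon: float
    speed_kmh: float


@dataclass
class Order:  # hypothetical shape of one order record
    order_id: str
    truck_id: str
    dest_lat: float
    dest_lon: float


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points, in kilometres."""
    dlat, dlon = radians(lat2 - lat1), radians(lon2 - lon1)
    a = sin(dlat / 2) ** 2 + cos(radians(lat1)) * cos(radians(lat2)) * sin(dlon / 2) ** 2
    return 2 * 6371.0 * asin(sqrt(a))


def recalc_etas(update, orders, min_speed_kmh=5.0):
    """Return {order_id: eta} for every order on the truck that sent `update`."""
    # Floor the speed so a stopped truck does not cause a division by zero.
    speed = max(update.speed_kmh, min_speed_kmh)
    etas = {}
    for order in orders:
        if order.truck_id != update.truck_id:
            continue  # this ping says nothing about other trucks' orders
        km = haversine_km(update.lat, update.lon, order.dest_lat, order.dest_lon)
        etas[order.order_id] = update.ts + timedelta(hours=km / speed)
    return etas
```

Calling `recalc_etas` once per incoming ping is the simplest form of the continuous state update described above: each new position replaces the previous estimate for every affected order.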

The Friction in the Pipeline

Building these systems is notoriously difficult due to the “velocity-variety” trap. Logistics data is inherently messy. Fleet telemetry often arrives via asynchronous MQTT streams, while order data might sit in a legacy SQL database or a modern ERP.

Common hurdles include:

- Schema Drift: When a telematics provider updates their sensor payload without notice, downstream prediction models often break silently.

- Late-Arriving Data: Handling out-of-order events from drivers moving through “dead zones” requires complex watermarking and state management.

- Feature Engineering at Scale: Calculating a “rolling average speed over the last 10 minutes” for 10,000 trucks simultaneously creates immense computational overhead.

- The Integration Gap: Most businesses struggle to join the in-flight stream of a truck with the static metadata of the 500 parcels inside it, leading to “stale” predictions that frustrate end customers.
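Two of the hurdles above, late-arriving data and rolling features, can be sketched together in a few lines of plain Python. This is an illustrative single-process sketch, not how any particular streaming engine implements it: the `RollingSpeed` class, the per-truck watermark, and the 2-minute allowed lateness are all assumptions chosen for the example.

```python
from bisect import insort
from collections import defaultdict
from datetime import datetime, timedelta

WINDOW = timedelta(minutes=10)           # "rolling average over the last 10 minutes"
ALLOWED_LATENESS = timedelta(minutes=2)  # assumed tolerance for out-of-order events


class RollingSpeed:
    """Event-time rolling average speed per truck, with a simple watermark."""

    def __init__(self):
        self.readings = defaultdict(list)  # truck_id -> [(ts, speed)] sorted by ts
        self.latest = {}                   # truck_id -> newest event time seen

    def add(self, truck_id: str, ts: datetime, speed_kmh: float):
        """Ingest one reading; return the rolling average, or None if it was too late."""
        latest = max(self.latest.get(truck_id, ts), ts)
        # Watermark: anything older than (newest event - allowed lateness) is dropped.
        if ts < latest - ALLOWED_LATENESS:
            return None
        self.latest[truck_id] = latest
        buf = self.readings[truck_id]
        insort(buf, (ts, speed_kmh))  # keep readings in event-time order
        # Evict readings that have fallen out of the 10-minute window.
        while buf and buf[0][0] <= latest - WINDOW:
            buf.pop(0)
        return sum(speed for _, speed in buf) / len(buf)
```

A reading that arrives one minute out of order is still folded into the average, while one that arrives four minutes behind the newest event is rejected. At fleet scale the same logic has to be partitioned by truck and run on a streaming engine with persistent state, which is where the computational overhead comes from.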

Synchronising the Stream with IOblend

This is where IOblend transforms the architectural approach. Rather than duct-taping disparate tools together, IOblend provides a unified environment to build robust DataOps pipelines that handle the rigours of live logistics.

IOblend’s platform excels at managing the complexity of real-time ETA engines:

- Unified Streaming & Batch: It seamlessly blends high-speed fleet telemetry with heavy-duty order history, ensuring your models always have the full context.

- Late-Arriving Data: IOblend handles late-arriving data automatically through metadata-driven rules for event time, watermarks, deduplication, controlled upserts, and selective reprocessing.

- Automated Schema Evolution: IOblend detects and manages changes in data structures automatically, preventing the pipeline failures that typically plague IoT-heavy sectors.

- Record-Level Lineage: In logistics, knowing why a prediction was wrong is as vital as the prediction itself. IOblend provides granular visibility into every data point’s journey.

- Resilient Data Engineering: By simplifying the deployment of complex transformations, IOblend allows data teams to focus on refining their ML models rather than managing infrastructure.
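For readers who want a feel for what schema-drift detection involves, here is a minimal sketch in plain Python. It is not IOblend's API or implementation; the `EXPECTED` schema, the field names, and the `detect_drift` helper are hypothetical, and a real pipeline would also decide how to evolve the schema rather than just report the difference.

```python
# Hypothetical expected shape of a telemetry payload: field name -> accepted type(s).
EXPECTED = {
    "truck_id": str,
    "ts": str,
    "lat": (int, float),
    "lon": (int, float),
    "speed_kmh": (int, float),
}


def detect_drift(payload, expected=EXPECTED):
    """Compare one incoming payload with the expected schema and report differences."""
    missing = sorted(k for k in expected if k not in payload)
    added = sorted(k for k in payload if k not in expected)
    retyped = sorted(
        k for k, types in expected.items()
        if k in payload and not isinstance(payload[k], types)
    )
    return {"missing": missing, "added": added, "retyped": retyped}
```

If a provider renames `speed_kmh` to `speed_mph` and starts sending `lat` as a string, the report lists `speed_kmh` as missing, `speed_mph` as added, and `lat` as retyped, so the change is surfaced instead of silently breaking a downstream model.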

Stop chasing the clock and start commanding your data. Deliver certainty at scale with IOblend.
