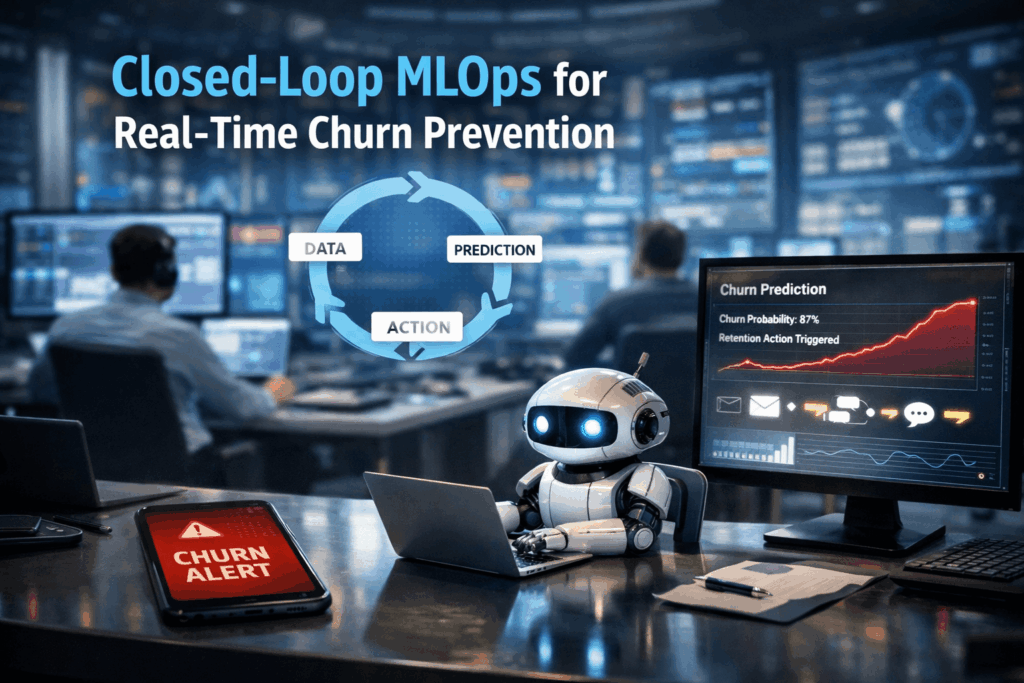

Churn Prevention: Building “closed-loop” MLOps systems that predict churn and trigger automated retention agents

🔗 Did you know? In the telecommunications and subscription-based sectors, a mere 5% increase in customer retention can lead to a staggering profit surge of more than 25%.

Closed-Loop MLOps

A “closed-loop” MLOps system is an architectural pattern that goes beyond simple predictive analytics. While a standard machine learning model might output a list of high-risk customers for a weekly review, a closed-loop system functions as an autonomous nervous system. It continuously ingests real-time data, calculates “fresh” behavioral features, generates churn probabilities and, crucially, triggers automated “retention agents” or downstream APIs to intervene immediately. It is the bridge between knowing a customer might leave and doing something about it before they do.
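The four stages of the loop (ingest, compute features, score, act) can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not IOblend's implementation: the `Event` fields, the hand-picked logistic weights standing in for a trained model, and the 0.7 threshold are all hypothetical.

```python
from dataclasses import dataclass
from math import exp
from typing import Callable, Iterable


@dataclass
class Event:
    # Hypothetical behavioural signals carried by each incoming event.
    customer_id: str
    logins_last_7d: int
    failed_payments_30d: int


def compute_features(event: Event) -> dict[str, float]:
    # "Fresh" features derived from the latest event.
    return {
        "logins_last_7d": float(event.logins_last_7d),
        "failed_payments_30d": float(event.failed_payments_30d),
    }


def churn_probability(features: dict[str, float]) -> float:
    # Stand-in for a trained model: a logistic function with made-up weights.
    z = 1.0 - 0.5 * features["logins_last_7d"] + 1.2 * features["failed_payments_30d"]
    return 1.0 / (1.0 + exp(-z))


def run_loop(
    events: Iterable[Event],
    act: Callable[[str, float], None],
    threshold: float = 0.7,
) -> None:
    # Closing the loop: every event is scored, and high scores trigger an action.
    for event in events:
        score = churn_probability(compute_features(event))
        if score >= threshold:
            act(event.customer_id, score)
```

In a real deployment `act` would call a retention agent or downstream API. Here it is simply whatever callable the caller passes in, which also makes the loop easy to test.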

The Persistence of Churn Latency

Modern businesses are drowning in data but starving for timely action. The primary issue is data latency: the gap between a customer showing signs of dissatisfaction (such as decreased app login frequency or failed payment attempts) and the business responding. Traditional batch-processed pipelines often take 24–48 hours to refresh, by which time a competitor’s “welcome” email has already been opened.

Furthermore, engineering these systems often requires a fragmented tech stack: separate tools for ingestion, feature stores for serving, and complex custom code to trigger actions. This fragmentation leads to “training-serving skew,” where the feature logic used to train the model differs from the logic applied to live data at serving time, resulting in inaccurate predictions and retention spend wasted on the wrong customers.
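One common way to avoid training-serving skew is to define each feature once and call that single definition from both the offline and online paths. The sketch below assumes a hypothetical `logins_last_7d` feature and two illustrative row builders; the names are not taken from any particular product.

```python
from datetime import datetime, timedelta


def logins_last_7d(login_times: list[datetime], as_of: datetime) -> int:
    """Count logins in the 7 days up to and including `as_of`."""
    window_start = as_of - timedelta(days=7)
    return sum(1 for t in login_times if window_start < t <= as_of)


def build_training_row(login_times: list[datetime], label_date: datetime, churned: bool) -> dict:
    # Offline path: the feature is computed as of the historical label date,
    # so logins after that date cannot leak into the training set.
    return {"logins_last_7d": logins_last_7d(login_times, label_date), "churned": churned}


def build_serving_row(login_times: list[datetime], now: datetime) -> dict:
    # Online path: the same function, evaluated at request time.
    return {"logins_last_7d": logins_last_7d(login_times, now)}
```

Because both builders delegate to the same function, a change to the window length or boundary rule cannot reach one path without also reaching the other.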

How IOblend Solves the Loop

IOblend eliminates the friction of building these complex systems by providing a unified, production-grade DataOps and MLOps environment.

Real-Time Feature Engineering: IOblend acts as a “Feature Store without the Store.” It embeds feature engineering directly into your pipelines, allowing for sub-second feature freshness at P99 latency without requiring separate infrastructure like Redis or Feast.
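To make the idea of in-pipeline feature engineering concrete, here is a minimal sketch of a sliding-window feature kept in the pipeline's own memory and updated on every event. It is a plain-Python stand-in rather than IOblend's API; the class name and the assumption that timestamps arrive in order per customer are ours.

```python
from collections import defaultdict, deque


class SlidingWindowCounter:
    """Per-customer count of events seen within the last `window_seconds`."""

    def __init__(self, window_seconds: float) -> None:
        self.window_seconds = window_seconds
        self._events: dict[str, deque] = defaultdict(deque)

    def update(self, customer_id: str, timestamp: float) -> int:
        # Assumes timestamps arrive in non-decreasing order for each customer.
        window = self._events[customer_id]
        window.append(timestamp)
        cutoff = timestamp - self.window_seconds
        # Evict events that have aged out of the window.
        while window and window[0] <= cutoff:
            window.popleft()
        return len(window)
```

Each call to `update` returns the feature value as of that event, so the model always scores against a count that is as fresh as the event that triggered it.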

From Inference to Action: Beyond just serving features, IOblend allows you to capture model outputs and immediately generate AI agents or trigger automated actions.
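The step from a model score to an intervention is usually a small, explicit policy. The following sketch assumes hypothetical action names, thresholds, and a `monthly_value` field; a real system would replace the handlers with calls to its own retention agents or APIs.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Optional


@dataclass(frozen=True)
class Prediction:
    customer_id: str
    churn_probability: float
    monthly_value: float


def choose_action(p: Prediction) -> Optional[str]:
    # Thresholds and action names are illustrative assumptions.
    if p.churn_probability >= 0.8 and p.monthly_value >= 50:
        return "call_from_retention_team"
    if p.churn_probability >= 0.8:
        return "discount_offer"
    if p.churn_probability >= 0.5:
        return "re_engagement_email"
    return None


def dispatch(
    predictions: Iterable[Prediction],
    handlers: dict[str, Callable[[Prediction], None]],
) -> list[tuple[str, str]]:
    """Route each prediction to its handler and return a (customer, action) log."""
    log = []
    for p in predictions:
        action = choose_action(p)
        if action is not None:
            handlers[action](p)
            log.append((p.customer_id, action))
    return log
```

Keeping the policy separate from the handlers means the thresholds can be tuned, and the resulting log audited, without touching the code that sends the offer.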

Kappa Architecture at Scale: By utilizing a streaming-first Spark engine, IOblend handles over 1 million transactions per second. This allows you to monitor millions of customers simultaneously, ensuring no “silent” churn signal goes unnoticed.
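The defining trait of a Kappa architecture is a single processing path: historical data is reprocessed by replaying the event log through the same code that handles live events. The sketch below shows that property in plain Python rather than Spark; the event shape and the `process` function are hypothetical.

```python
from typing import Iterable, Iterator


def process(events: Iterable[dict]) -> Iterator[dict]:
    """Single processing path: running failed-payment count per customer."""
    failed: dict[str, int] = {}
    for e in events:
        cid = e["customer_id"]
        if e["type"] == "payment_failed":
            failed[cid] = failed.get(cid, 0) + 1
        yield {"customer_id": cid, "failed_payments": failed.get(cid, 0)}


event_log = [
    {"customer_id": "c-1", "type": "payment_failed"},
    {"customer_id": "c-2", "type": "login"},
    {"customer_id": "c-1", "type": "payment_failed"},
]

# Backfill: replay the stored log through the processing function.
replayed = list(process(event_log))

# Live: the same function consumes events one at a time from an iterator.
live = list(process(iter(event_log)))
```

Because there is no separate batch code path, the replayed and live results agree by construction, which removes one source of drift between historical and real-time features.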

Eliminating Tool Sprawl: With its low-code Designer and automated governance, IOblend replaces the need for disparate ETL tools, feature registries, and monitoring suites, keeping your entire retention loop inside your own secure environment.

Close the gap on customer loss and accelerate your retention intelligence with IOblend.

IOblend presents a ground-breaking approach to IoT and data integration, revolutionizing the way businesses handle their data. It’s an all-in-one data integration accelerator, boasting real-time, production-grade, managed Apache Spark™ data pipelines that can be set up in mere minutes. This facilitates a massive acceleration in data migration projects, whether from on-prem to cloud or between clouds, thanks to its low code/no code development and automated data management and governance.

IOblend also simplifies the integration of streaming and batch data through Kappa architecture, significantly boosting the efficiency of operational analytics and MLOps. Its system enables the robust and cost-effective delivery of both centralized and federated data architectures, with low latency and massively parallelized data processing, capable of handling over 10 million transactions per second. Additionally, IOblend integrates seamlessly with leading cloud services like Snowflake and Microsoft Azure, underscoring its versatility and broad applicability in various data environments.

At its core, IOblend is an end-to-end enterprise data integration solution built with DataOps capability. It stands out as a versatile ETL product for building and managing data estates with high-grade data flows. The platform powers operational analytics and AI initiatives, drastically reducing the costs and development efforts associated with data projects and data science ventures. It’s engineered to connect to any source, perform in-memory transformations of streaming and batch data, and direct the results to any destination with minimal effort.

IOblend’s use cases are diverse and impactful. It streams live data from factories to automated forecasting models and channels data from IoT sensors to real-time monitoring applications, enabling automated decision-making based on live inputs and historical statistics. Additionally, it handles the movement of production-grade streaming and batch data to and from cloud data warehouses and lakes, powers data exchanges, and feeds applications with data that adheres to complex business rules and governance policies.

The platform comprises two core components: the IOblend Designer and the IOblend Engine. The IOblend Designer is a desktop GUI used for designing, building, and testing data pipeline DAGs, producing metadata that describes the data pipelines. The IOblend Engine, the heart of the system, converts this metadata into Spark streaming jobs executed on any Spark cluster. Available in Developer and Enterprise suites, IOblend supports both local and remote engine operations, catering to a wide range of development and operational needs. It also facilitates collaborative development and pipeline versioning, making it a robust tool for modern data management and analytics.
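The general pattern of a designer emitting metadata that an engine then executes can be illustrated with a toy interpreter. The metadata layout, operation names, and `run` function below are entirely hypothetical and do not reflect IOblend's actual format or its Spark job generation; the sketch only shows how a DAG described as data can be ordered and run.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline metadata: each step names an operation and its upstream steps.
pipeline = {
    "read_events": {"op": "source", "inputs": []},
    "keep_failed": {"op": "filter_failed", "inputs": ["read_events"]},
    "count_failed": {"op": "count", "inputs": ["keep_failed"]},
}

# Hypothetical operation registry: each takes (upstream results, raw data).
OPERATIONS = {
    "source": lambda upstream, data: list(data),
    "filter_failed": lambda upstream, data: [
        e for e in upstream[0] if e["type"] == "payment_failed"
    ],
    "count": lambda upstream, data: len(upstream[0]),
}


def run(pipeline: dict, data: list) -> dict:
    """Execute the steps in dependency order and return every step's result."""
    graph = {name: step["inputs"] for name, step in pipeline.items()}
    results = {}
    for name in TopologicalSorter(graph).static_order():
        step = pipeline[name]
        upstream = [results[i] for i in step["inputs"]]
        results[name] = OPERATIONS[step["op"]](upstream, data)
    return results
```

Since the pipeline is plain data, it can be versioned, diffed, and reviewed like any other file, which is the property that makes collaborative development and pipeline versioning practical.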

Streaming Data Quality That Won’t Break Pipelines

Streaming Without the Sting: Data Quality Rules That Never Break the Flow 💻 Did you know? A single minute of downtime in a high-velocity streaming environment can result in the loss of millions of data points, potentially costing a business thousands of pounds in missed opportunities or regulatory fines. — Defining Resilient Streaming Quality Data quality in

Schema Drift: The Silent Killer of Data Pipelines

The Silent Pipeline Killer: Surviving Schema Drift in the Wild 📊 Did you know? In the early days of big data, a single column change in a source database could trigger a “data graveyard” effect, where downstream analytics remained broken for weeks. The silent pipeline killer Schema drift occurs when the structure of source data changes

Preventing Data Drift in Modern Data Systems

The Invisible Erosion: Detecting and Managing Data Drift in Modern Architectures 📊 Did you know? According to recent industry surveys, over 70% of organisations experience significant data drift within the first six months of deploying a production system. The Concept of Data Drift Data drift occurs when the statistical properties or the underlying structure of incoming data change

Stream Database Changes to Your Lakehouse with CDC

Zero-Lag Operations: Stream Database Changes to Your Lakehouse 💾 Did you know? The “data downtime” caused by traditional batch processing costs the average enterprise approximately £12,000 per minute. The Concept: Moving at the Speed of Change Zero-lag operations rely on a transition from periodic “snapshots” to continuous “streams.” Instead of moving massive blocks of data at

Real-Time Salesforce CDC to Snowflake

Real-Time CDC: Keep Salesforce and Snowflake in Perfect Sync 🔎 Did you know? While many businesses still rely on nightly batch windows to move CRM data, Salesforce generates millions of events every hour. The Concept: Real-Time CDC Real-Time Change Data Capture (CDC) is a software design pattern used to determine and track data that has

Build Production Spark Pipelines—No Scala Needed

Democratising Spark: How IOblend enables Data Analysts to build production-grade Spark pipelines without writing Scala or Java Did You Know? The average enterprise now manages over 350 different data sources, yet nearly 70% of data leaders report feeling “trapped” by their own infrastructure. The Concept: Democratising the Spark Engine At its core, Apache Spark is a lightning-fast, distributed computing

Continuous Data Replication for DR and Continuity

Continuous Data Replication: for Business Continuity and DR 📝 Did you know? According to industry studies, the average cost of IT downtime is approximately £4,500 per minute. For a large enterprise, a single hour of data loss or system unavailability can translate into millions in lost revenue, legal penalties, and irreparable brand damage. The Pulse of

Smart Meter Data: Billing to Forecasting

Utilities: Smart Meter Data to Billing and Demand Forecasting 📋 Did You Know? The global roll-out of smart meters generates more data in a single day than most utility companies used to collect in an entire decade. While traditional meters were read once a month, or even once a quarter, smart meters transmit data at intervals

SCADA Streams to Reliability Analytics

Energy: SCADA Streams to Reliability Analytics 🔌 Did you know? The average modern wind turbine or smart substation generates roughly 1 to 2 terabytes of data every month. However, historically, less than 5% of that sensor data was actually used for decision-making. Most of it was simply discarded or “siloed” in SCADA systems, serving as a

Building Live ETA Pipelines for Fleet Operations

Logistics: Live ETA Prediction Pipelines from Fleet + Orders 🚚 Did you know? The “Last Mile” is famously the most expensive and inefficient part of the supply chain, often accounting for up to 53% of total shipping costs. The Evolution of Real-Time Logistics Live ETA (Estimated Time of Arrival) prediction pipelines represent the shift from reactive

DB2 CDC to Lakehouse Without Re-Platforming

From DB2 to Lakehouse: Real-Time CDC Without Re-Platforming 💻 Did you know? Mainframe systems like DB2 still process approximately 30 billion business transactions every single day. Despite the rush toward modern cloud architectures, the world’s most critical financial and logistical data often resides in these “legacy” environments, making them the silent engines of the global economy.

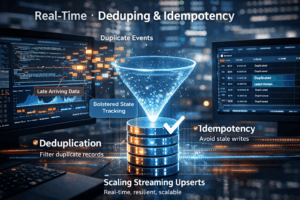

Real-Time Upserts: Deduping and Idempotency

Streaming Upserts Done Right: Deduping and Idempotency at Scale 💻 Did you know? In many high-velocity streaming environments, the “same” event can be sent or processed multiple times due to network retries or distributed system failures. The Art of the Upsert At its core, a streaming upsert (a portmanteau of “update” and “insert”) is the process of synchronising incoming data with an existing