Use Case

IOblend is gaining popularity in the murky world of data integration. From automating real-time factory data collection to ERP data synchronisation to multi-system integrations, IOblend covers a wide variety of use cases. Customers and implementation partners are discovering how our new technology can help them improve delivery timescales, lower implementation costs and increase project ROI. Let’s see the latest project made successful with IOblend.

Background

The client needed to make data from their main operational system available for immediate consumption by five downstream processes. The existing solution in place was costly to manage (several intermediate staging steps), required frequent manual interventions and did not support real-time processing (micro-batch only).

The challenge was to transform data into a common standard. It needed to be validated for quality and completeness. The solution had to be able to account for source system disruptions. In addition, the solution had to provide the full lineage for each data record to comply with strict audit requirements.

The infrastructure was already in place, so the key constraint was to make the solution compatible with AWS.

After a thorough market study, the client selected IOblend due to performance, flexibility, ease of use, and fast development time.

Objectives

The primary objectives for selecting IOblend were:

- Integration: The tool needed to integrate smoothly with the client’s existing AWS infrastructure. The client wanted to minimise disruption to the existing integration process and downstream consumers.

- Manageability: The solution had to be easy to deploy, control, and configure within the client’s secure cloud environment.

- Flexibility: The solution had to be able to accommodate complex transformation logic, format changes, and stored procedures. Real-time processing was essential.

- Usability: The solution had to have a minimal learning curve, enabling quick setup and use by existing developers.

Implementation

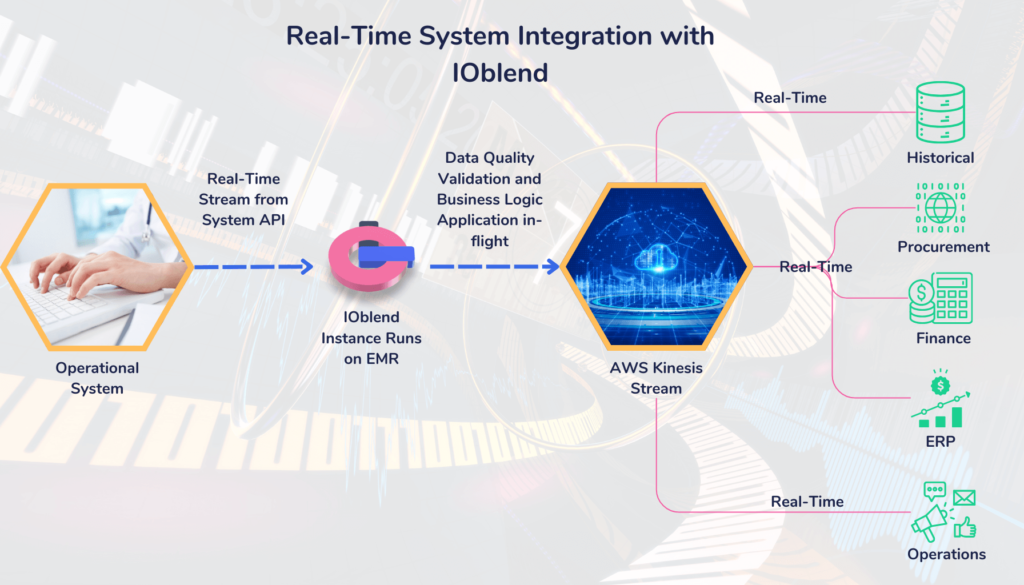

IOblend connected to the Ops system via an existing API. It replaced the AWS Glue batch ingest (timed polling) with a real-time, event-driven mechanism.

The next step was to transform data formats into an agreed standard. The existing architecture required a staging layer within AWS to convert the formats. IOblend performs such steps in memory, while the data is in-transit, thus reducing latency and removing a staging layer. The fetched data was transformed using custom Python scripts to fit the required formats of the analytical processes.
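As a rough illustration of in-transit format conversion, the sketch below renames fields and normalises types as records pass through a Python generator, with nothing written to a staging store. It is not the client's script or IOblend's API: the field names, the `FIELD_MAP` mapping, and the source and target formats are all hypothetical.

```python
from datetime import datetime, timezone

# Hypothetical mapping from source field names to the agreed standard names.
FIELD_MAP = {"ordId": "order_id", "qty": "quantity", "ts": "event_time"}


def to_standard(record: dict) -> dict:
    """Rename fields and normalise types for a single record."""
    out = {FIELD_MAP.get(key, key): value for key, value in record.items()}
    # Assumed source format: epoch seconds. Assumed target: ISO 8601 in UTC.
    out["event_time"] = datetime.fromtimestamp(
        int(out["event_time"]), tz=timezone.utc
    ).isoformat()
    out["quantity"] = int(out["quantity"])
    return out


def transform_stream(records):
    """Transform records lazily as they arrive, without a staging step."""
    for record in records:
        yield to_standard(record)
```

Because `transform_stream` is a generator, each record is converted only when the next stage asks for it, which is the same idea as transforming data while it is in transit.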

Data quality rules were also inserted into the pipeline based on acceptable thresholds. IOblend sends alerts to the developers and separates non-conforming data into a standalone table for completeness.
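A threshold-based quality gate of this kind can be sketched in plain Python. The rules, the thresholds (`MAX_QUANTITY`, `MAX_REJECT_RATE`), and the field names are assumptions made for illustration; the case study does not describe the client's actual rules or how IOblend implements them.

```python
# Hypothetical thresholds, chosen only for this example.
MAX_QUANTITY = 10_000
MAX_REJECT_RATE = 0.05


def check_record(record: dict) -> list:
    """Return the list of rule violations for one record (empty means valid)."""
    problems = []
    if not record.get("order_id"):
        problems.append("missing order_id")
    quantity = record.get("quantity")
    if not isinstance(quantity, int) or not 0 < quantity <= MAX_QUANTITY:
        problems.append("quantity out of range")
    return problems


def split_by_quality(records: list, alert=print):
    """Separate conforming from non-conforming records and alert on high reject rates."""
    valid, rejected = [], []
    for record in records:
        problems = check_record(record)
        if problems:
            # Keep the failed record with its reasons so nothing is lost.
            rejected.append({**record, "dq_errors": problems})
        else:
            valid.append(record)
    reject_rate = len(rejected) / len(records) if records else 0.0
    if reject_rate > MAX_REJECT_RATE:
        alert(f"Data quality alert: {reject_rate:.0%} of records rejected")
    return valid, rejected
```

The `rejected` list stands in for the standalone table of non-conforming data, and the `alert` callback stands in for whatever notification channel the developers use.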

The transformed, curated data was deposited in an AWS Kinesis stream, which acts as a queue for onward real-time and on-demand consumption. No further post-processing was required, and no additional ETL or management technologies were used.

The pipeline was deployed on the client’s AWS EMR cluster. IOblend automatically optimises and manages Spark compute.

IOblend ran in parallel with the existing process until QA sign-off, at which point it took over without any disruption to the downstream consumers.
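For readers unfamiliar with Kinesis, writing curated records to a stream might look like the sketch below. It assumes a boto3 Kinesis client is passed in and uses its `put_records` call; the `publish` helper, the `order_id` partition key, and the stream name in the usage note are hypothetical, and this is not how IOblend itself writes to Kinesis.

```python
import json


def publish(client, stream_name: str, records: list, key_field: str = "order_id") -> int:
    """Send records to a Kinesis data stream and return how many failed."""
    failed = 0
    # The PutRecords API accepts at most 500 records per request.
    for start in range(0, len(records), 500):
        batch = records[start:start + 500]
        response = client.put_records(
            StreamName=stream_name,
            Records=[
                {
                    "Data": json.dumps(record).encode("utf-8"),
                    "PartitionKey": str(record[key_field]),
                }
                for record in batch
            ],
        )
        failed += response.get("FailedRecordCount", 0)
    return failed
```

With boto3 installed and AWS credentials configured, a call could look like `publish(boto3.client("kinesis"), "curated-stream", valid_records)`. A non-zero return value means some records were not accepted and would need to be retried.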

Benefits

The integration of IOblend provided several benefits:

- Enhanced data processing: Enabled complex data transformations in real time with minimal effort.

- Scalability: Automatically scalable to handle increased data volumes.

- Security: Ensured data security by operating within the client’s strictly controlled AWS environment.

- Flexibility: Effortlessly supported multiple data formats and transformation techniques using Python and SQL.

- Data quality: Enabled automated data curation, lineage, CDC, eventing, regressions, alerting and full logging.

- Cost reduction: Removed the need for a data staging layer, minimised maintenance effort through automation and reduced AWS compute costs.

- Fast deployment: The pipeline went into production within five days of the project start, massively reducing development time and cost. The project was delivered by a single developer who had never worked with IOblend before.

Conclusion

We believe data integration tools should be simple and versatile. This is why we created a “Swiss army knife” solution that lets you perform production-grade data integration tasks in a simple way. IOblend can work with any infrastructure, existing business processes, security protocols and developer resources.

With IOblend, you will drastically reduce the cost of production data pipeline development, simplify your architecture and integrate any data securely across all systems.

This is what the data developer has said about IOblend in this project: “The adoption of IOblend significantly improved our data transformation capabilities, allowing for efficient and secure data integration between multiple systems. The flexible and user-friendly nature of IOblend facilitated rapid deployment and ease of management, meeting all the outlined objectives effectively.”

If you want to learn how IOblend can help you with data integration challenges, reach out to us. We can help you reduce cost and accelerate delivery of your data migrations, system synchronisations, IoT and real-time analytics, CRM and ERP integrations, plus lots more.

IOblend presents a ground-breaking approach to IoT and data integration, revolutionizing the way businesses handle their data. It’s an all-in-one data integration accelerator, boasting real-time, production-grade, managed Apache Spark™ data pipelines that can be set up in mere minutes. This facilitates a massive acceleration in data migration projects, whether from on-prem to cloud or between clouds, thanks to its low code/no code development and automated data management and governance.

IOblend also simplifies the integration of streaming and batch data through Kappa architecture, significantly boosting the efficiency of operational analytics and MLOps. Its system enables the robust and cost-effective delivery of both centralized and federated data architectures, with low latency and massively parallelized data processing, capable of handling over 10 million transactions per second. Additionally, IOblend integrates seamlessly with leading cloud services like Snowflake and Microsoft Azure, underscoring its versatility and broad applicability in various data environments.

At its core, IOblend is an end-to-end enterprise data integration solution built with DataOps capability. It stands out as a versatile ETL product for building and managing data estates with high-grade data flows. The platform powers operational analytics and AI initiatives, drastically reducing the costs and development efforts associated with data projects and data science ventures. It’s engineered to connect to any source, perform in-memory transformations of streaming and batch data, and direct the results to any destination with minimal effort.

IOblend’s use cases are diverse and impactful. It streams live data from factories to automated forecasting models and channels data from IoT sensors to real-time monitoring applications, enabling automated decision-making based on live inputs and historical statistics. Additionally, it handles the movement of production-grade streaming and batch data to and from cloud data warehouses and lakes, powers data exchanges, and feeds applications with data that adheres to complex business rules and governance policies.

The platform comprises two core components: the IOblend Designer and the IOblend Engine. The IOblend Designer is a desktop GUI used for designing, building, and testing data pipeline DAGs, producing metadata that describes the data pipelines. The IOblend Engine, the heart of the system, converts this metadata into Spark streaming jobs executed on any Spark cluster. Available in Developer and Enterprise suites, IOblend supports both local and remote engine operations, catering to a wide range of development and operational needs. It also facilitates collaborative development and pipeline versioning, making it a robust tool for modern data management and analytics.
