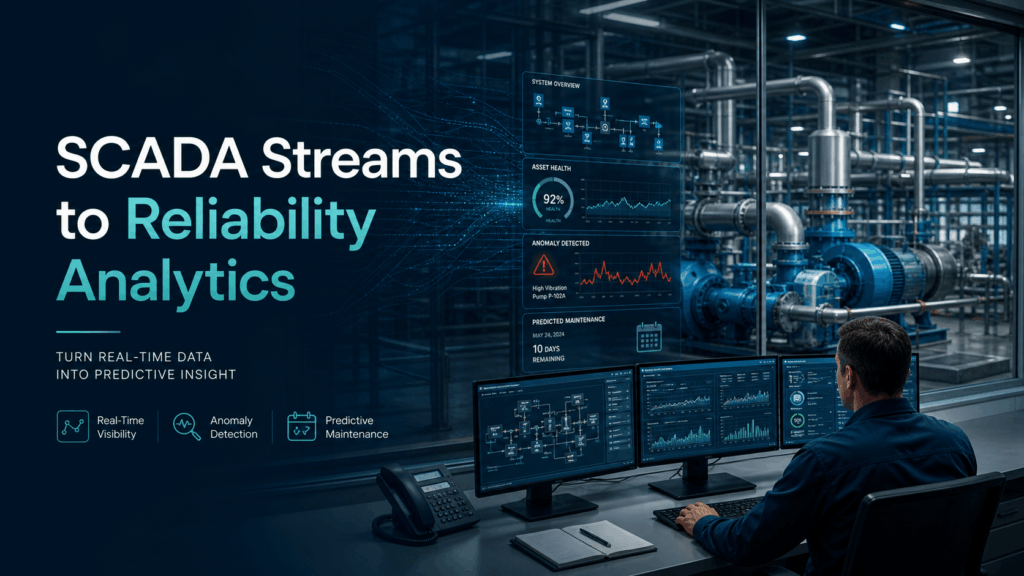

Energy: SCADA Streams to Reliability Analytics

🔌 Did you know? The average modern wind turbine or smart substation generates roughly 1 to 2 terabytes of data every month. However, historically, less than 5% of that sensor data was actually used for decision-making. Most of it was simply discarded or “siloed” in SCADA systems, serving as a digital graveyard rather than a source of operational intelligence.

From Streams to Insights

At its core, the transition from SCADA (Supervisory Control and Data Acquisition) streams to reliability analytics is about turning raw telemetry into foresight. SCADA systems are excellent at real-time monitoring: they tell you whether a breaker is open or a turbine is spinning. Reliability analytics, by contrast, tracks the “health” of an asset over time. It consumes high-frequency streams of temperature, vibration, and voltage data to predict when a component is likely to fail before it actually does, moving the industry from reactive repairs to proactive maintenance.
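To make the idea concrete, here is a minimal sketch of one common building block of reliability analytics: flagging a sensor reading that deviates sharply from its recent history. It uses a rolling z-score over a fixed window, a deliberately simple stand-in rather than the model any particular vendor uses. The class name, window size, threshold, and sample vibration values are illustrative assumptions.

```python
from collections import deque
from math import sqrt


class RollingZScoreDetector:
    """Flags readings that deviate sharply from a trailing window (illustrative only).

    Keeps the last `window` readings for a single sensor and reports a new
    reading as anomalous when it lies more than `threshold` standard
    deviations from the mean of that window.
    """

    def __init__(self, window: int = 20, threshold: float = 3.0):
        self.history = deque(maxlen=window)  # bounded memory per sensor
        self.threshold = threshold

    def update(self, value: float) -> bool:
        """Score `value` against the trailing window, then add it to the window."""
        is_anomaly = False
        if len(self.history) == self.history.maxlen:
            mean = sum(self.history) / len(self.history)
            std = sqrt(sum((x - mean) ** 2 for x in self.history) / len(self.history))
            if std == 0:
                # Perfectly flat history: any change at all is unusual.
                is_anomaly = value != mean
            else:
                is_anomaly = abs(value - mean) / std > self.threshold
        self.history.append(value)
        return is_anomaly


if __name__ == "__main__":
    # Hypothetical vibration readings (mm/s) with one injected spike.
    detector = RollingZScoreDetector(window=5, threshold=3.0)
    readings = [2.0, 2.1, 1.9, 2.0, 2.1, 2.0, 6.5, 2.0]
    print([detector.update(r) for r in readings])
    # prints [False, False, False, False, False, False, True, False]
```

A bounded window keeps memory constant per sensor, which suits high-frequency streams; production reliability models typically add operating-mode context, seasonality, and multivariate features on top of this kind of signal.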

The Data Engineering Bottleneck

For most energy providers, the hurdle isn’t a lack of data but the sheer complexity of moving it. SCADA data is notoriously difficult to work with: it often arrives in proprietary formats, at slow polling intervals, or through legacy protocols that don’t play well with modern cloud environments.

Data teams face “The Three Silos”:

- Format Fragmentation: Mixing time-series data from sensors with relational data from Enterprise Asset Management (EAM) systems.

- Latency Gaps: By the time a data engineer builds a manual pipeline to clean the SCADA noise, the “real-time” window for preventing a transformer blowout has already closed.

- Governance Debt: Ensuring that sensitive grid data remains encrypted and compliant while moving across pipelines is often a manual, error-prone process.
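The format-fragmentation problem can be sketched as a join between two worlds: time-series readings keyed by a sensor tag, and relational asset records from an EAM system. This is a minimal illustration assuming a simple one-to-one mapping from tag to asset; the field names (`tag`, `asset_id`, `criticality`) and sample values are hypothetical, and real EAM hierarchies are usually many-to-one and change over time.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Reading:
    """One time-series sample, as it might arrive from a SCADA historian."""
    tag: str  # hypothetical sensor tag, e.g. "T01.GBX.TEMP"
    timestamp: datetime
    value: float


@dataclass(frozen=True)
class AssetRecord:
    """One relational row, as it might come from an EAM system."""
    tag: str
    asset_id: str
    criticality: str  # hypothetical field: "high" / "medium" / "low"


def enrich(readings: list[Reading], assets: list[AssetRecord]) -> tuple[list[dict], list[Reading]]:
    """Hash-join each reading to its asset record by sensor tag.

    Returns (enriched, orphans). Orphans are readings whose tag has no EAM
    record; they are kept separately instead of being silently dropped.
    """
    by_tag = {a.tag: a for a in assets}
    enriched, orphans = [], []
    for r in readings:
        asset = by_tag.get(r.tag)
        if asset is None:
            orphans.append(r)
            continue
        enriched.append({
            "asset_id": asset.asset_id,
            "criticality": asset.criticality,
            "tag": r.tag,
            "timestamp": r.timestamp,
            "value": r.value,
        })
    return enriched, orphans


if __name__ == "__main__":
    ts = datetime(2024, 1, 1, tzinfo=timezone.utc)
    assets = [AssetRecord("T01.GBX.TEMP", "WTG-01", "high")]
    readings = [Reading("T01.GBX.TEMP", ts, 71.4), Reading("T99.UNKNOWN", ts, 3.2)]
    enriched, orphans = enrich(readings, assets)
    print(len(enriched), len(orphans))  # prints 1 1
```

Keeping orphaned readings matters in practice: an unmapped tag often signals a new sensor or a stale asset register, exactly the kind of gap that erodes trust in downstream reliability models.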

How IOblend Turns SCADA Data Into Operational Foresight

This is where IOblend changes the economics of reliability analytics. Most energy data projects do not fail because the analytics models are weak. They fail because the data arrives late, fragmented, poorly governed, or trapped inside legacy SCADA and operational systems.

IOblend removes that bottleneck by turning complex data engineering into reusable, controlled pipeline logic. Instead of spending months hand-coding fragile ETL jobs for every new asset, site, protocol, or system, teams can build governed, repeatable data flows that move SCADA telemetry, asset records, maintenance data, and operational events into analytics-ready environments faster.

- Real-time streams, not stale snapshots

IOblend supports real-time and event-driven ingestion, including change data capture (CDC) and streaming patterns, so reliability models can work from current operational signals rather than yesterday’s batch exports. That matters when the difference between prevention and failure is measured in minutes, not reporting cycles.

- SCADA plus context, not SCADA in isolation

Raw sensor data alone rarely explains asset risk. IOblend helps combine high-frequency SCADA streams with enterprise data from EAM, ERP, maintenance logs, inspections, and operational systems, giving reliability teams the full context behind performance degradation, recurring faults, and early-warning patterns.

- Governed pipelines by design

Critical infrastructure data needs control, traceability, and security from the start. IOblend brings governance into the pipeline layer with validation, lineage, exception handling, and privacy-aware controls, so teams can scale analytics without creating a shadow estate of unmanaged scripts and risky data movement.

- Built for change, not brittle integrations

Energy environments change constantly: new assets, new schemas, new sensors, new reporting requirements, and new analytics platforms. IOblend’s metadata-driven architecture makes pipeline logic reusable and easier to adapt, reducing the cost of change compared with hard-coded integrations.

- Flexible across modern data platforms

Whether the target is Spark, Snowflake, Databricks, a lakehouse, warehouse, or operational analytics layer, IOblend provides a controlled framework for building and executing pipelines without locking teams into one rigid architecture.
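The governance and metadata-driven ideas above can be illustrated together. Below is a minimal sketch in which the field mapping, types, and valid ranges live in a declarative spec rather than in code, and records that fail validation are routed to an exceptions list with a reason instead of being dropped. The spec layout, field names, and ranges are hypothetical assumptions for illustration; this is not IOblend's configuration format or API.

```python
# Hypothetical pipeline metadata (NOT IOblend's actual configuration format).
# target field -> (source key, type to cast to, (min, max) bounds or None)
PIPELINE_SPEC = {
    "fields": {
        "asset_id": ("AssetTag", str, None),
        "temperature_c": ("Temp", float, (-40.0, 150.0)),
        "vibration_mm_s": ("Vib", float, (0.0, 50.0)),
    }
}


def run_pipeline(records, spec):
    """Map and validate raw records according to `spec`.

    Returns (valid, exceptions). Each exception keeps the original record and
    the first reason it failed, so bad data is quarantined and traceable.
    """
    valid, exceptions = [], []
    for raw in records:
        out, error = {}, None
        for target, (source, cast, bounds) in spec["fields"].items():
            if source not in raw:
                error = f"missing field {source!r}"
                break
            try:
                value = cast(raw[source])
            except (TypeError, ValueError):
                error = f"cannot convert {source!r} to {cast.__name__}"
                break
            if bounds is not None and not (bounds[0] <= value <= bounds[1]):
                error = f"{source!r}={value} outside {bounds}"
                break
            out[target] = value
        if error is None:
            valid.append(out)
        else:
            exceptions.append({"record": raw, "reason": error})
    return valid, exceptions


if __name__ == "__main__":
    raw = [
        {"AssetTag": "T01", "Temp": "65.2", "Vib": "3.1"},
        {"AssetTag": "T02", "Temp": "999", "Vib": "2.0"},  # temperature out of range
        {"AssetTag": "T03", "Vib": "1.0"},                 # temperature missing
    ]
    valid, exceptions = run_pipeline(raw, PIPELINE_SPEC)
    print(len(valid), len(exceptions))  # prints 1 2
    for e in exceptions:
        print(e["reason"])
```

In this style, onboarding a new sensor type means adding an entry to the spec rather than writing a new transformation, which is the general idea behind metadata-driven pipelines.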

The result is a faster path from SCADA visibility to reliability intelligence: fewer brittle pipelines, less manual engineering, stronger governance, and analytics teams that can focus on preventing failures instead of constantly repairing the data layer.

Ready to stop managing pipelines and start mastering reliability? Supercharge your energy data strategy with IOblend.
