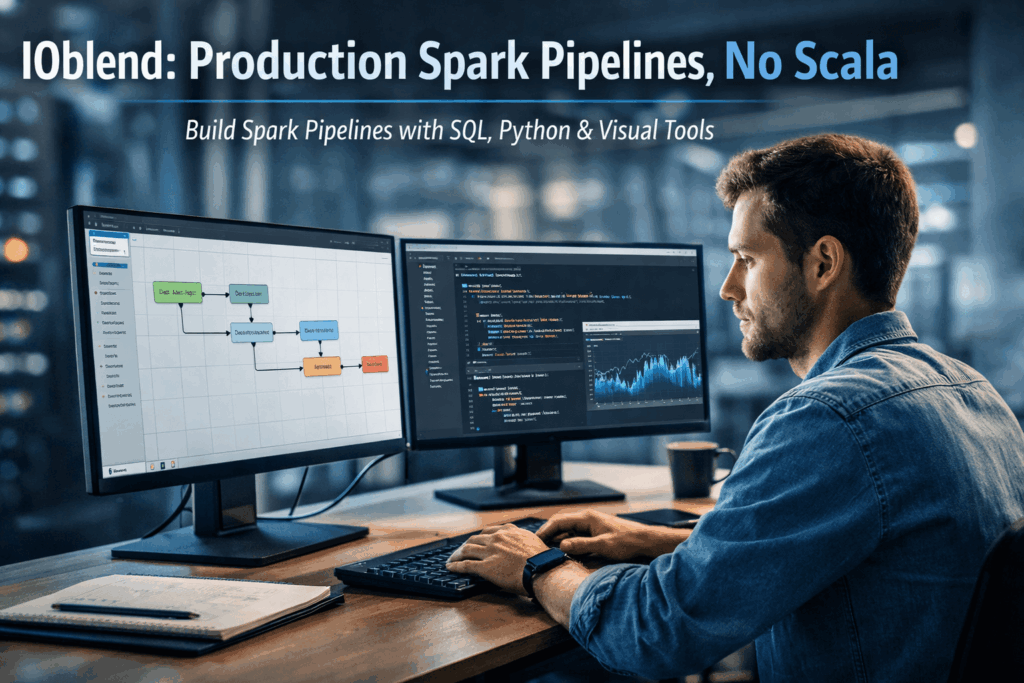

Democratising Spark: How IOblend enables Data Analysts to build production-grade Spark pipelines without writing Scala or Java

The Concept: Democratising the Spark Engine

At its core, Apache Spark is a lightning-fast, distributed computing framework capable of processing petabytes of data. However, for years, “production-grade” Spark was synonymous with complex software engineering.

IOblend changes this narrative by decoupling the power of Spark from the complexity of its code. It acts as an abstraction layer (a managed Spark DataOps environment) that allows Data Analysts to build, deploy, and govern high-performance pipelines using only SQL, Python, or a drag-and-drop interface.

Why Businesses Struggle

For most organisations, the path from “data ingestion” to “actionable insight” is blocked by three primary obstacles:

- The Talent Gap: Expert Spark developers (fluent in Scala or Java) are rare and expensive. This creates a dependency where Analysts must wait months for Engineering teams to “productionise” a simple data model.

- Brittle Pipelines: Traditional hand-coded pipelines often lack built-in DataOps. Without automated error handling, record-level lineage, or schema drift detection, pipelines “fail quietly,” leading to untrustworthy reports.

- Real-Time Rigidity: Many legacy systems are built on batch processing. Transitioning to real-time streaming usually requires a complete architectural overhaul, often resulting in “vendor lock-in” to expensive cloud ecosystems.
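To make the "fail quietly" problem concrete, here is a minimal sketch of schema drift detection in plain Python. It is an illustration only, not IOblend's implementation: the `EXPECTED_SCHEMA` mapping, the column names, and both function names are hypothetical. The idea is simply to compare each incoming record with the schema the pipeline expects and to fail loudly when they diverge.

```python
from typing import Any

# Hypothetical expected schema (assumption for illustration): column -> Python type.
EXPECTED_SCHEMA = {"order_id": int, "amount": float, "currency": str}


def detect_schema_drift(record: dict[str, Any], expected: dict[str, type]) -> list[str]:
    """Return a list of drift findings for one record (empty list means no drift)."""
    findings = []
    # Check every expected column for presence and type.
    for column, expected_type in expected.items():
        if column not in record:
            findings.append(f"missing column: {column}")
        elif not isinstance(record[column], expected_type):
            actual = type(record[column]).__name__
            findings.append(
                f"type change: {column} expected {expected_type.__name__}, got {actual}"
            )
    # Flag columns the pipeline was never told about.
    for column in record:
        if column not in expected:
            findings.append(f"unexpected column: {column}")
    return findings


def validate_batch(records: list[dict[str, Any]], expected: dict[str, type]) -> int:
    """Raise on the first drifting record instead of passing bad data downstream."""
    for index, record in enumerate(records):
        findings = detect_schema_drift(record, expected)
        if findings:
            raise ValueError(f"schema drift in record {index}: {findings}")
    return len(records)
```

A hand-coded pipeline without a check of this kind would typically load the drifting record anyway, which is how an untrustworthy report ends up in front of the business.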

The IOblend Solution: Production Power Without the Code

IOblend transforms these challenges into a streamlined, automated workflow. By utilising a Kappa-based architecture, in which batch data is processed as a bounded stream through the same path as live data, it treats batch and streaming data with equal ease, allowing businesses to achieve 90% faster delivery of data products.
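The Kappa idea can be sketched in a few lines of plain Python. This is a conceptual illustration rather than IOblend or Spark code, and the `enrich` function, the `live_feed` generator, and the record fields are all hypothetical. The point it shows is that one transformation serves both a finite historical dataset and an open-ended feed, so there is no separate batch code path to maintain.

```python
from typing import Iterable, Iterator


def enrich(events: Iterable[dict]) -> Iterator[dict]:
    """One transformation shared by historical (batch) and live (streaming) data."""
    for event in events:
        if event["amount"] <= 0:
            continue  # drop invalid records
        # Add a derived field; the input record is left unmodified.
        yield {**event, "amount_pence": round(event["amount"] * 100)}


# "Batch": a finite, replayable log of historical events.
historical = [{"id": 1, "amount": 2.5}, {"id": 2, "amount": -1.0}]


def live_feed() -> Iterator[dict]:
    """Stand-in for an unbounded source such as a message queue (assumption)."""
    yield {"id": 3, "amount": 4.0}
    yield {"id": 4, "amount": 0.99}


# The same function consumes both sources without modification.
batch_result = list(enrich(historical))
stream_result = list(enrich(live_feed()))
```

In a real deployment the live source would never terminate, so the results would be written to a sink incrementally rather than collected into a list.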

Key features that solve common business issues include:

- Visual Designer & Engine: Use a desktop GUI to design complex Directed Acyclic Graphs (DAGs). The IOblend Engine then converts these into efficient Spark jobs that run on any infrastructure: on-prem, cloud, or hybrid.

- In-built DataOps: Every pipeline automatically includes record-level lineage, Change Data Capture (CDC), and Slowly Changing Dimensions (SCD). You no longer need to bolt governance on afterwards; it is baked into the metadata.

- Agentic AI Integration: Uniquely, IOblend allows you to embed AI agents directly into the ETL flow. You can validate, ground, and transform unstructured data before it even hits your warehouse.

- Zero Lock-in: Pipelines are stored as portable JSON playbooks. This ensures your business logic remains your own, easily versioned in standard repositories like Git.
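As a rough illustration of why a declarative, JSON-based pipeline definition is portable, the sketch below parses a small playbook and resolves its steps into a valid execution order using Python's standard library. The playbook layout shown here (`name`, `steps`, `depends_on`) is a hypothetical format invented for this example and is not IOblend's actual playbook schema.

```python
import json
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical playbook (assumption): each step lists the steps it depends on.
PLAYBOOK_JSON = """
{
  "name": "daily_orders",
  "steps": {
    "read_orders":    {"depends_on": []},
    "read_customers": {"depends_on": []},
    "join":           {"depends_on": ["read_orders", "read_customers"]},
    "write_report":   {"depends_on": ["join"]}
  }
}
"""


def execution_order(playbook_text: str) -> list[str]:
    """Parse a playbook and return its steps in dependency order.

    Raises graphlib.CycleError if the steps do not form a DAG.
    """
    playbook = json.loads(playbook_text)
    # Map each step to the set of steps that must finish before it.
    graph = {
        name: set(step["depends_on"])
        for name, step in playbook["steps"].items()
    }
    return list(TopologicalSorter(graph).static_order())


order = execution_order(PLAYBOOK_JSON)
```

Because the definition is plain text, a change to the pipeline shows up as an ordinary diff in Git, and any engine that understands the format can execute it.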

It’s time to find your flow with IOblend.
