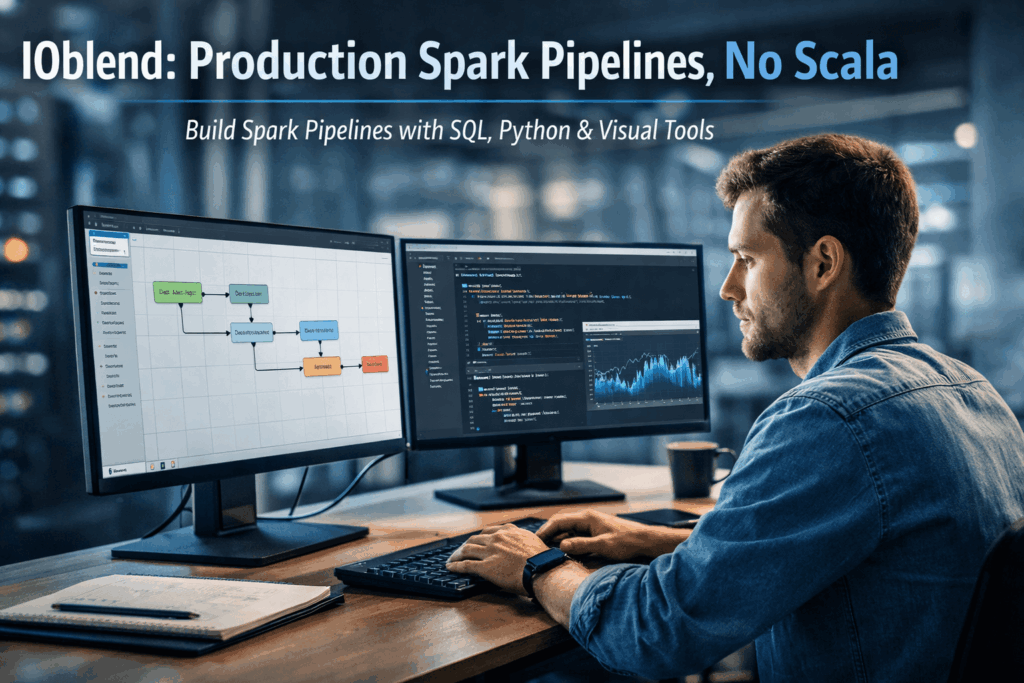

Democratising Spark: How IOblend enables Data Analysts to build production-grade Spark pipelines without writing Scala or Java

The Concept: Democratising the Spark Engine

At its core, Apache Spark is a lightning-fast, distributed computing framework capable of processing petabytes of data. However, for years, “production-grade” Spark was synonymous with complex software engineering.

IOblend changes this narrative by decoupling the power of Spark from the complexity of its code. It acts as an abstraction layer (a managed Spark DataOps environment) that allows Data Analysts to build, deploy, and govern high-performance pipelines using only SQL, Python, or a drag-and-drop interface.

Why Businesses Struggle

For most organisations, the path from “data ingestion” to “actionable insight” is riddled with three primary obstacles:

- The Talent Gap: Expert Spark developers (fluent in Scala or Java) are rare and expensive. This creates a dependency where Analysts must wait months for Engineering teams to “productionise” a simple data model.

- Brittle Pipelines: Traditional hand-coded pipelines often lack built-in DataOps. Without automated error handling, record-level lineage, or schema drift detection, pipelines “fail quietly,” leading to untrustworthy reports.

- Real-Time Rigidity: Many legacy systems are built on batch processing. Transitioning to real-time streaming usually requires a complete architectural overhaul, often resulting in “vendor lock-in” to expensive cloud ecosystems.
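To make the "fail quietly" problem concrete, here is a minimal sketch of a schema drift check in plain Python. It is not IOblend code: the `EXPECTED_SCHEMA` columns and the `detect_schema_drift` and `validate_batch` helpers are hypothetical names chosen for illustration. The point is only that drifted records are set aside with a stated reason rather than flowing silently into a report.

```python
from __future__ import annotations

from typing import Any

# Hypothetical expected schema (an assumption for this sketch):
# column name -> Python type the pipeline was built against.
EXPECTED_SCHEMA = {"order_id": int, "amount": float, "currency": str}


def detect_schema_drift(record: dict[str, Any], expected: dict[str, type]) -> list[str]:
    """Return human-readable drift findings for a single record."""
    findings = []
    # Columns the pipeline expects: check presence, then type.
    for column, expected_type in expected.items():
        if column not in record:
            findings.append(f"missing column: {column}")
        elif not isinstance(record[column], expected_type):
            actual = type(record[column]).__name__
            findings.append(
                f"type change in {column}: expected {expected_type.__name__}, got {actual}"
            )
    # Columns the source has started sending that the pipeline does not know.
    for column in record:
        if column not in expected:
            findings.append(f"unexpected column: {column}")
    return findings


def validate_batch(records: list[dict[str, Any]], expected: dict[str, type]):
    """Split records into clean rows and rejected rows with reasons attached."""
    clean, rejected = [], []
    for index, record in enumerate(records):
        findings = detect_schema_drift(record, expected)
        if findings:
            rejected.append({"row": index, "record": record, "reasons": findings})
        else:
            clean.append(record)
    return clean, rejected
```

Calling `validate_batch(rows, EXPECTED_SCHEMA)` returns the rows that match the schema plus a list of rejects, each carrying its row index and reasons, so a failure is visible instead of silent.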

The IOblend Solution: Production Power Without the Code

IOblend transforms these challenges into a streamlined, automated workflow. It uses a Kappa-based architecture, in which batch data is handled as a bounded stream through the same processing path as real-time data, so one pipeline design serves both. This allows businesses to achieve 90% faster delivery of data products.

Key features that solve common business issues include:

- Visual Designer & Engine: Use a desktop GUI to design complex Directed Acyclic Graphs (DAGs). The IOblend Engine then converts these into efficient Spark jobs that run on any infrastructure: on-prem, cloud, or hybrid.

- In-built DataOps: Every pipeline automatically includes record-level lineage, Change Data Capture (CDC), and Slowly Changing Dimensions (SCD). You no longer need to “bolt on” governance; it is baked into the metadata.

- Agentic AI Integration: Uniquely, IOblend allows you to embed AI agents directly into the ETL flow. You can validate, ground, and transform unstructured data before it even hits your warehouse.

- Zero Lock-in: Pipelines are stored as portable JSON playbooks. This ensures your business logic remains your own, easily versioned in standard repositories like Git.
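As an illustration of why a JSON pipeline definition is portable, the sketch below reads a pipeline described as JSON and works out a valid execution order for its DAG. The JSON layout here (`steps`, `id`, `depends_on`) is a hypothetical format invented for this example, not IOblend's actual playbook schema, and `execution_order` is an illustrative helper. It relies only on Python's standard library (`json` and `graphlib`, available from Python 3.9).

```python
import json
from graphlib import CycleError, TopologicalSorter

# Hypothetical pipeline definition (an assumption for this sketch,
# not the real IOblend playbook format).
PLAYBOOK_JSON = """
{
  "name": "orders_daily",
  "steps": [
    {"id": "load_orders", "depends_on": []},
    {"id": "load_customers", "depends_on": []},
    {"id": "join", "depends_on": ["load_orders", "load_customers"]},
    {"id": "publish", "depends_on": ["join"]}
  ]
}
"""


def execution_order(playbook_text: str) -> list:
    """Return step ids in an order that respects every dependency."""
    playbook = json.loads(playbook_text)
    # Map each step to the set of steps that must finish before it.
    graph = {step["id"]: set(step["depends_on"]) for step in playbook["steps"]}
    try:
        return list(TopologicalSorter(graph).static_order())
    except CycleError as error:
        # A cycle means the definition is not a DAG and cannot be scheduled.
        raise ValueError(f"pipeline is not a DAG: {error.args[1]}") from error


if __name__ == "__main__":
    print(execution_order(PLAYBOOK_JSON))
```

Because the definition is plain text, it can be diffed and reviewed in Git like any other source file, and any engine that understands the format can derive the same execution order from it.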

It’s time to find your flow with IOblend.

Continuous Data Replication for DR and Continuity

Continuous Data Replication: for Business Continuity and DR 📝 Did you know? According to industry studies, the average cost of IT downtime is approximately £4,500 per minute. For a large enterprise, a single hour of data loss or system unavailability can translate into millions in lost revenue, legal penalties, and irreparable brand damage. The Pulse of

Smart Meter Data: Billing to Forecasting

Utilities: Smart Meter Data to Billing and Demand Forecasting 📋 Did You Know? The global roll-out of smart meters generates more data in a single day than most utility companies used to collect in an entire decade. While traditional meters were read once a month, or even once a quarter, smart meters transmit data at intervals

SCADA Streams to Reliability Analytics

Energy: SCADA Streams to Reliability Analytics 🔌 Did you know? The average modern wind turbine or smart substation generates roughly 1 to 2 terabytes of data every month. However, historically, less than 5% of that sensor data was actually used for decision-making. Most of it was simply discarded or “siloed” in SCADA systems, serving as a

Building Live ETA Pipelines for Fleet Operations

Logistics: Live ETA Prediction Pipelines from Fleet + Orders 🚚 Did you know? The “Last Mile” is famously the most expensive and inefficient part of the supply chain, often accounting for up to 53% of total shipping costs. The Evolution of Real-Time Logistics Live ETA (Estimated Time of Arrival) prediction pipelines represent the shift from reactive

DB2 CDC to Lakehouse Without Re-Platforming

From DB2 to Lakehouse: Real-Time CDC Without Re-Platforming 💻 Did you know? Mainframe systems like DB2 still process approximately 30 billion business transactions every single day. Despite the rush toward modern cloud architectures, the world’s most critical financial and logistical data often resides in these “legacy” environments, making them the silent engines of the global economy.

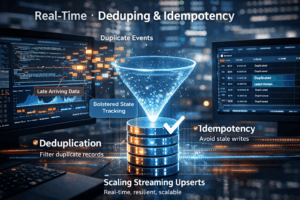

Real-Time Upserts: Deduping and Idempotency

Streaming Upserts Done Right: Deduping and Idempotency at Scale 💻 Did you know? In many high-velocity streaming environments, the “same” event can be sent or processed multiple times due to network retries or distributed system failures. The Art of the Upsert At its core, a streaming upsert (a portmanteau of “update” and “insert”) is the process of synchronising incoming data with an existing