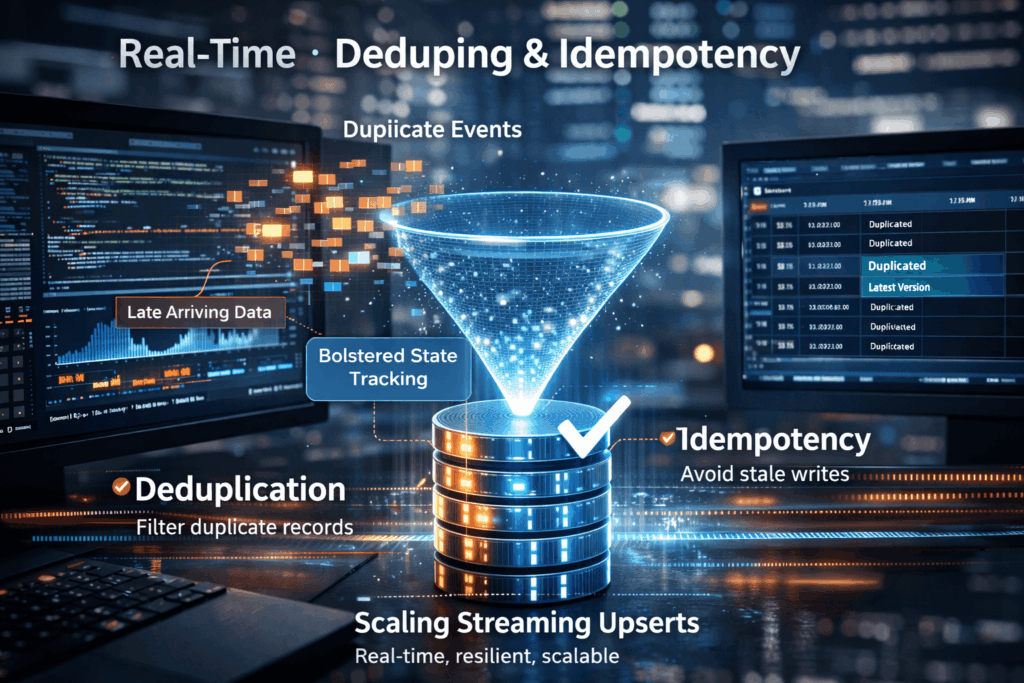

Streaming Upserts Done Right: Deduping and Idempotency at Scale

💻 Did you know? In many high-velocity streaming environments, the “same” event can be sent or processed multiple times due to network retries or distributed system failures.

The Art of the Upsert

At its core, a streaming upsert (a portmanteau of “update” and “insert”) is the process of synchronising incoming data with an existing dataset in real time. If a record with a specific primary key already exists, it is updated; if not, it is created.
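To make the definition concrete, here is a minimal sketch of an upsert using Python's built-in `sqlite3` module. The `customers` table, its columns, and the `upsert_customer` helper are hypothetical names chosen for illustration, and the example assumes a SQLite build that supports `ON CONFLICT ... DO UPDATE` (version 3.24 or later).

```python
import sqlite3


def upsert_customer(conn, customer_id, email):
    """Insert the row, or update the email if the primary key already exists."""
    conn.execute(
        """
        INSERT INTO customers (customer_id, email)
        VALUES (?, ?)
        ON CONFLICT(customer_id) DO UPDATE SET email = excluded.email
        """,
        (customer_id, email),
    )


# Hypothetical in-memory table used only for this illustration.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (customer_id TEXT PRIMARY KEY, email TEXT)")

upsert_customer(conn, "c-1", "old@example.com")    # key is new: row is created
upsert_customer(conn, "c-1", "new@example.com")    # key exists: row is updated
upsert_customer(conn, "c-2", "other@example.com")  # key is new: row is created

rows = conn.execute(
    "SELECT customer_id, email FROM customers ORDER BY customer_id"
).fetchall()
```

After the three calls the table holds two rows, with `c-1` carrying the most recently written email.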

To do this “right” at scale, two concepts are non-negotiable:

- Deduplication: Detecting and discarding repeated copies of the same record before they reach the storage layer.

- Idempotency: Ensuring that performing an operation multiple times has the same effect as performing it once.
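The two ideas can be sketched together in a few lines of Python. This is an illustration under stated assumptions, not a production design: it assumes every event carries a unique `event_id` assigned by the producer, and the `Event` and `DedupingSink` names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    event_id: str  # assumed to be unique per logical event
    key: str       # primary key of the record being upserted
    value: int


class DedupingSink:
    """Drops events whose ID was already seen, then applies a keyed overwrite."""

    def __init__(self):
        self.seen_ids = set()  # deduplication state
        self.table = {}        # stand-in for the storage layer
        self.writes = 0        # number of writes that reached storage

    def process(self, event):
        if event.event_id in self.seen_ids:
            return False       # duplicate: never reaches storage
        self.seen_ids.add(event.event_id)
        # Overwriting by key is idempotent: replaying it leaves the same state.
        self.table[event.key] = event.value
        self.writes += 1
        return True


sink = DedupingSink()
batch = [
    Event("e1", "acct-1", 100),
    Event("e2", "acct-2", 250),
    Event("e1", "acct-1", 100),  # a retry of the first event
]
results = [sink.process(e) for e in batch]
```

The retried event is rejected before it touches the table, so only two writes occur. Note that the set of seen IDs grows without bound in this sketch; a real pipeline would need to expire old entries.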

The Scalability Wall: Why Businesses Struggle

Most businesses start with simple batch updates, but as they move toward real-time insights, they hit a wall. In a distributed streaming platform (such as Kafka or Kinesis), ordering is typically guaranteed only within a single partition or shard, so events can arrive out of order or more than once. This leads to several critical issues:

- Late-Arriving Data: An older version of a customer’s profile might arrive after a newer version. If the system blindly upserts, it “downgrades” the data to an incorrect, stale state.

- The “Double Bubble” Problem: During system spikes or restarts, producers often resend batches. Without a robust state store to track what has already been processed, the downstream database suffers from bloated storage and inaccurate analytics.

- Performance Bottlenecks: Checking for the existence of a record in a multi-terabyte table before every single write is computationally expensive. Traditional databases often slow to a crawl under the high-IOPS (input/output operations per second) demand of a true streaming upsert.
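One common way to guard against the late-arriving data problem is to attach a version (or event timestamp) to every record and let the upsert apply only when the incoming version is newer. The sketch below uses Python's `sqlite3` module; the `profiles` table, the `version` column, and the `guarded_upsert` helper are hypothetical, and it assumes producers supply a monotonically increasing version per key.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE profiles ("
    "customer_id TEXT PRIMARY KEY, tier TEXT, version INTEGER)"
)


def guarded_upsert(conn, customer_id, tier, version):
    """Upsert that ignores any event older than (or equal to) the stored version."""
    conn.execute(
        """
        INSERT INTO profiles (customer_id, tier, version)
        VALUES (?, ?, ?)
        ON CONFLICT(customer_id) DO UPDATE SET
            tier = excluded.tier,
            version = excluded.version
        WHERE excluded.version > profiles.version
        """,
        (customer_id, tier, version),
    )


guarded_upsert(conn, "c-1", "gold", 2)    # newer version arrives first
guarded_upsert(conn, "c-1", "silver", 1)  # stale version arrives late: ignored
guarded_upsert(conn, "c-1", "gold", 2)    # replayed batch: no change

final_row = conn.execute(
    "SELECT customer_id, tier, version FROM profiles"
).fetchone()
```

The stale event cannot downgrade the row, and the replayed event leaves it unchanged, so the write is both order-tolerant and idempotent. Because the conflict is resolved against the primary-key index inside a single statement, there is no separate read-before-write round trip.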

Mastering the Stream with IOblend

IOblend solves the complexity of streaming upserts by shifting the heavy lifting away from the database and into a high-performance, “AI-Forward” data engineering tier.

Instead of writing complex, custom Spark or Flink scripts to manage state and watermarking, IOblend provides a unified interface to handle real-time data synchronisation. It natively manages:

- Automated Deduplication: Identifying and discarding redundant events at the ingestion point to save on downstream costs.

- Stateful Processing: Ensuring idempotency by keeping track of the latest version of every record, regardless of the order in which its updates arrive.

- Schema Evolution: Seamlessly handling changes in data structure without breaking the streaming pipeline.
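For readers curious about what "managing state and watermarking" involves when done by hand, the following is a generic Python sketch of watermark-bounded deduplication. It is not IOblend's implementation or API; the `WindowedDeduper` class and its `allowed_lateness` parameter are hypothetical names, and event times are assumed to be plain integers.

```python
class WindowedDeduper:
    """Remembers event IDs only while they are within the lateness window."""

    def __init__(self, allowed_lateness):
        self.allowed_lateness = allowed_lateness
        self.watermark = 0  # highest event time seen so far
        self.seen = {}      # event_id -> event_time

    def accept(self, event_id, event_time):
        # Events older than the window can no longer be checked against
        # state that has been evicted, so they are rejected outright.
        if event_time < self.watermark - self.allowed_lateness:
            return False
        if event_id in self.seen:
            return False    # duplicate inside the window
        self.seen[event_id] = event_time
        self.watermark = max(self.watermark, event_time)
        # Evict IDs that have fallen behind the window to bound memory use.
        cutoff = self.watermark - self.allowed_lateness
        self.seen = {eid: t for eid, t in self.seen.items() if t >= cutoff}
        return True


deduper = WindowedDeduper(allowed_lateness=10)
decisions = [
    deduper.accept("a", 100),  # first sighting
    deduper.accept("a", 100),  # immediate retry
    deduper.accept("b", 95),   # slightly late but inside the window
    deduper.accept("c", 120),  # advances the watermark and evicts old IDs
    deduper.accept("a", 100),  # now older than the window
]
```

The trade-off is explicit here: a wider window tolerates later data but holds more state, while a narrower one saves memory and rejects more stragglers.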

By using IOblend’s advanced CDC (Change Data Capture) and streaming capabilities, businesses can move from fragile, “bolt-on” deduplication to a resilient, enterprise-grade data mesh that guarantees accuracy at any scale.

Don’t let duplicate data dilute your insights. Streamline your future with IOblend.
